Evaluating Traffic Safety Campaigns: A Guide (2025)

Chapter: 3 Surveys

CHAPTER 3

Surveys

Using surveys to evaluate traffic safety messaging can be a complex, time- and resource-intensive approach, but can also gather valuable data from a much wider group than focus groups. This can lead to more generalizable results that can provide a more accurate picture of the reach and impact of a given traffic safety messaging campaign.

There are several different options, or modes, for conducting surveys. These include the following:

- Pen-and-Paper Personal Interview (PAPI). Typically, PAPIs are self-administered surveys mailed to respondents, filled out in person by respondents, and mailed back to evaluators.

- Computer-Assisted Web Interviewing (CAWI). CAWI is a web-based survey that respondents access through a URL shared via email, on a website, via text or social media, or on a personal electronic device like a tablet, laptop, or smartphone.

- CAWI-plus-mailing. CAWI-plus-mailing is a hybrid approach where respondents may be mailed a postcard or letter that provides them with a URL link to a CAWI survey.

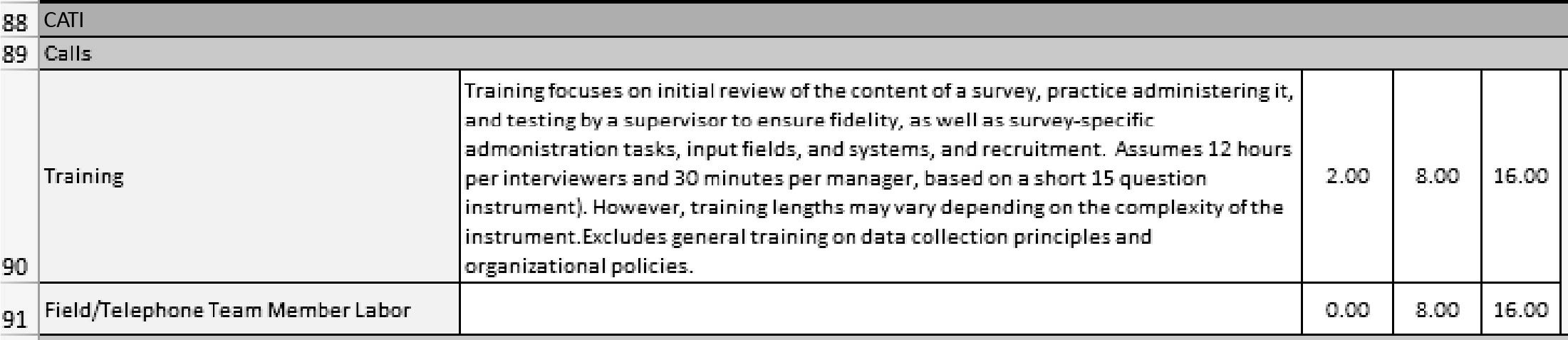

- Computer-Assisted Telephone Interview (CATI). CATIs involve interviewers calling respondents and asking them to answer questions over the phone.

- Intercept Surveys. These are surveys where interviewers approach respondents in public and ask them to answer questions, which the interviewers then record, either on paper or on a handheld electronic device like a phone.

Traffic safety practitioners may choose one or more of these modes to collect data via a survey. Each mode comes with its own benefits and challenges, and sometimes multiple modes are used to maximize benefits and minimize challenges. For instance, evaluators may use a web-based survey to reach younger members of an audience who are more technologically savvy and a CATI survey to capture data from older respondents who may be averse to filling out a survey online. The research team describes several key factors to consider when using surveys and when choosing which mode(s) to use. In addition, the team describes staffing roles that may apply to different modes and common steps to follow in planning for survey data collection, sampling, recruitment, administration, and analysis. The quick reference guide for the Survey Costing Tool is available in BTSCRP Web-Only Document 7.

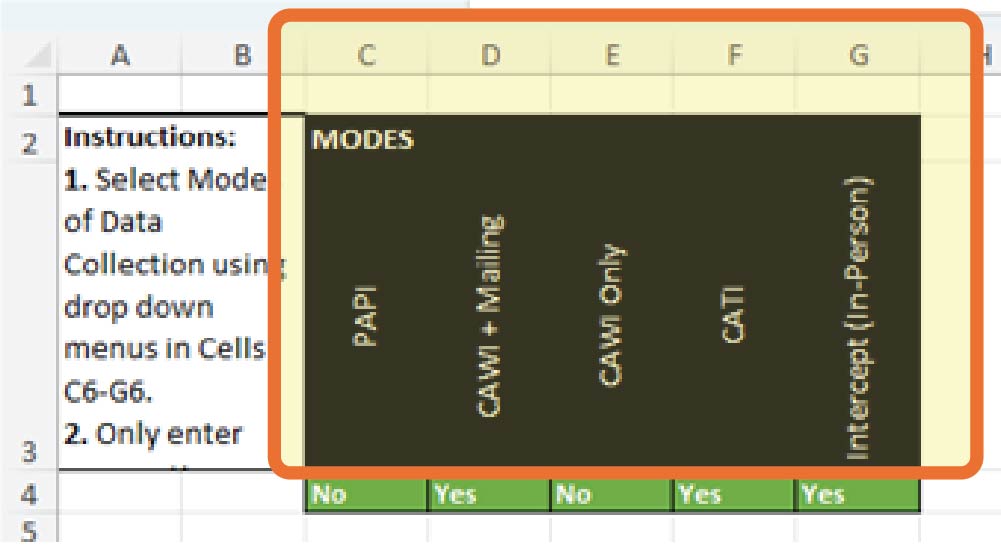

When using the Survey Costing Tool (Tab 2 in Appendix A) (see Exhibit 3.1), the selection of modes affects whether hours are included for specific staff roles and whether specific supplies and ODCs are included in the budget calculations. For example, the level of effort represented by the number of hours allotted for each step and activity for members of the core project team managing a survey from start to finish remains relatively static, but the number of hours for the sampling/methodology/analysis team or the IT support team varies depending on the number of modes used. On the other hand, the mailing, field, and telephone interviewer teams only need hours if those modes are being used. ODCs also change depending on the modes selected.

Long Description.

The tab reads '2 survey costing tool.'

Long Description.

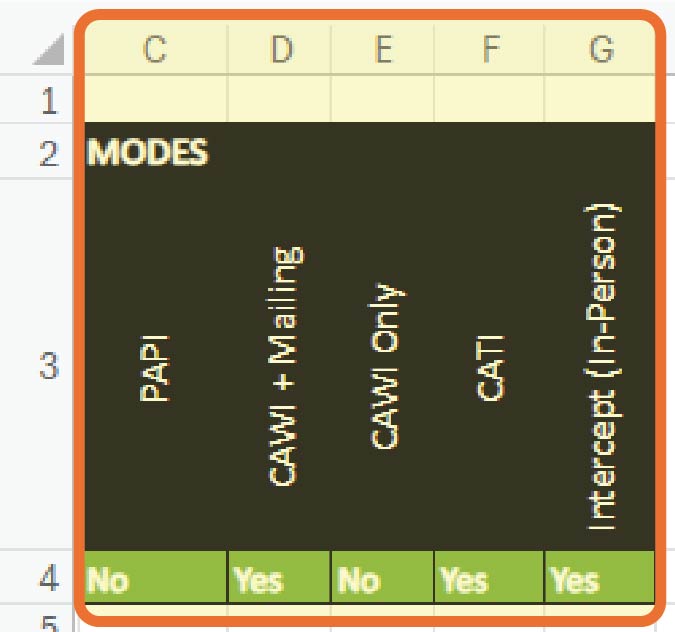

The selecting modes given below columns C through G are PAPI, CAWI plus mailing, CAWI only, CATI, and intercept (in-person).

Including a PAPI mode adds costs for purchasing envelopes and paper as well as printing, mailing, and specific data entry costs, but using a CAWI survey incurs software subscription costs, but not other ODCs. To automatically calculate total hours and costs based on which modes are being used, modes can be selected via the “yes” or “no” dropdowns for each mode in Cells C4–G4 in Tab 2 (see Exhibit 3.2). Cells C3–H3 label the relevant modes.

Staffing

Staffing is a key consideration when selecting surveys as an appropriate methodology. Your survey team needs a three-person project team that includes a leader (referred to as a subject matter expert in Appendix A, who is knowledgeable about the traffic safety behavior and/or evaluation), a project manager, who is responsible for the day-to-day oversight of the project, and a project support person. Often, the project manager can also play the role of the subject matter expert, but it may be helpful to have these roles filled by different people. In addition, the project team is often the same team responsible for initiating, planning, and, with the support of advertising agencies and media buyers, implementing the campaign. Thus, all three roles may be filled by traffic safety practitioners with varying levels of experience and seniority within an organization.

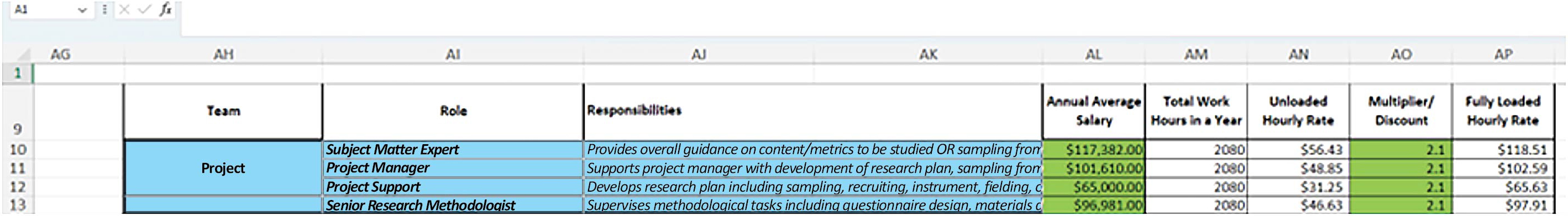

Appendix A, Tab 2, Survey Costing Tool, provides further information on the responsibilities for these positions (Cells AJ10–AJ12) as well as typical salaries for individuals in these roles based on Department of Labor statistics (Cells AL10–AL12), as shown in Exhibit 3.3. The research team recommends that SHSOs using this tool research annual salaries in their jurisdictions that are realistic for these roles and input them into the green cells in Column AL. Formulas in Columns AM and AN will calculate a basic hourly rate based on how many hours a given person is expected to work in a year. This rate can then be adjusted (or loaded) to include fringe benefits and overhead rates per organization by inputting an appropriate figure into Column AO. These hourly rates are then used to calculate labor totals per step in Cells AJ113, AJ114, AJ116, AJ118, and AJ119.

Long Description.

The column headers from AH to AP are team, role, responsibilities, annual average salary, total work hours in a year, unloaded hourly rate, multiplier or discount, and fully loaded hourly rate. The data are represented from rows 10 to 13.

However, surveys may require additional team members with specialized training and skills. While the team describes those roles and skills in the next few paragraphs, it is important to note that not all traffic safety practitioners will have the necessary knowledge and skills to fill these roles. Indeed, acquiring some aspects of the knowledge and skills described may take additional time for professional development, up to and including seeking academic qualifications (which come with added costs). Instead, traffic safety practitioners (based on their budget) have the option of contracting these roles and tasks to vendors who may include academic institutions or research organizations with experience conducting surveys.

One set of roles that requires specialized training and skills is the sampling methodology and analysis team. Depending on the complexity of the survey used to evaluate a traffic safety messaging campaign and the number of modes, this team may require up to six team members with varying degrees of experience and training, including senior, mid-level, and junior research methodologists and senior, mid-level, and junior statisticians. This group will be responsible for determining what size sample is needed to be able to generalize the survey findings to a broader audience (for example, all drivers in a given state) and what characteristics members should have for the sample to be representative of that larger group (e.g., in terms of age, race/ethnicity, and length of time driving). Likewise, this group will also be responsible for analyzing survey responses and identifying which results are statistically significant, that is, which results show an effect on road usersʼ awareness that the campaign may have had versus those that may be chance occurrences.

Another key team that may be involved is an IT support team. IT support is needed for all survey types: CAWI surveys that need to be programmed, PAPI surveys that require surveys to be printed and mailed to a database of potential respondents, CATI survey calls that are made to a random sample of telephone numbers, and data entry for PAPI, CATI, and intercept surveys. (Note: for CAWI surveys, respondents enter their own data.) This support may come from an existing team working at SHSOs and other organizations responsible for traffic safety, who may have some applicable skills and knowledge that could be used to support surveys. Some of the roles in the IT support team include an IT project manager, a survey programmer, a data solutions developer, a network systems engineer, someone responsible for quality assurance (QA), a senior software engineer (SSE), a systems architect, and a database analyst.

PAPI surveys and CAWI surveys that rely on an initial postcard or letter to introduce the survey also need a large mailing team that comprises a production manager and an assistant production manager responsible for QA as well as several production clerks and depending on the number of clerks, an additional supervisor or supervisors, as well as someone else responsible for monitoring and other QA. The production manager and assistant production manager/QA lead are responsible for planning the mail-out of introduction letters, pre-incentives, surveys, reminder letters, and thank-you/post-incentive letters as well as preparing for and managing the return of surveys that will then be sent for data entry. The production clerks and supervisors may be responsible for printing mailing addresses onto envelopes, stuffing the envelopes, and processing returned surveys and unopened mail that has been returned to sender, including removing those addresses with unopened mail from subsequent mailings of reminders. This role may be played by employees who are part-time workers. Cells AJ30–AP30 present the roles and responsibilities of production clerks. Cell AM30 can be used to calculate discounts for rates for this role by specifying the number of hours that part-time or full-time production clerks are anticipated to work (Exhibit 3.4). Full-time hours for this role are assumed to be 2,080 hours per year, but this can be reduced as needed.

Like the sampling methodology and analysis team, it may be possible for SHSOs to contract with a vendor to provide mailing and printing services if they are supplied with a database of addresses and all the text of the materials to be mailed.

Long Description.

The column headers from A H to A P are team, role, responsibilities, annual average salary, total work hours in a year, unloaded hourly rate, multiplier or discount, and fully loaded hourly rate. The data are represented from rows 27 to 34 in which row 30 is highlighted.

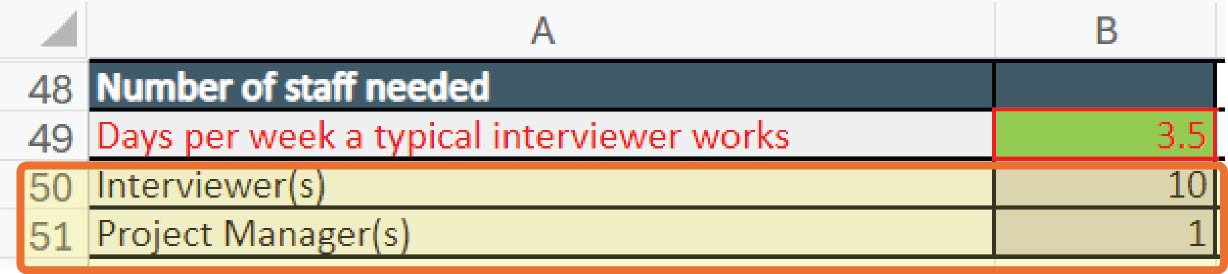

Like PAPI surveys, CATI and intercept surveys also require a large field team. Interviewers need to be trained on how to recruit members of the public to respond, either in person or over the phone, to ask questions in the survey, and to input answers from respondents consistently. Similar to mailing production clerks, interviewers may also be part-time employees. To calculate labor costs for part-time employees, the number of working hours for interviewers (Cell AM33) can also be adjusted. Monitoring and QA for these types of surveys are important so that responses can be compared and contrasted accurately. In addition, given that not everyone approached in public or called on the phone will want to respond to a survey, it may take some time for these interviewers to recruit respondents and complete surveys. Keeping these teams on track requires a manager.

Like the project team, descriptions of the roles for each of the other teams have been provided in Column AJ (Cells AJ13–AJ34) in Appendix A, Tab 2, Survey Costing Tool (Exhibit 3.5). Typical salaries for individuals in these roles based on Department of Labor statistics are provided in Column AL (Cells AL13–34), which allow unloaded and loaded hourly rates to be calculated in Columns AN and AP (Cells AN13–34 and AP13–34, respectively) based the multiplier in Column AO that covers applicable fringe and overhead rates. Based on the hours per role for each step and activity in Columns C through AA, the spreadsheet calculates overall labor totals per step in Cells AJ113, AJ114, AJ116, AJ118, and AJ119. The research team recommends that SHSOs research and input realistic annual salaries and fringe/overhead rates in their jurisdictions into the green cells in Columns AL and AO to calculate more applicable overall costs.

Other Direct Costs

Just as different survey modes require different teams, different survey modes require different supplies and ODCs. For example, when budgeting for PAPI surveys, evaluators should include costs for the purchase of envelopes, paper, printing, and mailing costs:

- PAPI surveys may require larger envelopes for mailing the surveys as well as smaller envelopes for introductory letters, reminders, and thank-you letters.

- Printing for PAPI surveys included printing of surveys, introductory letters, reminders, and thank-you letters, as well as printing of mailing addresses on envelopes.

- Mailing costs for PAPIs should cover both outgoing and return mail and can include an address lookup service to ensure accuracy and avoid the costs of mailing materials to invalid addresses (for instance to businesses rather than individuals) as well as postage in the form of physical stamps or permit imprints, used for large volumes of mail, that are printed directly onto envelopes, but come with an annual cost for their use.

CAWI surveys that rely on postcards or letters to introduce the survey and send reminders also incur similar envelope and paper costs (or postcard costs), printing, and outgoing mail costs, but avoid return mail fees. However, costs for CAWI surveys (whether introduced by letter or postcard, or shared via email, social media, text, or other means) would include subscription costs for web survey platforms like SurveyMonkey or Qualtrics. While it is possible to have a skilled IT team build a web survey from scratch, it is often more cost-effective and convenient to purchase a subscription to an existing service. CATI surveys incur a per-minute telephone call cost, and intercept surveys that rely on paper forms incur similar costs to those for PAPI surveys for printing the surveys themselves as well as additional costs for hiring interviewers (advertisements and background checks), mileage, tolls, and parking for interviewers to travel to locations where they might expect to encounter eligible respondents. If intercept surveys are conducted using an electronic device, it may also be necessary to purchase laptops or tablets for the interviewers to use.

Long Description.

The column headers from A H to A P are team, role, responsibilities, annual average salary, total work hours in a year, unloaded hourly rate, multiplier or discount, and fully loaded hourly rate. The data are represented from rows 27 to 34. The entire sheet is highlighted.

Purchasing sample frames or access to existing sample frames may also be a cost to consider for all survey modes. This includes obtaining a list of addresses for address-based samples (ABS) for PAPI or CAWI surveys that involve introductory letters or postcards, or purchasing lists of telephone numbers for CATI surveys or email addresses for CAWI-only surveys if the URL will be distributed via emails or listservs. Other costs include fees for using optical character recognition (OCR) services to scan in PAPI surveys that have been completed and returned, or statistical software packages like SPSS, Stata, or R that are used to analyze data once it has been cleaned and entered. These packages can often be purchased on a subscription basis for an organization that allows multiple users to access the software.

The amount and form of incentives are another consideration. Incentives may differ based on mode. Incentives offered for CAWI surveys, especially those conducted with existing online panels (see Recruitment), can be lower but may be higher for CATI or intercept surveys, as respondents need to spend some time on the phone or in person with interviewers completing surveys. On the other hand, some PAPI surveys use pre-incentives to encourage respondents to agree to participate as well as post-completion incentives. Pre-incentives may be sent to a larger number of people who may not ultimately respond and thus should be lower than the post-incentive for the same survey to save on budget. Cash is typically the best form of incentive to offer, though some surveys use gift cards, either physical or virtual. However, it should be noted that there is often a fee associated with processing gift cards that should also be accounted for in the budget.

These and other costs beyond labor are referred to as ODCs. They are included in Tab 2, Survey Costing Tool, of Appendix A in Column AH (Cells AH58–AH110), with notes on sample costs and the nature of costs in Column AJ (Cells 58–110), as shown in Exhibit 3.6. Like with the salaries in Cells AL10–AL34, SHSOs are encouraged to research more local costs for these ODCs and input them into the green cells in Column AJ between Row 58 and Row 110. This will allow the spreadsheet to calculate more specific jurisdictional level costs for each activity and step in Column AC and provide more accurate total ODC costs per step in Cells AJ 115 and 117.

Like other forms of evaluation, using surveys to measure the effectiveness of traffic safety messaging campaigns involves a series of steps (Exhibit 3.7). This series of steps begins with designing the study, developing an instrument, selecting the mode(s) for the survey, and defining and drawing a sample. The next step involves obtaining clearance to collect data from human subjects. Thereafter, depending on the mode of data collection, the subsequent step is to enlist IT support (which applies regardless of mode). Then, recruitment, fielding, and administration of the survey begin. After the fielding period closes, the final steps are to prepare for data analysis, which may involve activities like cleaning and coding the data, analyzing that data, and drafting and finalizing a report. Projected labor hours for each role in these steps are reflected in Tab 2, Survey Costing Tool, in Appendix A. The hours for many of these steps do not change based on the number of people who respond to the survey, but they may increase as the number of modes increases. However, other costs are reliant on the number of respondents sought and the response rate associated with that mode.

Survey Development

Step 1. Design Study

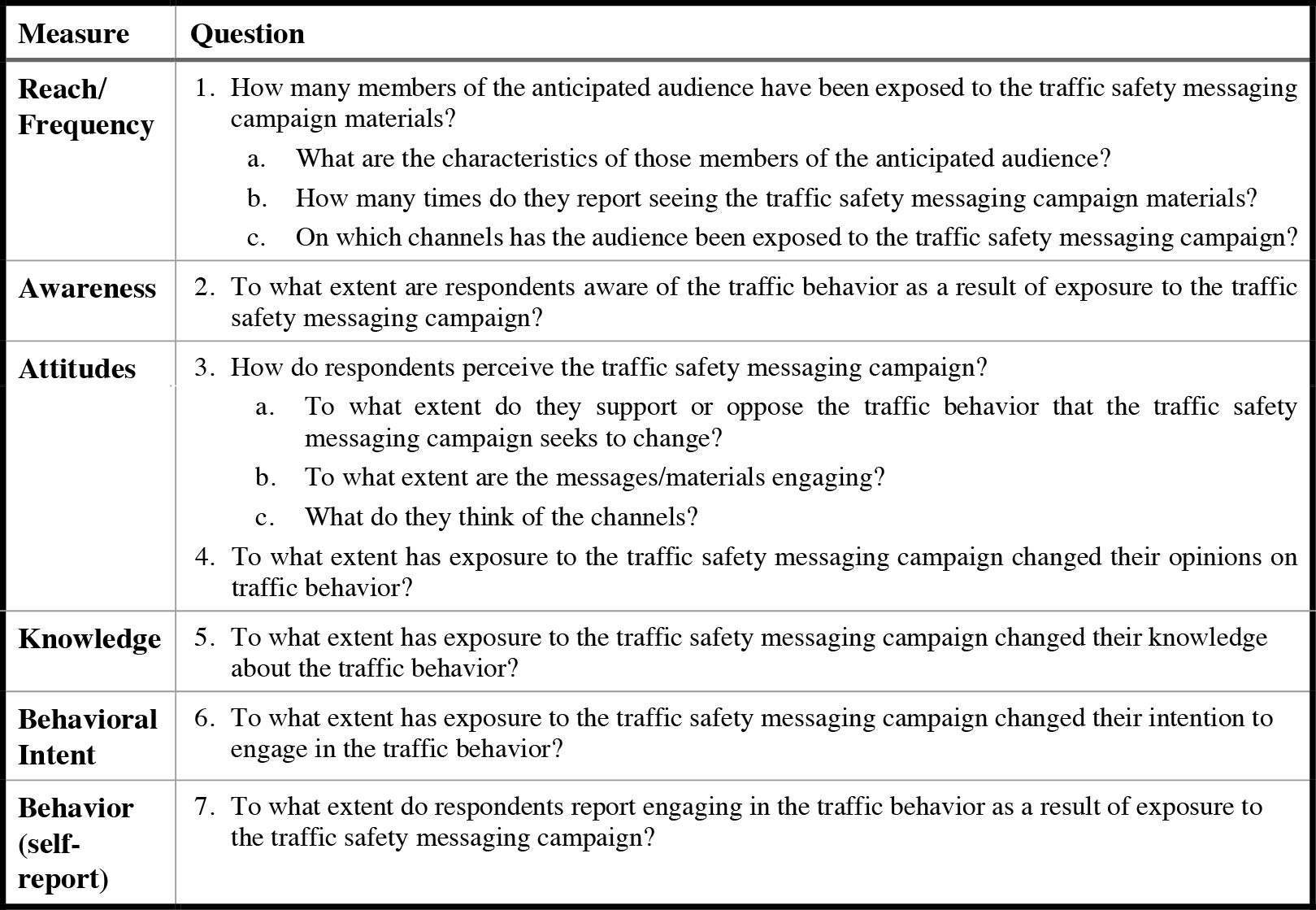

Assessing traffic safety messaging campaign effectiveness using surveys requires developing research goals and research questions for the study, which then influences the questions to ask in the survey and the types and numbers of potential respondents to recruit. These research goals and questions should focus on measures like reach, frequency, awareness, attitudes, and effectiveness. Exhibit 3.8 lists some common evaluation questions used to guide summative evaluations using surveys.

Long Description.

The column headers from AH to AJ are ODCs, notes, and costs. The data are represented from rows 58 to 110. The row headers provided in between are PAPI: pricing, envelopes, mailing services such as stuffing envelopes and postages, outgoing postages, return mail; CAVI plus Mail: pricing (postcard), envelopes, mailing services such as stuffing envelopes and postages, outgoing postages; CAVI: subscription; CATI: calls; Intercept; incentives.

Long Description.

Step 1 is survey development. Step 2 is survey administration. Step 3 is preparation for data analysis.

The selected research goals and questions guide the development of the survey instrument using existing survey items or developing new ones. Likewise, these research goals and questions can also inform the sampling plan for a survey, that is, the particular features of the people who should complete the survey and how many of them are needed.

Step 2. Develop Survey Instrument

Development of a survey instrument, also referred to as a questionnaire design can rely on common metrics used to evaluate traffic safety messaging campaigns, such as reach, awareness, attitude, or behavior change (see Exhibit 3.8) as well as existing survey questions that have already been validated (shown to work elsewhere to accurately capture the necessary data). Typically, survey questions are close-ended, offering respondents a limited number of responses to choose

Long Description.

The column headers of the table are Measure and Question. The data given in the table row-wise are as follows: Row 1: Reach or Frequency: 1. How many members of the anticipated audience have been exposed to the Traffic Safety Messaging Campaign materials? a. What are the characteristics of those members of the anticipated audience? b. How many times do they report seeing the Traffic Safety Messaging Campaign materials? c. On which channels has the audience been exposed to the Traffic Safety Messaging Campaign? Row 2: Awareness: 2. To what extent are respondents aware of the Traffic Behavior as a result of exposure to the Traffic Safety Messaging Campaign? Row 3: Attitudes: 3. How do respondents perceive the Traffic Safety Messaging Campaign? a. To what extent do they support or oppose the Traffic Behavior that the Traffic Safety Messaging Campaign seeks to change? b. To what extent are the messages or materials engaging? c. What do they think of the channels? 4. To what extent has exposure to the Traffic Safety Messaging Campaign changed their opinions on the Traffic Behavior? Row 4: Knowledge: 5. To what extent has exposure to the Traffic Safety Messaging Campaign changed their knowledge about the Traffic Behavior? Row 5: Behavioral Intent: 6. To what extent has exposure to the Traffic Safety Messaging Campaign changed their intention to engage in the Traffic Behavior? Row 6: Behavior (self-report): 7. To what extent do respondents report engaging in the Traffic Behavior as a result of exposure to the Traffic Safety Messaging Campaign?

from. These are easier for respondents to complete quickly and make data entry, coding, and analysis easier for the project team. Surveys rely on multiple-choice questions, check-all-that-apply questions, and even scales where respondents indicate the degree to which they agree or disagree with a statement or the likelihood of a given behavior being enacted. However, surveys can also include questions that ask respondents to rank or order different responses, as well as questions that ask respondents to compare their current perceptions of campaign materials or behaviors with their perceptions of the same items/activities in the past. Surveys can also include questions where the answer to one question determines whether the respondent sees a particular set of questions next or skips those questions (referred to as skip logic); these relationships between questions also need to be programmed.

For instance, saying “yes” to having seen the traffic safety message campaign materials on a billboard leads to the respondent being asked questions about their perceptions of the billboard, by saying “no,” they skip over the questions about the billboard and move onto questions about seeing other types of campaign materials, such as a television advertisement. Skip logic can increase the complexity of a survey, but it can also decrease the length of the survey for respondents who meet certain conditions, making it more likely that they will complete the survey. Skip logic applies to all survey modes. On paper, there would be instructions for respondents to skip certain questions and to move on to a specific question depending on their answer, but when the same survey is conducted over the phone or in person as an intercept survey, the interviewers would see those instructions, but the respondents would not. Similarly, CAWI surveys on the web would be programmed so that the respondents see the appropriate question next without seeing the instructions or skipped questions. This can help with perceptions of survey length and complexity. Surveys that are too long or too complex may discourage potential respondents from taking the survey or not completing the survey if they begin taking it.

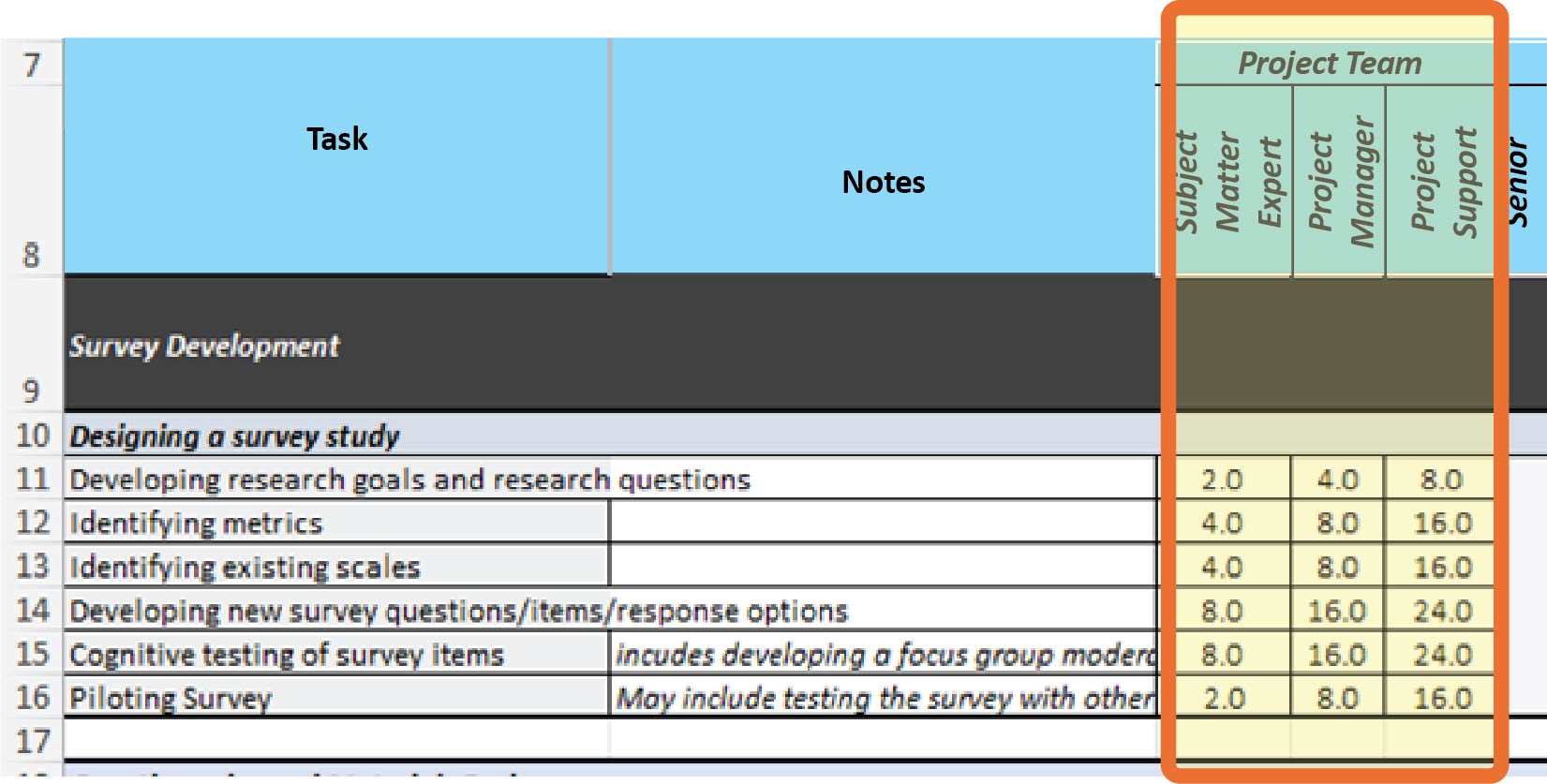

Relying on existing survey questions allows survey responses to be compared with similar survey results in other settings or at different times, which can help show changes over time or regional differences. As a first step, traffic safety practitioners should use the research goals and questions to select priority measures to focus on to keep the survey short. They may also want to review prior surveys used to evaluate traffic safety messaging campaigns to identify existing measures, scales, and survey items to either reuse or adapt. The quick reference guide, available in BTSCRP Web-Only Document 7, presents a generic campaign evaluation survey that can be adapted and used to evaluate a variety of different traffic safety messaging campaigns, with the sources of particular items listed where relevant. When adapting survey items or creating new ones, it may be helpful to consult with survey experts or colleagues to determine whether those items are easily understood. Anticipated hours for the project team, including the subject matter expert, the project manager, and the project support person for these first four activities within this step are detailed in Cells C11–C14, D11–D14 and E11–E14, respectively, in Tab 2, Survey Costing Tool, in Appendix A (see Exhibit 3.9). They are based on the assumption that the project manager is leading the work, with input and review from the subject matter expert, and that they will be directing the work of the project support person as they develop the first draft of the survey.

These three project team roles are also involved in questionnaire and materials design, but these activities also involve members of the sampling/methodology/analysis team, including three research methodology experts (also called methodologists) at different levels: a senior, mid-level, and junior research methodologist. Depending on the complexity of the survey, not all of these roles may be needed, but at least a team member with some experience designing and conducting surveys (i.e., a mid-level research methodologist) should be included in the evaluation team. If this expertise is not already available among the staff of the organization conducting the evaluation, it may be possible to contract with an independent consultant, a research organization, or an academic institution to access this expertise.

Long Description.

The column headers are task, notes, and project team. The project team column contains three sub-columns titled subject matter expert, project manager, and product support. Two headers given below are survey development followed by designing a survey study. The data are represented from rows 11 to 16. The columns under the project team are highlighted.

During the questionnaire and materials design phase, the project team consults with these members of the sampling/methodology/analysis team to decide on which mode(s) to use; which modes will be applied in which order (i.e., the flow of modes), for instance, beginning with a PAPI survey, but adding CATI and intercept modes at a certain time to increase responses and control sampling; and what incentive structure will be used (e.g., will the survey offer a pre-incentive, an early response incentive, or a post-incentive only, and will the amount vary by mode).

Likewise, these members of the sampling/methodology/analysis team will be able to provide input on how the questionnaire should be structured or ordered and on how different materials may be designed or produced, based on the mode(s) selected, to allow for responses (or non-responses) to be tracked, or to facilitate consistent data entry, later in the survey process. This includes advising on how letters, postcards, envelopes, and other materials should look, including wording, graphics, scratch-off areas for respondent ID codes or URLs, and whether pre-incentives should be visible through envelope windows. Projected labor hours for the project team and for these members of the sampling/methodology/analysis team are included in Cells C19–H21 in Tab 2, Survey Costing Tool (see Exhibits 3.10 and 3.11).

Long Description.

The column headers are task, notes, and a third column. The third column is divided into two sections titled Project Team and Sampling or Methodology. The project team column shows three sub-columns titled subject matter expert, project manager, and product support. The sampling or methodology contains three sub-columns titled research methodology, research methodology, and research methodology. Two row headers given below the column headers are survey development followed by questionnaire and materials design. The data are represented from rows 18 to 21. The columns under project team and sampling or methodology are highlighted.

Long Description.

The tab reads sampling support hours.

The project allotment of hours for the project team (Cells C19–E19) is fairly static across modes. However, the number of hours for the research methodologists varies by the number of modes being used. Hours for each type of mode per role are included in Tab 2A, Sampling Support Hours, in Appendix A (see Exhibit 3.10). Total hours for research methodologists across modes are automatically calculated when each mode is selected in Cells C4–G4 at the top of Tab 2 (see Exhibit 3.12). The project team will also consult with other members of this team to develop a sampling plan and frames (the next activity in this step after designing the survey study and designing the questionnaire), and later on (as part of the survey administration step) to monitor the sample as respondents complete surveys and make adaptations as necessary during fielding. However, while they provide valuable input on sampling and sample monitoring, these other members of the sampling/methodology/analysis team, including a senior, mid-level, and junior statistician, come into play during the analysis step.

When creating a survey, the survey mode has a substantial impact on the design of the questionnaire. As a reminder, typical survey modes include the following:

- PAPIs: Surveys are mailed to respondents.

- CAWIs: Respondents complete surveys online.

- CATIs: Surveys are conducted by interviewers over the phone.

- Intercept surveys: Interviewers collect data in person.

- CAWI-plus-mailing: Respondents are mailed a postcard or letter with a link to a CAWI survey.

Each mode comes with its own benefits and challenges. Some surveys rely on only one mode, while others use multiple modes, which can add to the complexity of a survey study but can also mitigate the challenges associated with a single mode. For example, PAPI surveys can be resource- and time-intensive as they require supplies like paper and envelopes, costs for printing and mailing surveys to respondents, and for respondents to mail surveys back. These costs can increase if introduction and reminder letters are used, but can also be mitigated if the organizations conducting the survey can rely on bulk mailing. PAPI surveys may also incur costs for data entry of completed surveys, whether this relies on automatic OCR technology or human

Long Description.

The selecting modes given below the columns C through G are PAPI, CAWI plus mailing, CAWI only, CATI, and intercept (in-person) out of which PAPI and CAWI only are given as no and the rest of them as yes.

beings to input the data. It may also be possible to reduce mailing costs by using the CAWI-plus-mailing approach if letters or postcards are mailed without the need to pay for return mail, and if postcards are used instead of letters, which require envelopes. On the other hand, CAWI-only surveys are often much cheaper to administer. Respondents enter the data themselves, and there are typically no mailing costs. However, CAWI surveys do require software for programming them and websites to host the surveys on, and costs associated with these elements or subscriptions to services that provide both programming and hosting.

CATI surveys may work best for respondents who are reluctant to spend the time filling out a paper survey and mailing it back, or who may not have access to the internet or the technological literacy to respond to a web-based survey. However, CATI surveys do require more staff and more staff time to administer, and they incur telephone costs that other surveys do not. CATI surveys may also require additional upfront effort from the project team to create scripts for the phone interview, lists of frequently asked questions for interviewers to use when answering questions from potential respondents about the survey, scripts in case they need to leave voicemails when calls are not answered, and protocols for how many times a given number will be called if there is no answer. For example, the protocol could call for up to three attempts to call a number to reach potential respondents and for interviewers to leave messages mentioning the post-paid incentive for completing the survey, to increase the likelihood of response.

In addition, CATI surveys need telephone numbers to call. Whether this relies on software to randomly dial telephone numbers or an existing list of verified telephone numbers, both come with a cost. Those costs may change depending on whether landlines or cellphone numbers are used, and the labor needed to make those calls can increase depending on how many respondents answer the calls from the interviewers and then choose or decline to respond. However, like with intercept surveys, potential respondents may find it harder to decline a person asking them to complete a survey than to ignore a survey mailed to them, emailed to them, or shared on the web. Intercept surveys also have the benefit of being able to be conducted in a place relevant to the questions being asked, for instance, near a billboard or bus shelter displaying campaign materials, or a location frequented by different types of road users. However, they may face additional costs for interviewer time, travel, and per diem. The relative costs of all of these modes are also influenced by whether an incentive (pre- and/or post-completion) is offered and by the typical response rates for each mode.

Another important consideration is the length of the fielding period, which is the time period during which the survey is in the field or available to the public to complete. This time period should be sufficient for the team to conduct outreach to and recruit potential respondents, to disseminate the survey to those respondents, for respondents to complete the survey, for several reminders, and for the completed surveys to be returned. The length of time is influenced by the speed of the method of dissemination and return. Mailed PAPI surveys take longer to reach potential respondents and therefore require a longer fielding period, whereas respondents can begin completing CATI, CAWI, or intercept surveys as soon as the team reaches out to them and recruits them. The other major factors in determining the length of the fielding period are the response rate for each mode and the number of survey completions sought. Fielding periods for those modes with lower response rates, for instance, PAPI and CAWI studies that rely on a letter or postcard to introduce the survey should be longer to allow more time to collect enough responses to meet the sample requirements.

Fielding periods for other modes (like CAWI surveys) can be shorter but should still allow time for several reminders to be shared in bi-weekly or weekly increments (and perhaps every 2 to 3 days in the last week) before the survey closes. The length of fielding periods for intercept CATI surveys is somewhere between these two ends of the spectrum and is based not only on the response rate and number of completions sought but also on the number of interviewers,

Long Description.

The tab reads intercept support hours plus ODC.

Long Description.

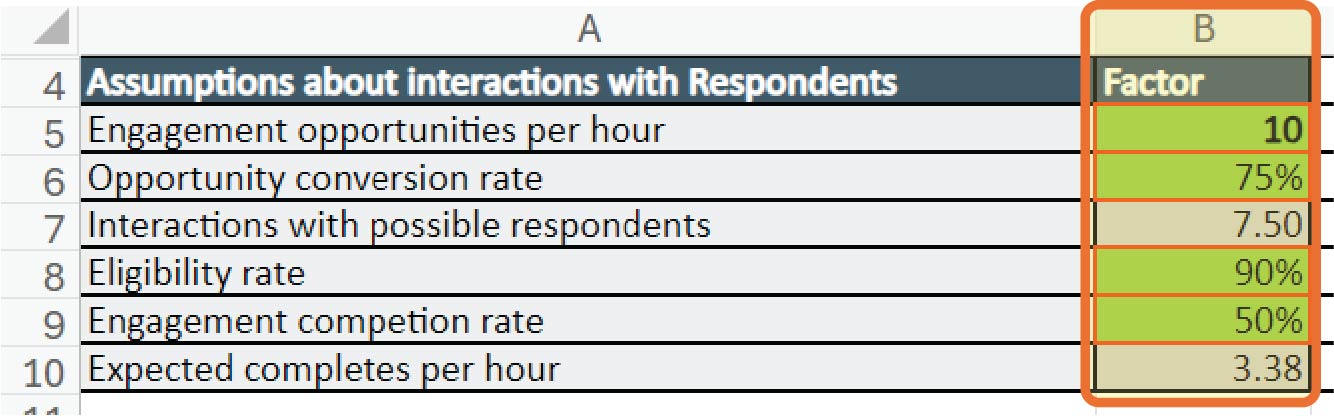

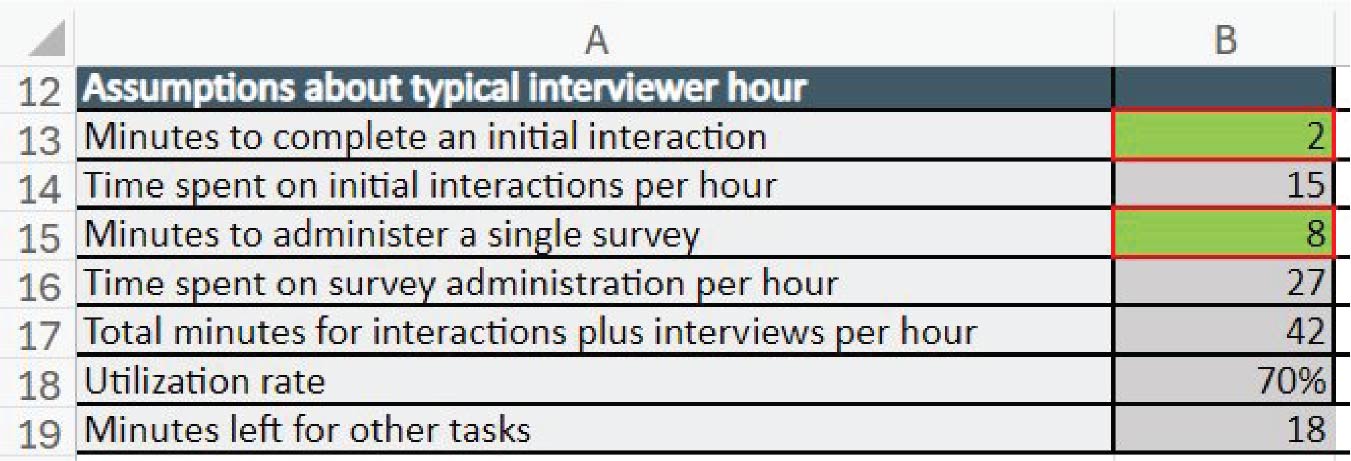

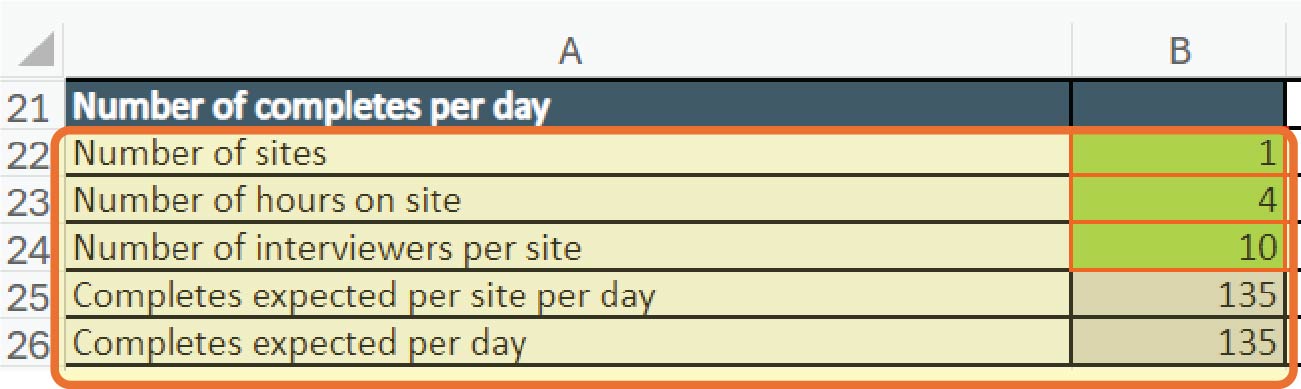

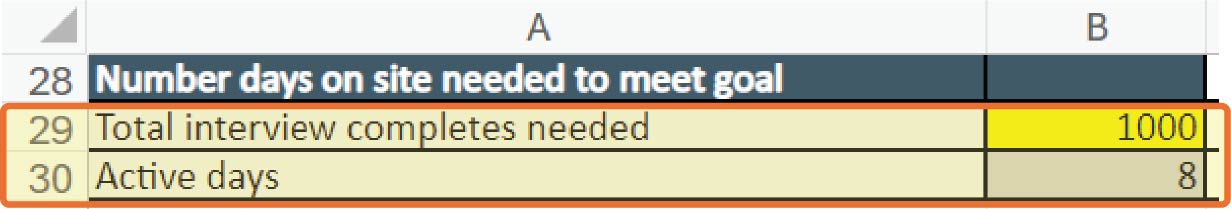

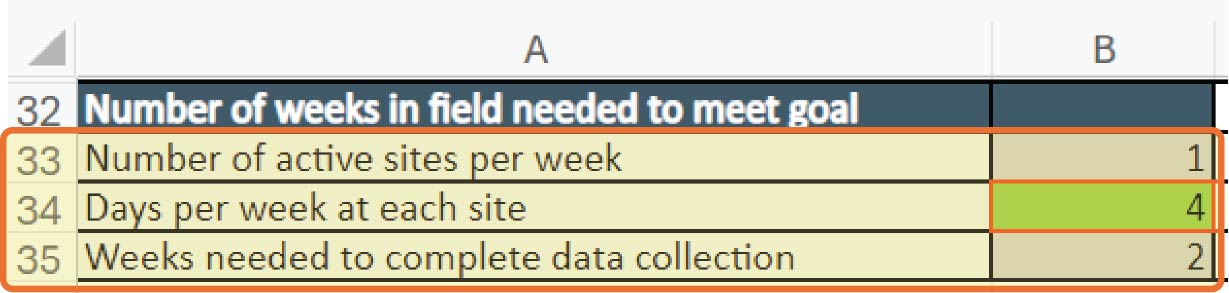

hours in the day that they can conduct interviews, the length of each interview, and for intercept surveys, by the numbers of sites where data is being collected. Tabs 2D, Intercept Support Hrs+ODC, and 2E, Telephone Support Hrs Calcs, provide green cells where assumptions about some factors can be input (see Exhibits 3.13 and 3.14).

These assumptions are used to calculate hours and ODCs per mode on each tab. If these modes are selected in Tab 2 (Cells C4–G4) (see Exhibit 3.12), the hours and costs from Tabs 2D and 2E are then updated in Tab 2. However, these assumptions can also be used to calculate fielding periods for these modes. The ideal number of days/weeks needed to complete intercept surveys to achieve a given number of survey completions appears in Cells B30 (days) and B35 (weeks) in Tab 2D (see Exhibit 3.15), and the number of days to complete sufficient CATI surveys via telephone to meet the same goal appears in Cell B33 in Tab E.

In addition to designing the instrument, another important activity in developing a survey study that uses CAWI as at least one of the modes is to program the instrument. Programming the instrument refers to transferring the questions from a static document that describes the questions and response options for each question into a web-based interface where respondents will be able to answer those questions. That interface may allow the survey to be rendered differently if it is accessed via a laptop or desktop computer or if it is accessed via a smartphone or tablet. In that interface, when building or programming a survey, selecting the types of questions may restrict respondentsʼ options for answering (e.g., limiting them to select only one option versus checking all that apply). Similarly, programming a survey also allows for skip logic to be applied. This makes the survey more complex, and it is important to have team members test the survey programming by completing it several times and selecting different answers each time that trigger different skip logic relationships between questions.

It may also be helpful to conduct cognitive testing of new/adapted survey items to make sure that potential respondents are interpreting and answering the questions as intended. Cognitive testing involves asking a small group of potential respondents a given question and interviewing them about their answer choices and the thought process they used. It includes developing an

Long Description.

The headers in column A, rows 28 and 32 are the 'Number days on-site needed to meet goal' and 'Number of weeks in the field needed to meet goal', respectively. The data on rows 30 and 35 are highlighted. They are Active days: 8 and Weeks needed to complete data collection: 2.

interview guide for testing, recruiting for interviews, conducting interviews, reviewing results, and revising the survey instrument/questionnaire. Tab 1, Focus Group Costing Tool, in Appendix A, can be used to budget for cognitive testing interviews. The research team recommends conducting 10 interviews to test new survey questions.

Finally, conducting a pilot of the survey is also an important part of this step. The pilot may include testing the skip logic as well as testing how long it takes for members of the survey sample to complete the survey (which may affect the incentive offered) by asking other staff or recruiting 20 to 30 potential respondents to complete the survey and provide feedback on their experience. For the latter, assume double the incentive for any mode being used. Piloting may reveal the need to reorder or cut questions depending on responses from the pilot group. Additionally, given that the survey will be collected from human beings, it needs to be reviewed by an IRB and receive human subjectsʼ research approval or exemption. However, hours for IRB review are not included, as it can be hard to estimate how much effort applying for IRB review will take because there is considerable variation in how different organizations handle this aspect of evaluation.

Step 3. Sampling

The next step in designing a survey study is to develop a sampling plan that describes how many and what types of respondents the study is looking for. Both the number of respondents and their characteristics affect the extent to which the survey results can be viewed as representative of the audience for the traffic safety messaging campaign. In terms of the types of people who are asked to complete the survey, the sample frame should reflect the audience that the campaign seeks to reach in terms of demographics like age, race/ethnicity, and gender. Therefore, if the campaign has been implemented on a statewide basis, the sampling should take into account the demographic makeup of the state and include similar proportions of respondents based on their demographics. Alternately, if the evaluation intends to understand how different groups of people within the state, based on their location (e.g., rural or urban, or specific counties), modes of transportation (e.g., drivers versus pedestrians), and demographics (if the campaign was targeted at younger, male drivers), the sampling plan should ensure that the appropriate proportions of each group are represented among the respondents, and the plan may call for oversampling some groups who may typically be harder to reach.

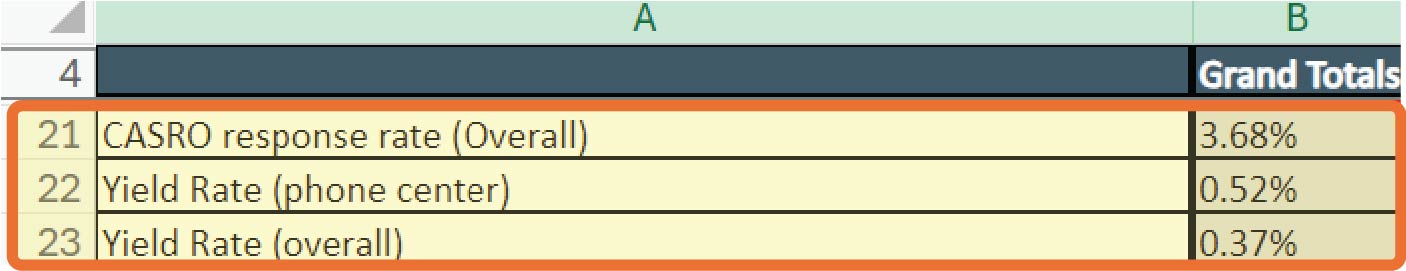

The size of the sample may also vary, based on how many responses it takes for the survey results to be considered representative and how likely members of the public are to respond to a survey, depending on the mode(s) used. For example, response rates for paper surveys range from 5% to 8%, depending on the type of sampling used, but can be as high as 25% to 30% if using social media to recruit for and share links to web-based surveys. In addition, the sample size can affect the ability to determine whether differences between different groupsʼ survey results are statistically significant (i.e., due to actual group differences rather than random chance) as well as the ability to detect statistically significant changes resulting from exposure to the campaign). Sampling can be a complex process that requires specific skills, experience, and qualifications, which SHSO staff may not have, and thus, SHSOs may want to consider contracting with a research organization or partnering with a local academic research institution to develop the sampling plan and draw a sampling frame.

Activities that need to be conducted to develop a sampling plan include identifying relevant respondent characteristics (e.g., age, gender, race/ethnicity, income, mode of transportation, area of residence) for design and weighting. These characteristics will be based on the characteristic audience for the campaign. Next, the team conducting the survey needs to identify and quantify the broader audience for the campaign or a population with the same relevant characteristics. This can involve comparing the characteristics of anticipated respondents to population, census,

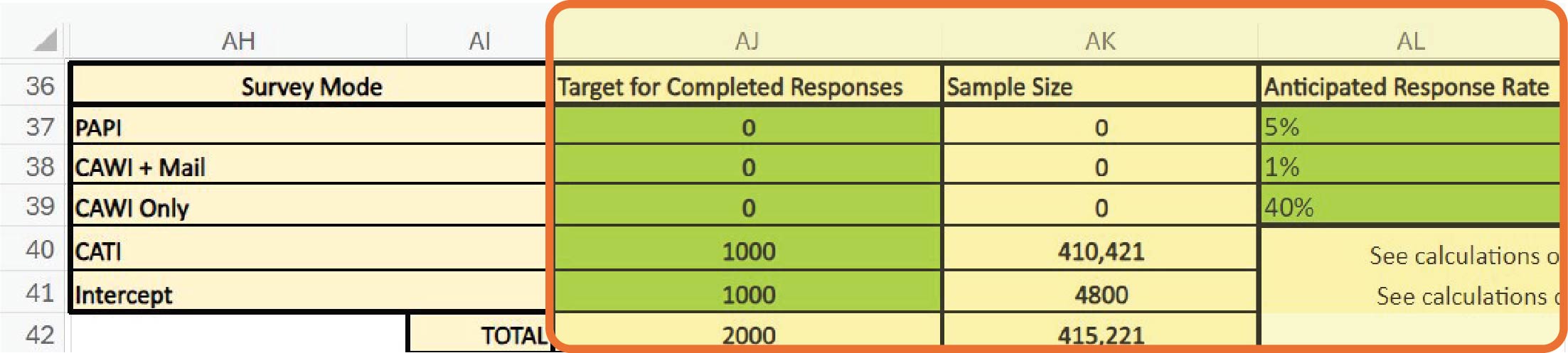

or Department of Motor Vehicles (DMV) data to identify how many members of the population fit those criteria. This informs the sample size and the numbers or proportions of members of specific subgroups within the sample. The sample size can also be informed by reviewing published evaluations of similar studies to determine what proportion of the population should complete the survey for the results to be representative as well as typical response rates per mode. This can help to identify the number of potential respondents that the survey should be sent to and the ideal modes for the survey. Users can input their targeted number for completed survey responses into Cells AJ37–AJ39 in Tab 2, Survey Costing Tool, and their anticipated response rates in Cells AL37–AL39 for PAPI, CAWI-plus-mailing, and CAWI-only surveys, respectively, to yield the total sample size they need in Cells AK37–AK39 (see Exhibit 3.16). Anticipated response rates may be based either on their research into local surveys or on the ranges recommended in the notes in Cells AM37–AM39.

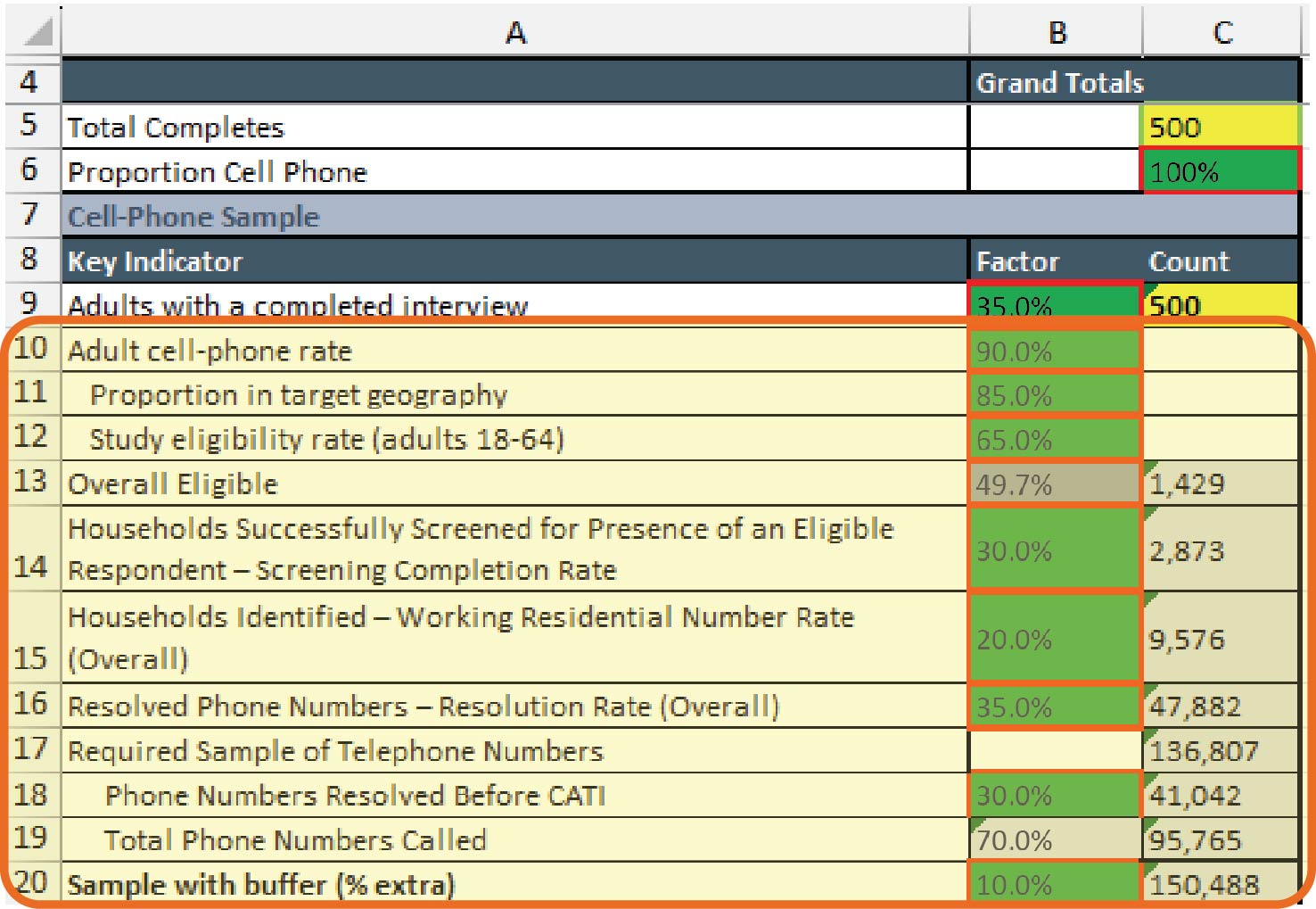

For intercept and CATI surveys, there are some additional factors to consider in determining sample size. For intercept surveys, these include foot traffic in a given area, likelihood of potential respondents stopping to talk to interviewers after being greeted, screener completion rates, eligibility rates, interviewer commute times, and planned days on-site. For CATI surveys, this can include the number of adults with cellphones or landlines, the number of residential numbers, and how many people answer the phone as well as screener completion and eligibility rates. Calculating the ideal sample size for intercept and CATI interviews begins with identifying the number of completed surveys sought for each mode in Cell AJ40 and AJ41 in Tab 2, Survey Costing Tool (see Exhibit 3.16). However, users should navigate to the respective tabs (Tab 2D, Intercept Support Hrs+ODC, and Tab 2E, Telephone Support Hrs Calcs), and input their assumptions into the relevant cells in green on those tabs to determine the sample sizes, which will be calculated based on those assumptions and reflected in Tab 2 in Cells AK40 and AK41.

Determining the sample size also involves determining the number of respondents in specific groups (or proportions of those groups relative to the total sample) who should be recruited to complete the survey. These proportions may need to be adjusted to account for groups who are known to be reluctant to complete surveys. Recruiting a higher proportion of members of those groups to ensure that enough responses from these groups are collected to be representative is referred to as oversampling.

The next steps involve determining the type of sample frames and sampling methods. Sampling frames refer to those members of the population that can be contacted and/or from whom information can be collected. These can come from the following:

- For PAPI/CAWI-plus-mailing surveys, via

- Existing lists of mailing addresses, maintained by various organizations, including state tax agencies or the Department of Motor Vehicles.

Long Description.

The column headers from A H to A L are survey mode, Target for completed responses, sample size, and anticipated response rate. The data are provided from rows 37 to 42. The columns A J through A L are highlighted.

-

- ABS, which provides a list of addresses within a given geographic area, often based on information from the U.S. Postal Serviceʼs (USPS) Computerized Delivery Sequence (CDS) file that contains all the addresses served by the USPS.

- For CATI surveys, via

- Existing lists of telephone numbers, which could come from similar sources to the lists of mailing addresses, or could come from vendors that supply a list of verified landline or cellphone numbers.

- Random digit dialing (RDD), where software is used to randomly generate 7- to 10-digit strings that could be potential telephone numbers, dial these, and connect an interviewer if someone answers. RDD number generation can be limited to specific area codes for a geographic focus, and landlines can be autodialed, but cellphone numbers must be dialed manually (according to law), and hence, evaluators may opt for pre-verified lists.

- For CAWI surveys, samples can come from existing lists of email addresses or targeted social media advertisements where social media platforms promote the survey to users of their platforms, from whom they have gathered demographic information.

Like sampling frames, sampling methods also vary. Sampling methods can be divided into probability and non-probability methods. The former assumes that members of a sampling frame are being randomly selected for outreach about the survey, while the latter focuses on reaching out to potential respondents who meet specific characteristics.

In terms of probability methods, approaches to selecting potential respondents include randomly selecting them on an individual basis or dividing the body of potential respondents into smaller groups, called clusters, based on preexisting criteria like their place of residence (e.g., neighborhoods, zip codes, or even towns and cities) and then randomly selecting among those clusters. The clusters should display similar diversity to the whole sample frame for this approach to be effective. This approach may apply to evaluating traffic safety messaging campaigns that seek to reach all adult drivers in a state (e.g., the sample frame is large and spread across a wide geographic area). Stratified sampling is another probability sampling method that begins by stratifying members of the sample frame according to specific criteria like age, race/ethnicity, gender, and place of residence, and then applying cluster or simple random sampling. This can ensure that the sample includes people with the relevant characteristics.

Options for non-probability samples include purposive sampling, where efforts are made to recruit specific types of respondents selected by the evaluators, to ensure that people with specific characteristics are included in the sample. Other non-probability sampling methods include convenience sampling, where respondents are included based on ease of access to them (e.g., based on existing relationships as well as their availability or presence in a given location, which is used for intercept surveys); or relying on members of the sample to self-select or volunteer to take the survey. Another non-probability option is snowball sampling, which is typically used when there is no list of people to randomly choose from (i.e., no sampling frame to apply). This often happens with hard-to-reach or mobile populations (e.g., people who move frequently or are not easily found). Snowball sampling means one person from the target group completes the survey then is asked to introduce the survey to others, and they are, in turn, also asked to share the survey.

Hours for the project team to engage in these activities are listed in Cells C24–E28 in Tab 2, Survey Costing Tool. Hours for the members of the sampling/methodology/analysis team to engage in these activities are included in Cell F24–K28 (see Exhibit 3.17). The allotted hours for the project team (Cells C24–E28) remain the same across modes, but the number of hours for the sampling/methodology/analysis team (Cells D24–K28) varies by the number of modes being used.

Long Description.

The column headers are task, notes, and a third column. The third column is divided into two sections. The first section is titled Project Team and contains three sub-headers titled subject matter expert, project manager, and product support. The second section is titled Sampling or Methodology or Analysis Team and contains six sub-columns titled research methodology, research methodology, research methodology, senior satisfaction, mid-level satisfaction, and junior satisfaction. Two headers given below are survey development followed by developing a sampling plan. The data are represented from rows 24 to 29.

Hours for each type of mode per role are included in Tab 2A, Sampling Support Hours, in Appendix A. Total Hours for sampling/methodology/analysis team across modes are automatically calculated and carried from Tab 2A into Tab 2 when each mode is selected in Cells C4–G4 at the top of Tab 2.

The final activity in developing a sample plan involves preparing load and mail files for PAPI and CAWI studies that rely on an initial introductory letter or postcard. This activity is not required for other modes. Surveys that involve mailing typically have a list of addresses (see ABS or list-based samples in Exhibit 3.17) that need to be loaded into a customer management database system that links addresses with unique participant identification numbers (PINs) so that outgoing and return mail can be tracked. The mailing team (or vendor if one is involved in mailing) needs access to these files to print and batch the letters, postcards, or surveys as well as reminder and thank-you letters. This step can also involve the creation of experimental variables to organize the surveys according to those PINs and conduct quality control tests to ensure that PINs and other identifiers for outgoing mail can be correctly linked to return mail. A junior statistician on the sampling/methodology/analysis team is usually responsible for creating and checking these files at the start of data collection or when a new batch of mail is sent.

Hours for the project and the sampling/methodology/analysis teams to engage in these activities are included in Cells C29–K29, with some additional hours for the mailing team involved in the preparation and loading of mail files in Cells T29–V29. The hours for the sampling/methodology/analysis and mailing teams are influenced by the number of modes being used for the survey and hours for each type of mode per role are drawn into Tab 2 from Tab 2A, Sampling Support Hours, and Tab 2C, Mail Support Hours (see Exhibit 3.18), depending on which mode is selected in Cells C4–G4 at the top of Tab 2 (see Exhibit 3.2).

Long Description.

The tab reads mail support hours.

Step 4. IT Support

Teams conducting surveys will likely need support from IT specialists, regardless of the mode of survey data collection:

- For PAPI surveys, IT professionals can help develop and manage databases of cases. These unique addresses make up the sample to which surveys will be sent. These databases are used to track which addresses have been sent surveys and reminders, which have responded, and

- which need to be sent thank-you letters and incentives. Likewise, IT support is needed for data entry for PAPI surveys so that the data collected from completed surveys can be correctly entered into databases that allow those responses to be analyzed, whether this is being done via OCR software or entered by hand by coders.

- For CAWI surveys, IT support is needed to program the web interface for collecting data, disseminate the survey, collect and clean the data that is gathered, and prepare it for analysis.

- Intercept surveys may also need a similar level of support if they are collecting data via laptops or tablets. In this case, data would be entered into a web interface by interviewers rather than by respondents. If responses are being collected on paper, an IT team would create similar data entry systems to those previously described for PAPI surveys.

- Likewise, CATI surveys would also need IT support to set up databases to track cases, including unique telephone numbers and whether telephone numbers called were still in use, whether they were connected to residences or businesses, and which callers had responded to the survey. The IT team will also need to create a web interface for interviewers to enter the data collected.

An IT support team includes several key roles:

- An IT project manager coordinates all activities to ensure the success of the project.

- A survey programmer is responsible for the design, development, launch, and maintenance of all questionnaires, including programming skips, branching, and tabular formatting for all modes of survey data collection.

- A data solutions developer is responsible for the output of the information collected for reporting, analysis, and delivery to the client.

- A network systems engineer is responsible for supporting and developing IT infrastructure for data collection, maintaining that infrastructure, monitoring its performance, troubleshooting, and diagnosing and resolving difficult and complex IT system problems.

- A QA analyst develops test plans and executes test scripts to ensure that new applications meet all defined requirements.

- A senior software engineer leads application developers and ensures business needs are coded properly and that the most applicable software programs and platforms are used to meet the business requirements.

- A systems architect is in charge of devising, configuring, operating, and maintaining computer and networking systems and will ensure the appropriate software and integrated systems are in place to foster quality automation.

- The database analyst works with other programmers, researchers, and statisticians to develop and maintain systems that extract data and prepare these data for delivery to internal and external clients, including designing and maintaining databases, managing large amounts of data, identifying, and establishing data relationships, and delivering well-organized, structured output for analysis.

Some of these roles, for instance, network systems engineers, software engineers, and system architects, already exist in organizational IT teams and are responsible for managing IT systems. Only some of these roles will be involved in survey data collection on an extensive basis, and not all roles will be needed for all modes. Typically, the bulk of the effort for IT support for surveys falls on the IT project manager and the survey programmer, who will liaise with the survey project team to set up the necessary systems for data collection and entry. Other roles come into play when there are more complex needs. Cells AH19–AK26 in Tab 2, Survey Costing Tool, in Appendix A, describe these IT support roles in additional detail (see Exhibit 3.5).

Tab 2B, IT Support Hours, describes the hours per role per mode for the activities needed to support survey administration. Some of these activities are conducted before surveys are fielded. This typically begins with an initial IT kickoff meeting where the survey project team meets with the IT project manager to discuss the specifications of any IT systems that need to be built. Thereafter, the IT team develops an IT project framework and process, where the actual systems

are built. For PAPI surveys, this step includes programming a receipt control module if needed, including a task to link received surveys to the cases or addresses to which it was sent, and doing some basic testing to make sure everything is working.

For CAWI, CATI, and intercept surveys, programming a receipt control module is part of the subsequent step, survey development, which also involves programming the survey instrument created in the prior steps. The next set of activities conducted before fielding focuses on quality automation, which involves developing or configuring an automated testing program to conform to the questionnaire and running the programmed questionnaire through automated quality testing. Automated testing can detect a wide variety of possible errors and is much more thorough than manual testing. Once it is configured, automated testing can be run an unlimited number of times, making it far more efficient than manual testing.

Other activities are conducted to support data collection during fielding and can include case management for intercept surveys as well as general data-collection support for all modes. Case management for intercept surveys involves developing an automated case management system for interviewers to keep track of their cases. General data-collection support from the IT team is needed for maintenance and troubleshooting if something goes wrong after data collection begins.

Tab 2B describes the hours per role per mode for each of these activities. The hours have been calculated, assuming a 6-week fielding period. There is no need to change the hours in any fields on this tab, and therefore it has been locked. The survey costing tab (Tab 2) will draw the hours indicated for each survey mode based on the selections in Cells C5–G5 on that tab and populate the appropriate cells for each phase (Cells L32–S35 for pre-fielding IT support, and L112–S113 for survey administration IT support), as shown in Exhibit 3.19.

Step 5. Recruit Respondents

For surveys, respondents are typically recruited and asked to complete the survey in the same step, though sometimes an introductory letter can be used in advance of a PAPI survey being mailed out. Recruiting survey respondents involves various methods tailored to reach diverse populations effectively. Teams may rely on randomized approaches like ABS or RDD, purposive, snowball, or convenience sampling. Some randomized approaches rely on sample frames based on existing lists of addresses or telephone numbers, which often have to be purchased from companies that collect and verify this information. In these instances, individuals are selected to be contacted using probability-based methods like simple random sampling, cluster sampling, or stratified sampling, all described in the Sampling Plan section.

Purposive sampling approaches can include paying market research companies for access to their existing pools of respondents who have already agreed to participate in surveys or other data-collection activities, or paying groups that represent particular types of audience members, for instance, healthcare providers, for access to their membership lists. Relying on existing databases like these streamlines identification and recruitment of potential respondents but may limit diversity in respondent profiles without additional outreach efforts. In addition to the cost of access to these databases, survey project teams should also account for labor hours spent identifying organizations like these (both market research companies and representative organizations), reaching out to them, establishing contracts or memoranda of understanding (MOUs), and monitoring recruitment via these organizations. Another purposive approach that SHSOs may be able to use involves leveraging DMV lists, which provide access to a broad demographic of licensed drivers. Using DMV lists offers a unique opportunity for SHSOs to target respondents based on traffic-related behavior (driving) in specific geographical areas or demographic groups pertinent to the projectʼs objectives. However, accessing DMV lists may present logistic challenges, such as ensuring data privacy compliance and obtaining updated contact information.

Long Description.

The column headers are task, notes, and a third column. The third column contains three sub-headers titled project team, sampling or methodology or analysis team, and I T team. The project team column contains three sub-headers titled subject matter expert, project manager, and product support. The sampling or methodology column contains six sub-headers research methodology, research methodology, research methodology, senior satisfaction, mid-level satisfaction, and junior satisfaction. The I T team contains eight sub-headers titled I T project manager, survey programmer, data solutions developer, network system engineer, assurance analyst, software engineer, systems architect, and database analyst. Two headers given below are survey development followed by pre-fielding I T support. Another header given in row 11 is survey administration I T support. The data are represented from rows 32 to 35 and 12 and 13. The sub-columns under the I T team are highlighted.

Social media platforms may also be effective for recruitment for convenience samples due to their large user bases and targeted advertising capabilities. For example, teams can deploy targeted advertising and posts to reach survey participants. The flexibility and reach of social media make it a versatile tool for recruitment, although managing engagement and ensuring the quality of responses can be a challenge. Additionally, costs vary based on advertising budgets and platform strategies, with additional expenses for content creation and monitoring.

Once recruitment methods are selected, they should be included in a recruitment plan that identifies how, where, and when respondents will be recruited as well as how many respondents and the criteria for inclusion based on the sample frames identified in the sampling plan and the mode of the survey. The plan should also detail what will be communicated to potential respondents, whether an incentive (and/or pre-incentive) will be offered, how much the incentive will be, and how many attempts will be made to reach potential respondents or remind them to take the survey. In addition, the recruitment plan should also describe how incoming responses will be screened to ensure representativeness. If a survey relies on an adaptive design, where recruitment and outreach methods change depending on the types of respondents who complete the survey during the fielding period to ensure representativeness or oversampling particular groups, these methods and the circumstances in which they will be applied can be included in the recruitment plan. It will also be helpful to create recruitment materials and include these in the plan. These include the images and text to be used in letters, postcards, social media advertising, emails, or phone/intercept scripts that describe the survey. The descriptions in these materials should include what topic the survey is asking about, who is eligible to complete the survey, the length of the survey, and what incentive is being offered. Considerations for incentivizing survey participation play an important role in recruitment effectiveness.

Offering incentives, whether pre- or post-survey completion, enhances response rates and participant engagement. Pre-incentives are provided upfront to encourage initial survey participation for PAPI surveys. These are typically cash, usually $1 to $2, depending on the complexity of the survey. For a campaign effectiveness survey, which may be less complex, $1 may be sufficient for a pre-incentive. Teams mailing out introductory letters with pre-incentives can often double the response rate by making the incentives visible through the mailing envelope window. Post-incentives, distributed after survey completion, ensure respondents fulfill their commitment and provide accurate data. These include cash rewards; gift cards for online shopping or grocery stores; or entry into prize draws, where merchandise is given away. Cash is best—while giveaways can attract interest, they may yield lower response rates compared to monetary incentives. Likewise, gift cards often come with a $1 to $2 processing fee that must be budgeted for. The amounts for post-incentives may vary slightly based on the mode of the survey, between $5 and $15 per survey. As described under pre-incentives, campaign effectiveness surveys may be less complex, and therefore, a $5 incentive for a two-page PAPI survey may be sufficient. This should be increased if the survey is more complex, if it is conducted in person via intercept surveys or over the phone via CATI surveys, respondents need to spend additional time responding to each question. This additional time should be reflected in a higher incentive.

Survey Administration

The following sections provide information on the administration of each mode of survey data collection and are thus not labeled as steps, given that evaluators can choose to implement multiple modes for the same survey. In addition, the information provided for some more resource-intensive modes, such as PAPI or CAWI surveys disseminated through an initial mailed letter or postcard, focuses on supplies and ODCs, with some minor discussion of labor hours for specific team members. For other, more time- or labor-intensive modes, for instance, CATI or intercept surveys, the detail provided describes the number of hours needed for specific roles, in particular, interviewers, and how those numbers are calculated, with less discussion on ODCs.

PAPI Surveys

Although this mode refers to PAPIs, these surveys are typically self-administered rather than interview-administered. This means that respondents complete the surveys on paper themselves by filling out a questionnaire and mailing it back to the organization that originally sent the survey. The PAPI mode is often used for longer surveys that may take more time to complete. Sampling for PAPI surveys may rely on either an ABS, which restricts mailing of surveys to random addresses within a particular geographic region, or location-based service (LBS), which relies on an existing database of addresses. Given that PAPI surveys are mailed out to potential respondents who have not already been recruited, the response rate can be low. However, it may be possible to recruit for these surveys using an introduction letter, with a pre-incentive, a few weeks before the actual survey is mailed. A pre-incentive is a small incentive offered with an introductory recruitment letter. This is effective in improving response rates. In addition, the response rate for PAPI surveys can be doubled by offering the pre-incentive in a way that makes it visible through a clear window on the mailing envelope. The pre-incentive ranges from $1 to $2, depending on the complexity of the survey. For traffic safety messaging campaign effectiveness surveys, an appropriate pre-incentive would be $1.

After mailing introductory letters, reminder letters can also be mailed to respondents who have not completed and mailed back a survey by a certain point. Once the survey is sent, respondents who complete the survey can then be mailed a thank-you letter and an incentive for completion, around $5.

PAPI surveys can be time-intensive and require a multi-person mailing team, including a production manager, an assistant production manager/operations analyst, several production clerks, someone to supervise those clerks, and someone whose role focuses on monitoring and other QA activities. Descriptions of all these roles are included in Tab 2, Survey Costing, in Appendix A, in Cells AJ27–AK31, with typical salaries based on Department of Labor categories in Cells AL27–AL31. This mode also tends to be more time-intensive for returns and post-fielding activities rather than fielding itself. The production manager is typically the only role allotted hours for fielding, including sending out an initial outreach communication and reminders. For post-fielding activities, the amount of effort for the production manager and other roles depends on whether letters or postcards are used to introduce the survey and send reminders, whether a printed survey is being mailed and returned to the senders (PAPI-only), or whether the introductory postcards/letters are being used to direct respondents to a web-based survey (referred to as postcard push-to-web or mail-push-to-web). All these options involve receipt control activities, but PAPI-only surveys may also involve hours for editing, computer-assisted data entry (CADE), and Fortran programming. Receipt control refers to tasks where production clerks log any mail that is returned, including both unanswered or unopened mail (which allows the team to remove those addresses from the sample database) as well as responses to initial outreach letters/postcards and completed surveys. The other activities are used for data entry from the PAPI surveys into electronic databases for analysis.

Users can use the dropdowns in Cells G4–O4 (see Exhibit 3.20) in Tab 2C, Mail Support Hours, in Appendix A to select the modes for survey dissemination, initial outreach, and reminders, which then calculate the appropriate hours for each mode in the table (Cells F10–O18 on the same tab), which in turn alter the hours for the mailing team on the main Survey Costing Tool tab (Tab 2), in Cells T29–V29, T47–T48, T82–Y83, T118–X124, and W125.

PAPI surveys can also be resource-intensive. Supplies for PAPI surveys include paper for introductory and reminder letters and surveys to be printed on as well as different sizes of envelopes. In addition, there are printing costs for the letters and surveys as well as printing addresses on envelopes. Cells AJ60–AJ64 describe average anticipated printing costs as well as the costs of envelopes for surveys and either introductory, reminder, or thank-you letters. Estimated survey

Long Description.

The column headers are survey dissemination mode, initial outreach, and reminder. Row 4 with yes or no responses for the surveys listed in the row above is highlighted.

printing costs assume a two-page survey with 10 questions at $0.10 per survey, with a 9″ × 12″ outer mailing envelope that costs $0.15. Introduction, reminder, or thank-you letters assume a single page with an estimated $0.05 printing cost with a No. 10 outer mailing envelope at $0.08. In addition, the surveys and letters must be inserted into the appropriate envelopes, and postage must be applied, which can also be time-intensive. However, some vendors can conduct this activity and provide other related services for a fee. For surveys, the current estimated cost per survey, assuming a two-page survey is inserted into a 9″ × 12″ envelope, is $2.25, and the costs per letter for single-page introductory, reminder, and thank-you letters, inserted into a No. 10 outer mailing envelope, are $0.50. These vendors also offer an address lookup service where batches of addresses from ABS are compared with a database of public records maintained by LexisNexis, the worldʼs largest electronic database for legal and public-records-related information, to ensure that the zip codes, county codes, and other address information are accurate. This can cost between $0.07 to $0.10 per record but allows organizations to mail surveys, letters, reminders, and pre-incentives to avoid the cost of mailing to inaccurate or incomplete addresses.

For budgeting, PAPI surveys must also take into account mailing costs for outreach and return mail. Outgoing mailing costs can increase depending on the number of pages in the survey, which affects the weight of the outbound mailings. Outgoing survey mailings often include return envelopes that are pre-stamped to ensure that the respondents are not incurring mailing costs themselves, especially as respondents will be unlikely to complete a survey if they have to pay to mail it themselves. Outgoing costs can be reduced somewhat by mailing in bulk (i.e., mailing a large number of surveys at the same time and using a permit imprint, which allows companies to print postage information directly onto envelopes or postcards rather than using individual stamps). However, companies have to pay an initial application fee of $320 to the USPS for the permit as well as an annual fee, also $320, to be able to use an imprint and receive special bulk pricing. They may also be able to purchase specialized machines that weigh and apply the permit stamp with the appropriate mailing costs, referred to as mailing meters. The current average costs for survey mailing have been estimated in Cell AJ74 as $1.46 based on pre-sorted mail (referred to as USPS presort), either stamped or metered, and assumes 9″ × 12″ envelopes (see Exhibit 3.21). Likewise, the cost for reminders and thank-you letters, which are assumed to weigh less, has been estimated at $0.62, which is also based on the average cost for USPS presort, first-class metered, and first-class stamped using #10 envelopes.

Likewise, return mail costs can be reduced by using preprinted business return envelopes (BREs) that also require a permit from the USPS to preprint business reply information on envelopes in place of a stamp. The envelopes are currently $0.14, but the fee for the permit and per-envelope mailing costs can vary depending on the total volume of returns anticipated. The current annual low-volume BRE fee for mailings anticipating fewer than 977 returns is $265,

Long Description.

The column headers given under AH through AI are ODCs, notes, and costs. The headers given below are PAPI: mailing services such as stuffing envelopes an postages, outgoing postages, and return mail. The data given in rows 77 and 78 are highlighted.

and the estimated mailing cost per envelope for a two-page survey is currently $1.71, assuming a 9″ × 12″ envelope. The annual high-volume fee is currently $1,065, including annual and separate quarterly fees with a per-envelope mailing cost, also assuming a 9″ × 12″ envelope is $1.01. The BRE low- and high-volume permit fee figures are included in Cells AJ66 and AJ67, and the per-envelope fees are included in Cells AJ77 and AJ78 (see Exhibit 3.21). These figures can be updated based on usersʼ more timely research. To calculate the correct amount, users should enter “0” in the low-volume fields (Cells AJ66 and AJ77). If using the high-volume service, they should enter “0” in Cells AJ67 and AJ78 if they are budgeting for the low-volume service (see Exhibit 3.21).

CAWI with Mailing

Postcards or letters may also be used to introduce and share an address or URL for a CAWI survey and to send reminders to respondents who have not started surveys. This mode is referred

to as CAWI-plus-mailing or mail-push-to-web. Like PAPI surveys, this mode incurs similar envelope and paper costs (or postcard costs), printing, and mailing costs for outgoing mail but avoids return mail fees associated with PAPI-only surveys.

Labor hours and ODCs for preparation of CAWI surveys, including programming and testing, are included under the Survey Development and Develop Instrument sections. ODCs for programming and fielding are described in the CAWI-only section. Labor hours for printing letters or postcards for the CAWI-plus-mailing for the project team appear in Cells D65–E67 in Tab 2, Survey Costing Tool, in Appendix A, and for managing incentives in Cells D79 and E79 (see Exhibit 3.22). ODCs for paper and envelopes, mailing services, and postage for introduction and reminder letters or postcards for this mode are included in Cells AB65–AC77 and calculated automatically based on inputs in terms of the number of completions sought (Cell AJ38) and the anticipated response rates (Cell AL38) which yield the sample size (Cell AK38) as well as ODCs input into Cells AJ83–95. Current estimates for paper, envelopes, postcards, mailing services, and postage are included in these cells. Users may update the values for each type of ODC in those cells based on their own research.

CAWI-Only Surveys