Reference Manual on Scientific Evidence: Fourth Edition (2025)

Chapter: Reference Guide on Epidemiology

Reference Guide on Epidemiology

STEVE C. GOLD, MICHAEL D. GREEN, JONATHAN CHEVRIER, AND BRENDA ESKENAZI

Steve C. Gold, J.D., is Professor of Law and Judge Raymond J. Dearie Scholar, Rutgers Law School, Rutgers University–Newark, Rutgers, The State University of New Jersey.

Michael D. Green, J.D., is Visiting Professor, Washington University in St. Louis School of Law.

Jonathan Chevrier, Ph.D., is Associate Professor of Epidemiology, Department of Epidemiology, Biostatistics and Occupational Health, School of Population and Global Health, Faculty of Medicine and Health Sciences, McGill University.

Brenda Eskenazi, Ph.D., is Professor Emeritus of Maternal and Child Health and Epidemiology, School of Public Health, University of California at Berkeley.

CONTENTS

The Different Kinds of Epidemiologic Studies

Experimental and Observational Studies of Suspected Toxic Agents

Types of Observational Study Design

Genetic and Molecular Epidemiologic Studies

Epidemiologic and Toxicologic Studies

Interpreting the Results of an Epidemiologic Study

Internal and External Validity

Sources of Error in Epidemiologic Studies

Information bias due to misclassification

Techniques to identify confounding factors

Techniques to prevent or limit confounding

Techniques to control for confounding factors

Biases That Affect a Body of Epidemiologic Evidence

Consideration of Alternative Explanations

Specificity of the Association

Consistency with Other Relevant Knowledge

Methods for Synthesizing or Combining the Results of Multiple Studies

Qualifications of Experts in Epidemiology

FIGURES

2. Design of a case-control study

3. Risks in exposed and unexposed groups

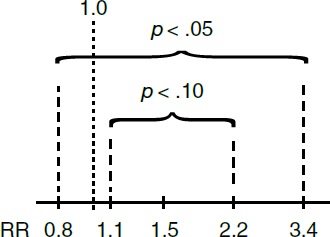

5. 90% and 95% confidence intervals for a hypothetical study with a relative risk of 1.5

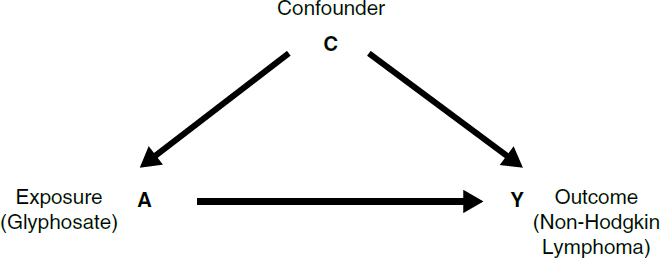

6. Directed acyclic graph (DAG) for a hypothetical study of glyphosate and non-Hodgkin lymphoma

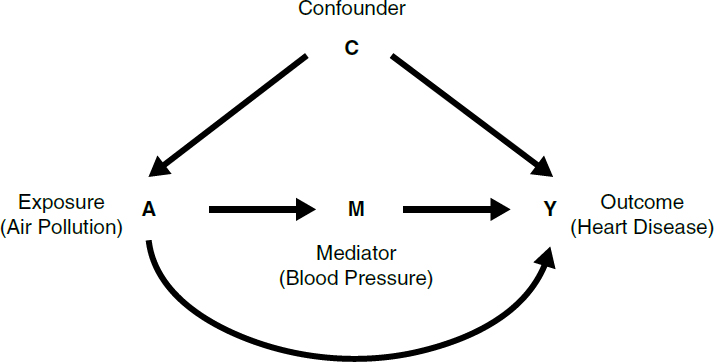

7. Directed acyclic graph for a hypothetical study of air pollution and heart disease

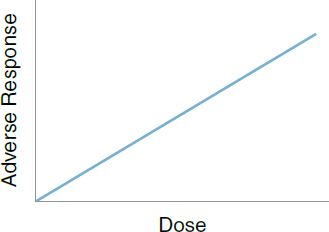

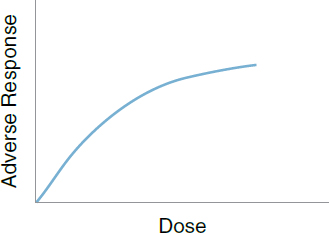

10. Linear threshold dose response

11. Supra-linear dose response

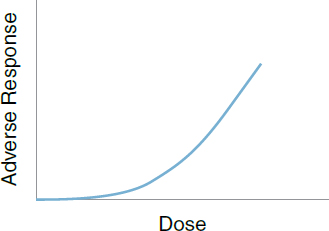

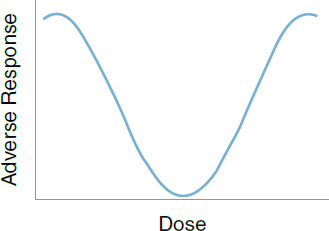

13. Non-monotonic dose response

TABLES

1. Cross Tabulation of Exposure by Disease Status

2. Cross Tabulation of Cases and Controls by Exposure Status

Introduction

Epidemiology is the field of public health and medicine that studies the occurrence, distribution, and determinants of health and disease in human populations. The purpose of epidemiology is to better understand disease causation and to prevent disease in groups of individuals.1 Epidemiology assumes that disease is not distributed randomly in a group of individuals and that identifiable subgroups, including those exposed to certain agents, are at increased risk of contracting particular diseases.2

Judges and juries increasingly are presented with epidemiologic evidence as the basis of an expert’s opinion on causation.3 In the courtroom, epidemiologic research findings are offered to establish or dispute whether exposure to an agent4 caused a harmful effect or disease.5 Since Daubert v. Merrell Dow

1. More generally, epidemiologists study and seek to improve health outcomes in populations; this may go beyond the study of “diseases.” For example, an epidemiologist might be interested in suspected causes of osteoarthritis of the knee (a health outcome that is a disease) and also in interventions thought to improve osteoarthritic knees’ range of motion (a health outcome that is not a disease). In this reference guide, we generally refer to the dependent variable being assessed in an epidemiologic study as a health outcome. In certain contexts, however, we use the term disease, because a plaintiff’s disease is the type of outcome most often at issue in cases in which epidemiology serves as evidence of causation. In any case, in the legal context, references to health outcomes or diseases should be understood, unless stated otherwise, as constituting adverse changes that are legally cognizable. In addition, in this reference guide we generally discuss outcomes as if they are discrete variables that either are present or absent (such as cancer), even though many health outcomes are continuous variables (such as blood pressure or body mass index).

2. Epidemiologists also conduct studies of beneficial agents that reduce disease risk or that prevent or cure disease or otherwise improve health outcomes.

3. Epidemiologic studies have been well received by courts deciding cases involving toxic substances. See, e.g., Norris v. Baxter Healthcare Corp., 397 F.3d 878, 882 (10th Cir. 2005) (“[E]pidemiology is the best evidence of general causation in a toxic tort case.”); Newman ex rel. Newman v. McNeil Consumer Healthcare, No. 10 C 1541, 2013 WL 9936293, at *10 (N.D. Ill. Mar. 29, 2013) (“Epidemiological studies are important . . . [and provide] ‘the primary, generally accepted methodology for demonstrating a causal relation between a chemical and a set of symptoms or a disease.’” (quoting Conde v. Velsicol Chem. Corp., 804 F. Supp. 972, 1025–26 (S.D. Ohio 1992), aff’d, 24 F.3d 809 (6th Cir. 1994))). Well-conducted studies are uniformly admitted. 3 Modern Scientific Evidence: The Law and Science of Expert Testimony § 23.1, at 321 (David L. Faigman et al. eds., 2021–2022) [hereinafter Modern Scientific Evidence].

4. We use the term agent to refer to any substance external to the human body that potentially causes disease or other health effects. Drugs, medical devices, chemicals, radiation, vaccines, pathogens (e.g., viruses or bacteria), and minerals (e.g., asbestos) are all agents whose toxicity an epidemiologist might explore. A single agent or a number of independent agents may cause disease, or the combined presence of two or more agents may be necessary for the development of the disease. Epidemiologists also conduct studies of individual characteristics that might pose risks, such as blood pressure and diet, but those studies are rarely of interest in judicial proceedings except as possible alternative causes to an alleged tortious cause. Epidemiologists also may conduct studies of drugs and other pharmaceutical products to assess their efficacy and safety in clinical trials.

5. E.g., In re Testosterone Replacement Therapy Prods. Liab. Litig. Coordinated Pretrial Proc., No. 14 C 1748, 2017 WL 1833173 (N.D. Ill. May 8, 2017) (assessing whether plaintiffs’ arterial

Pharmaceuticals,6 the predominant use of epidemiologic studies is in connection with motions to exclude the testimony of expert witnesses. Courts deciding such motions routinely address epidemiologic studies and whether they are sufficient to support an expert’s causation testimony.7

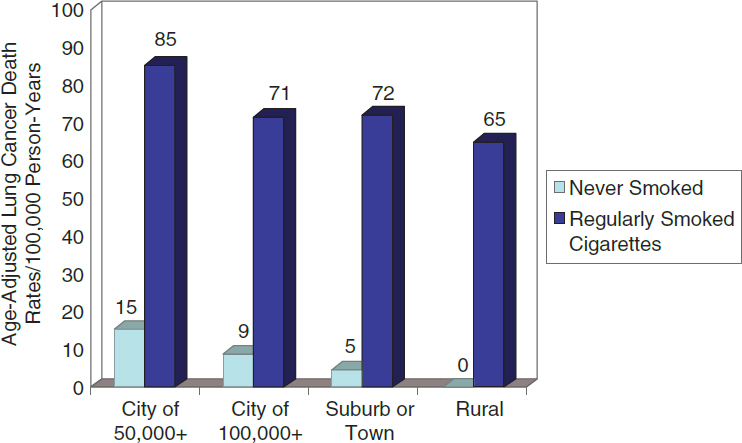

Epidemiology aims to develop evidence to identify agents that are associated with an increased risk of disease in groups of individuals, quantify the amount of excess disease that is associated with an agent, and attempt to provide a profile of the type of individual who is more likely to contract a disease after being exposed to an agent. Epidemiology focuses on the question of general causation (i.e., is the agent capable of causing disease?) rather than that of specific causation (i.e., did it cause disease in a particular individual?).8 For example, in the 1950s, Doll and Hill and others published articles about the increased risk of lung cancer in cigarette smokers. Doll and Hill’s studies showed that smokers

cardiovascular injuries or venous thromboembolisms were caused by prescription testosterone-replacement-therapy drugs); In re Mirena IUD Prods. Liab. Litig., 169 F. Supp. 3d 396 (S.D.N.Y. 2016) (assessing whether uterine perforation was caused by intrauterine devices); In re Lipitor (Atorvastatin Calcium) Mktg., Sales Pracs. & Prods. Liab. Litig., 174 F. Supp. 3d 911 (D.S.C. 2016) (assessing whether Lipitor caused the development of type 2 diabetes); In re E.I. du Pont de Nemours & Co. C-8 Pers. Inj. Litig., No. 2:13-MD-2433, 2015 WL 4092866 (S.D. Ohio July 6, 2015) (assessing whether water sources contaminated with ammonium perfluorooctanoate (C-8, or PFOA) caused residents to develop certain diseases, including testicular cancer and preeclampsia).

6. 509 U.S. 579 (1993).

7. See Michael D. Green & Joseph Sanders, Admissibility Versus Sufficiency: Controlling the Quality of Expert Witness Testimony, 50 Wake Forest L. Rev. 1057 (2015). Often the expert witness in a case in which a study bears on causation is not the investigator who conducted the study. See, e.g., DeLuca v. Merrell Dow Pharms., Inc., 911 F.2d 941, 953 (3d Cir. 1990) (holding a pediatric pharmacologist expert’s credentials sufficient pursuant to Fed. R. Evid. 702 to interpret epidemiologic studies and render an opinion based thereon); In re Deepwater Horizon Belo Cases, No. 3:19CV963-MCR-HTC, 2022 WL 17721595, at *13 (N.D. Fla. Dec. 15, 2022) (stating that non-practicing physician who worked in public health and biomedical research was qualified to testify about general causation); Donner v. Alcoa Inc., No. 10-CV-00908-DW, 2014 WL 12600281, at *5 (W.D. Mo. Dec. 19, 2014) (holding admissible a pathologist’s opinion about general and specific causation regarding exposure to aluminum dust and pulmonary fibrosis); Watson v. Dillon Cos., Inc., 797 F. Supp. 2d 1138, 1162 (D. Colo. 2011) (holding medical doctors’ opinions about general and specific causation regarding inhalation of butter flavoring ingredients in microwave popcorn and a rare lung condition were admissible); Sugarman v. Liles, 190 A.3d 344, 345–68 (Md. 2018) (holding admissible a physician’s testimony about general and specific causation regarding lead paint and cognitive injuries).

8. The distinction between general causation and specific causation is widely recognized in court opinions. See, e.g., Norris v. Baxter Healthcare Corp., 397 F.3d 878, 881 (10th Cir. 2005) (“Plaintiff[s] must first demonstrate general causation because without general causation, there can be no specific causation.”); Harrison v. BP Expl. & Prod. Inc., No. CV 17-4346, 2022 WL 2390733, at *4 (E.D. La. July 1, 2022) (“Once a plaintiff’s diagnoses have been confirmed, the plaintiff has the burden of establishing general causation and specific causation.”); Rhyne v. United States Steel Corp., 474 F. Supp. 3d 733, 743 (W.D.N.C. 2020) (“Plaintiffs must prove both general causation and specific causation.”). For a discussion of specific causation, see the section titled “Specific Causation” below.

who smoked 10 to 20 cigarettes a day had a lung-cancer mortality rate that was about 10 times higher than that of nonsmokers.9 These studies identified an association between smoking cigarettes and death from lung cancer that contributed to the determination that smoking causes lung cancer.

However, it should be emphasized that an association is not equivalent to causation.10 An association is the relationship between two events (e.g., exposure to a chemical agent and development of disease) that occur more frequently together than one would expect by chance. An association identified in an epidemiologic study may or may not be causal.11
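The definition of association can be made concrete with a short Python sketch. It compares how often exposure and disease occur together in a hypothetical population with how often they would be expected to occur together if the two were unrelated. All counts and the helper name `expected_joint_count` are illustrative assumptions, not data from any study. The sketch also ignores sampling error, which is treated separately under sources of error.

```python
def expected_joint_count(n_exposed: int, n_diseased: int, n_total: int) -> float:
    """Number of people expected to be both exposed and diseased if exposure
    and disease were independent, i.e., co-occurred only by chance."""
    return n_exposed * n_diseased / n_total


# Hypothetical population (illustrative numbers only).
n_total = 1000   # everyone in the population
n_exposed = 200  # people exposed to the agent
n_diseased = 50  # people with the disease
n_both = 20      # people who are both exposed and diseased

expected = expected_joint_count(n_exposed, n_diseased, n_total)
ratio = n_both / expected
print(f"observed together: {n_both}; expected by chance alone: {expected:.1f}")
print(f"observed / expected: {ratio:.2f}")
```

In this hypothetical, exposure and disease occur together twice as often as independence would predict, which indicates a positive association. As the text stresses, that finding alone does not show that the exposure causes the disease.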

Assessing whether an association is causal requires an understanding of the strengths and weaknesses of the study’s design and implementation, as well as a judgment about how the study findings fit with other similar studies and scientific knowledge. It is important to emphasize that all studies have limitations that must be considered to interpret their results properly.12 Some limitations are inevitable given the limits of technology, resources, the ability and willingness of persons to participate in a study, participant burden, and ethical constraints. In evaluating epidemiologic evidence, the key questions, then, are the extent to which a study’s limitations compromise its findings and whether those findings nonetheless permit inferences about causation.

9. Richard Doll & A. Bradford Hill, Lung Cancer and Other Causes of Death in Relation to Smoking: A Second Report on the Mortality of British Doctors, 2 Brit. Med. J. 1071 (1956), https://doi.org/10.1136/bmj.2.5001.1071.

10. In In re Lipitor (Atorvastatin Calcium) Mktg., Sales Pracs. & Prod. Liab. Litig., 227 F. Supp. 3d 452 (D.S.C. 2017), aff’d sub nom. In re Lipitor (Atorvastatin Calcium) Mktg., Sales Pracs. & Prod. Liab. Litig. (No II) MDL 2502, 892 F.3d 624 (4th Cir. 2018), the court explained the relationship between an association and causation:

Establishing an association is the first threshold step in establishing general causation, and it is not surprising that courts may invoke this language to help differentiate the inquiries of general and specific causation. However, this fact does not change voluminous and well-established precedent that association, alone, is not sufficient to establish causation and does not change the simple fact that association is not causation. The parties have always agreed that establishing association is just the first step of a two-step process for establishing general causation.

Id. at 483 n.23. See also Harris v. CSX Transp., Inc., 753 S.E.2d 275, 282 (W. Va. 2013) (“It should be clearly understood that the term ‘association’ is a term of art in epidemiology . . . [and] is not the same as causation. An epidemiological association identified in a study may or may not be causal.”); Soldo v. Sandoz Pharms. Corp., 244 F. Supp. 2d 434, 461 (W.D. Pa. 2003) (discussing Hill criteria developed to assess whether an association is causal; see section titled “General Causation” below); Magistrini v. One Hour Martinizing Dry Cleaning, 180 F. Supp. 2d 584, 591 (D.N.J. 2002) (“[A]n association is not equivalent to causation.” (quoting the second edition of this reference guide)). Association is more fully discussed in the section titled “Interpreting the Results of an Epidemiologic Study” below.

Causation is used to describe the relation between two events when one event (the cause) is a necessary link in a chain of events that results in the effect. Of course, alternative causal chains may exist that do not include the agent but that result in the same effect. For general treatment of causation in tort law and an explanation that for factual causation to exist an agent must be a necessary link in a causal chain sufficient for the outcome, see Restatement (Third) of Torts: Liability for Physical and Emotional Harm § 26 (2010). Epidemiologic methods cannot deductively prove causation; indeed, no empirically based science can directly prove a causal relation. See, e.g., Kenneth J. Rothman & Sander Greenland, Causation and Causal Inference in Epidemiology, 95 Am. J. Pub. Health S144 (2005), https://doi.org/10.2105/AJPH.2004.059204. However, epidemiologic evidence can justify an inference, and sometimes a very strong inference, that an agent causes a disease. See section titled “Effect Modification” below.

11. See section titled “Sources of Error in Epidemiologic Studies” below.

Judges should also appreciate that, with some frequency, courts may confront cases in which there is little or no epidemiologic evidence available that addresses the agent–disease causation issue in dispute. Epidemiology studies can be expensive and time-consuming to conduct. Other constraints, such as the rarity of exposure to the suspected toxicant, may prevent development of meaningful epidemiologic evidence. Cases in which agent–disease causation is strongly disputed also tend to be ones in which there is not a robust body of epidemiology that provides persuasive evidence of the existence of general causation vel non.

Epidemiology studies risk in samples of populations. Employing the results of group-based studies of risk to make a causal determination for an individual plaintiff is beyond the limits of epidemiology. Nevertheless, a substantial body of legal precedent has developed that addresses the use of epidemiologic evidence to prove causation for an individual litigant through probabilistic means. The law developed in these cases is discussed later in this reference guide.13

The following sections of this reference guide address a number of critical issues that arise in considering the admissibility of, and weight to be accorded to, epidemiologic research findings. A glossary of oft-encountered epidemiologic terms is appended to the guide.14

Over the past several decades, courts frequently have confronted the use of epidemiologic studies as evidence and have recognized their utility in proving causation. As the Third Circuit observed in DeLuca v. Merrell Dow Pharmaceuticals: “The reliability of expert testimony founded on reasoning from epidemiologic data is generally a fit subject for judicial notice; epidemiology is a well-established branch of science and medicine, and epidemiologic evidence has been accepted in numerous cases.”15 Indeed, much more difficult problems arise for courts when there is a paucity of epidemiologic evidence.

12. See In re Phenylpropanolamine (PPA) Prods. Liab. Litig., 289 F. Supp. 2d 1230, 1240 (W.D. Wash. 2003) (quoting the second edition of this reference guide and criticizing defendant’s “ex post facto dissection” of a study, recognizing that “scientific studies almost invariably contain flaws.”); Henricksen v. ConocoPhillips Co., 605 F. Supp. 2d 1142, 1169 (E.D. Wash. 2009) (“[T]his court understands that in epidemiology hardly any study is ever conclusive, and the court does not suggest that an expert must back his or her opinion with published studies that unequivocally support his or her conclusions.”); Barrera v. Monsanto Co., 2019 WL 2331090, at *8 (Del. Super. Ct. May 31, 2019) (discussing flaws in cohort and case control studies relied on by expert witnesses); Joseph L. Gastwirth, Reference Guide on Survey Research, 36 Jurimetrics J. 181, 185 (1996), https://www.jstor.org/stable/29762414 (review essay) (“One can always point to a potential flaw in a statistical analysis.”).

13. See section titled “Specific Causation” below.

14. See Glossary of Terms below.

Three basic issues arise when epidemiology is used in legal disputes and the methodological soundness of a study and its implications for resolution of the question of causation must be assessed:

- Do the results of an epidemiologic study or studies reveal an association between an agent and disease?

- Could this association have resulted from limitations of the study (bias or sampling error), and if so, from which?

- Based on the analysis of limitations in the second question above, and on other evidence, how plausible is a causal interpretation of the association?

In this reference guide, the section titled “The Different Kinds of Epidemiologic Studies” explains the nature and relative strengths and weaknesses of various types of epidemiologic research designs; the “Interpreting the Results of an Epidemiologic Study” section addresses the meaning of their outcomes, including discussion of how to assess the validity of an epidemiologic study and of how to evaluate the existence and significance of several potential sources of error.16 The “General Causation” section discusses general causation, considering whether an agent is capable of causing disease. The “Methods for Synthesizing or Combining the Results of Multiple Studies” section deals with methods for combining the results of multiple epidemiologic studies and the difficulties entailed in extracting a single global measure of risk from multiple studies. The “Specific Causation” section addresses the matter of whether a specific agent caused the disease in a given plaintiff, including the recurrent issue of whether and how population-based epidemiologic evidence can be used to address specific causation vel non.

15. 911 F.2d 941, 954 (3d Cir. 1990); see also Norris v. Baxter Healthcare Corp., 397 F.3d 878, 881, 882 (10th Cir. 2005) (“We agree with the district court that epidemiology is the best evidence of general causation in a toxic tort case.”); Riddell-Hare v. BP Expl. & Prod., Inc., No. CV 17-4177, 2022 WL 3445718, at *4 (E.D. La. Aug. 17, 2022) (observing that the “Fifth Circuit has held that epidemiology provides the best evidence of causation in a toxic tort case”); Brasher v. Sandoz Pharms. Corp., 160 F. Supp. 2d 1291, 1296 (N.D. Ala. 2001) (“Unquestionably, epidemiologic studies provide the best proof of the general association of a particular substance with particular effects, but it is not the only scientific basis on which those effects can be predicted.”); In re Accutane Litig., 191 A.3d 560, 576 (N.J. 2018) (explaining expert’s testimony that epidemiologic studies provided the best available evidence on the disputed causal issue).

16. For a more in-depth discussion of the statistical basis of epidemiology, see David H. Kaye & Hal S. Stern, Reference Guide on Statistics and Research Methods, in this manual, and two case studies: Joseph Sanders, The Bendectin Litigation: A Case Study in the Life Cycle of Mass Torts, 43 Hastings L.J. 301 (1992), https://perma.cc/C2LN-SB53; Devra L. Davis et al., Assessing the Power and Quality of Epidemiologic Studies of Asbestos-Exposed Populations, 1 Toxicological & Indus. Health 93 (1985), https://doi.org/10.1177/074823378500100407; see also section titled “References” below.

The Different Kinds of Epidemiologic Studies

Experimental and Observational Studies of Suspected Toxic Agents

To determine whether an agent affects the risk of developing a certain disease or an adverse health outcome, we might ideally want to conduct an experimental study in which the subjects would be randomly assigned to one of two groups: one group exposed to the agent of interest and the other not exposed. After a period of time, the study participants in both groups would be evaluated for the development of the disease. This type of study, called a randomized trial, randomized controlled trial (RCT), clinical trial, or true experiment, is considered the gold standard for estimating the causal effect of an agent on a health outcome or adverse side effect. Such a study design is often used to evaluate new drugs or medical treatments and is the best way to determine whether the observed difference in outcomes between the two groups is caused by exposure to the drug or medical treatment.

Randomization minimizes the likelihood that there are differences in relevant characteristics between those exposed to the agent and those not exposed. Researchers conducting clinical trials generally attempt to use study designs that are placebo-controlled, which means that the group not receiving the active agent or treatment is given an inactive ingredient that appears similar to the active agent under study. Where possible, they are also double-blinded, which means that neither the participants nor those conducting the study know which group is receiving the agent or treatment and which group is receiving the placebo. However, ethical and practical constraints limit the use of such experimental methodologies to studies that aim to assess the effects of agents or interventions that are potentially beneficial to human beings or the withdrawal of an agent thought to be harmful, such as studies of smoking cessation.17
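The logic of random assignment can be illustrated with a short Python sketch. It shuffles a list of hypothetical participants, splits it in half, and compares the average age of the two groups. With enough participants, chance alone tends to make the groups similar in age, and in other characteristics whether measured or not. The participant data, the `randomize` helper, and the fixed seeds are assumptions for illustration only. Real trials use formal allocation procedures, and this sketch does not model placebo control or blinding.

```python
import random
import statistics


def randomize(participant_ids, seed=None):
    """Randomly split participants into two equal-sized (or nearly equal) groups."""
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)  # random order, so group membership is left to chance
    half = len(ids) // 2
    return {"treatment": ids[:half], "placebo": ids[half:]}


# Hypothetical participants, each with an age (illustrative data only).
age_rng = random.Random(0)
ages = {pid: age_rng.randint(20, 80) for pid in range(1000)}

groups = randomize(ages, seed=1)
for name, members in groups.items():
    mean_age = statistics.mean(ages[pid] for pid in members)
    print(f"{name}: n = {len(members)}, mean age = {mean_age:.1f}")
```

Because assignment depends only on the shuffle, any remaining difference in average age between the groups reflects chance rather than a systematic difference between people who were and were not exposed.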

17. Although clinical trials cannot intentionally expose subjects to suspected toxicants, those studies can provide evidence that a new drug or other beneficial intervention also has adverse effects. See In re Lipitor (Atorvastatin Calcium) Mktg., Sales Pracs. & Prods. Liab. Litig., 227 F. Supp. 3d 452, 481 & n.19 (D.S.C. 2017) (explaining clinical trial that found an association between Lipitor and diabetes), aff’d, 892 F.3d 624 (4th Cir. 2018); In re Bextra & Celebrex Mktg., Sales Pracs. & Prod. Liab. Litig., 524 F. Supp. 2d 1166, 1181 (N.D. Cal. 2007) (relying on a clinical study of Celebrex that revealed increased cardiovascular risk to conclude that the plaintiff’s experts’ testimony on causation was admissible).

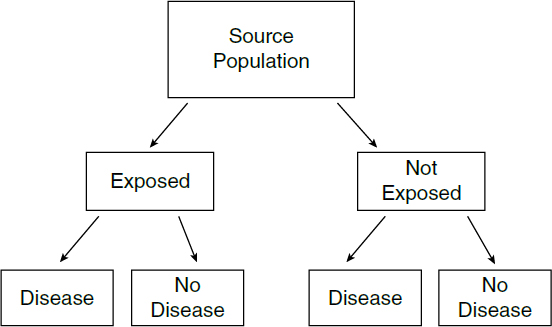

When an agent’s effects are suspected to be harmful, researchers cannot knowingly expose people to the agent.18 Instead, epidemiologic studies typically “observe” a group of individuals who have been exposed to an agent of interest, such as cigarette smoke or an industrial chemical, and compare them with another group of individuals who have not been exposed.19 Thus, the investigator identifies a group of subjects who have been exposed20 and compares their rate of disease or death with that of an unexposed group or compares health outcomes among groups of individuals with different levels of exposure.

In contrast to clinical trials, in which other potential risk factors can be controlled by randomizing exposure, observational epidemiologic studies entail the possibility that some other characteristic (a confounder)21 may be nonrandomly distributed between the exposed and unexposed groups, which could distort a study’s results.22
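A small numerical sketch shows how a confounder that is unevenly distributed between the exposed and unexposed groups can create an association where none exists. The counts below are hypothetical and not from any study. Age is the assumed confounder: older people are both more likely to be exposed and more likely to develop the disease. Within each age group, exposed and unexposed people have the same risk, yet pooling the groups makes the exposure look harmful. Comparing risks separately within strata, as done here, is only one of several ways to address confounding.

```python
def risk_ratio(cases_exposed, n_exposed, cases_unexposed, n_unexposed):
    """Risk of disease among the exposed divided by risk among the unexposed."""
    return (cases_exposed / n_exposed) / (cases_unexposed / n_unexposed)


# Hypothetical counts: (cases, people) in each age stratum.
strata = {
    "older": {"exposed": (80, 800), "unexposed": (20, 200)},
    "younger": {"exposed": (4, 200), "unexposed": (16, 800)},
}


def pooled(group):
    """Total (cases, people) for a group after ignoring age entirely."""
    cases = sum(s[group][0] for s in strata.values())
    people = sum(s[group][1] for s in strata.values())
    return cases, people


# Within each age group, exposure makes no difference to risk.
stratum_rr = {}
for name, counts in strata.items():
    stratum_rr[name] = risk_ratio(*counts["exposed"], *counts["unexposed"])
    print(f"{name}: risk ratio = {stratum_rr[name]:.2f}")

# Ignoring age (pooling the strata) produces a spurious association.
crude_rr = risk_ratio(*pooled("exposed"), *pooled("unexposed"))
print(f"crude (age ignored): risk ratio = {crude_rr:.2f}")
```

The sketch prints a risk ratio of 1.00 in each age group but 2.33 when age is ignored. The apparent excess risk arises entirely because exposure is concentrated among older people.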

Epidemiologic investigators address the possible role of these other characteristics—such as sex, age, social class, diet, exercise, exposure to other environmental agents, and genetic background—by measuring them when possible and by considering them in the design of the study and in the analysis and interpretation of the study results (see section titled “Sources of Error in Epidemiologic Studies” below).23

18. See, e.g., Gordon H. Guyatt, Using Randomized Trials in Pharmacoepidemiology, in Drug Epidemiology and Post-Marketing Surveillance 59 (Brian L. Strom & Giampaolo Velo eds., 1992), https://doi.org/10.1007/978-1-4899-2587-9_8; Comm’n on Use of Third Party Toxicity Rsch. with Hum. Rsch. Participants, Nat’l Rsch. Council, Intentional Human Dosing Studies for EPA Regulatory Purposes: Scientific and Ethical Issues 9 (2004), https://doi.org/10.17226/10927 (“No study is ethically justifiable if it is expected to cause lasting harm to study participants.”); 45 C.F.R. § 46.111 (2022) (requiring that in federally funded research on human subjects, risks to subjects be minimized and be reasonable in relation to anticipated benefits to subjects and the importance of knowledge gained); see also McClellan v. I-Flow Corp., 710 F. Supp. 2d 1092, 1109 (D. Or. 2010) (“ethical considerations preclude randomized, controlled epidemiological studies of continuous infusion given the [risk of harm]”). Experimental studies can be used where the agent under investigation is believed to be beneficial, as is the case in the development and testing of new pharmaceutical drugs. See, e.g., McDarby v. Merck & Co., 949 A.2d 223, 270 (N.J. Super. Ct. App. Div. 2008) (involving an expert witness relying on a clinical trial of a new drug to find the adjusted risk for the plaintiff).

19. Classifying these studies as observational in contrast to randomized trials can be misleading to those who are unfamiliar with the area, because subjects in a randomized trial are observed as well. Nevertheless, the term observational studies is widely used to distinguish them from experimental studies.

20. The subjects may have voluntarily exposed themselves to the agent of interest, as is the case, for example, for those who smoke cigarettes, or subjects may have been exposed to an agent involuntarily or even without their knowledge, such as in the case of employees who are exposed to chemical fumes at work.

21. Confounding is a type of bias, discussed in the section titled “Biases” below.

22. Both experimental and observational studies are subject to random error. See section titled “Sampling Error” below.

Types of Observational Study Design

Several different types of observational epidemiologic studies can be conducted. Study designs may be selected based on their suitability to investigate the question of interest, feasibility, timing and ethical constraints, resource limitations, or other considerations.

Most observational studies collect data about both exposure and health outcome in every individual in the study. While many study designs exist, the three main types of observational studies are cohort, case-control, and cross-sectional studies. These studies collect data about a sample of individuals selected from a “source” population, from which the researcher seeks to make inferences about the population.24 A final type of observational study is an ecological study, which uses aggregate data collected about groups of people rather than individuals.25

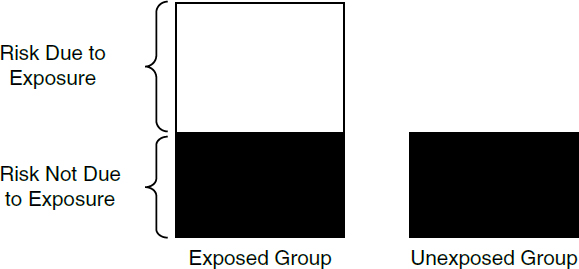

The difference between cohort and case-control studies is that cohort studies measure and compare health outcomes (such as the presence or absence of a particular disease)26 in the exposed and unexposed groups (or between individuals who experienced different levels of exposure), while case-control studies measure and compare the frequency (or level) of exposure in the group with the disease (the cases) and the group without the disease (the controls). In a cohort study, the subjects’ exposure is determined before their health status (see Figure 1). The risk of disease among the exposed can then be compared with the risk of disease among the unexposed. In a case-control study, the health status is determined first. The odds that someone with the disease was exposed to a suspected agent can then be compared with the odds that someone without the disease was similarly exposed. As with most epidemiologic studies, these study designs aim to determine whether there is an association between exposure to an agent and a health outcome and, if so, the strength (magnitude) of that association.
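The two comparisons just described can be sketched in Python using the conventional two-by-two layout: exposure in the rows, and disease status (or case/control status) in the columns. The cell labels a through d, the function names, and all counts are hypothetical and chosen for illustration. The cohort example compares the risk of disease in the exposed and unexposed. The case-control example compares the odds of exposure among cases and controls.

```python
def risk_ratio(a, b, c, d):
    """Cohort study: risk of disease in the exposed / risk in the unexposed.

    a = exposed, developed disease      b = exposed, no disease
    c = unexposed, developed disease    d = unexposed, no disease
    """
    risk_exposed = a / (a + b)
    risk_unexposed = c / (c + d)
    return risk_exposed / risk_unexposed


def odds_ratio(a, b, c, d):
    """Case-control study: odds of exposure among cases / odds among controls.

    a = exposed cases      b = exposed controls
    c = unexposed cases    d = unexposed controls

    (a / c) / (b / d) simplifies to the cross-product (a * d) / (b * c).
    """
    return (a * d) / (b * c)


# Hypothetical cohort: 1,000 exposed (30 develop disease), 2,000 unexposed (20 do).
print("cohort risk ratio:", round(risk_ratio(30, 970, 20, 1980), 2))

# Hypothetical case-control study: 100 cases (50 exposed), 200 controls (50 exposed).
print("case-control odds ratio:", round(odds_ratio(50, 50, 50, 150), 2))
```

In both hypothetical studies the measure equals 3.0. The cohort result means the exposed had three times the risk of disease. The case-control result means cases had three times the odds of having been exposed.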

23. See David A. Freedman, Editorial: Oasis or Mirage?, 21 Chance no. 1, 59–61 (2008), https://doi.org/10.1080/09332480.2008.10722888.

24. Sometimes, for practical reasons, the source population from which the researcher draws study subjects is not exactly the same as the population about which the researcher wants to make inferences, in which case the term target population is used to distinguish the population about which inferences are made from the source population.

25. See section titled “Ecological Studies” below. For thumbnail sketches on all types of epidemiologic study designs, see Brian L. Strom, Basic Principles of Clinical Epidemiology Relevant to Pharmacoepidemiologic Studies, in Pharmacoepidemiology 44, 50–55 (Brian L. Strom et al. eds., 6th ed. 2020), https://doi.org/10.1002/9781119413431.ch3.

26. Although epidemiologists refer generally to “health outcomes,” some disease is usually the health outcome of interest in the types of court cases in which epidemiologic research plays an important role. See supra discussion, note 1.

Cohort Studies

In cohort studies, researchers define a study population without regard to the participants’ disease status. The cohort may be defined in the present and followed forward into the future (prospectively), in which case they are known as prospective, longitudinal, or follow-up studies. Alternatively, a cohort study may be constructed retrospectively as of some time period in the past and followed from that historical time toward the present. In cohort studies, there is usually a group that is unexposed and another that is exposed, or several groups with different levels of exposure (including, in appropriate cases, a group with no exposure). Exposure may also be measured continuously (e.g., blood lead levels). In a prospective study, individuals are enrolled and followed over time, during which exposure and subsequent health outcomes are measured, while in a retrospective study, the researcher will obtain data on past exposure from available records, samples, questionnaires, or other evidence.27 So, for a study aiming to determine the association between an exposure and the occurrence of a disease, a researcher would compare the proportion of unexposed individuals who have the disease with the proportion of exposed individuals who have the

27. Sometimes in retrospective cohort studies, the researcher gathers historical data about exposure and disease outcome of a cohort. See Harold A. Kahn, An Introduction to Epidemiologic Methods 39–41 (1983); Mark A. Klebanoff & Jonathan M. Snowden, Historical (Retrospective) Cohort Studies and Other Epidemiologic Study Designs in Perinatal Research, 219 Am. J. Obstetrics & Gynecology 447 (2018), https://doi.org/10.1016/j.ajog.2018.08.044. Irving Selikoff, in his seminal study of asbestotic disease in insulation workers, included several hundred workers who had died before he began the study. Selikoff was able to obtain information about exposure from union records and information about disease from hospital and autopsy records. Irving J. Selikoff et al., The Occurrence of Asbestosis Among Insulation Workers in the United States, 132 Ann. N.Y. Acad. Sci. 139, 143 (1965), https://doi.org/10.1111/j.1749-6632.1965.tb41097.x.

disease.28 If the exposure causes the disease, the researcher would expect a greater proportion of the exposed individuals to develop the disease than the unexposed individuals.29 For a health outcome that is measured continuously, such as intelligence quotient (IQ) or blood pressure, the researcher could compare the average of these outcomes among the exposed and unexposed. If exposure is measured as a continuous rather than a dichotomous variable—for example, if all study subjects were exposed to some radiation from a nuclear accident but the degree of exposure was lower for those farther away from the accident—the researcher could examine the association between the extent of exposure and the health outcome.
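The comparison of proportions described above can be sketched in a few lines of Python. This is a minimal illustration, assuming invented counts (1,000 exposed people with 40 cases; 2,000 unexposed people with 40 cases) and invented IQ scores that come from no actual study; the class and function names are hypothetical. The ratio it computes corresponds to the relative risk discussed later in this chapter.

```python
from dataclasses import dataclass


@dataclass
class CohortGroup:
    """One exposure group in a hypothetical cohort study."""
    n: int       # number of individuals followed
    cases: int   # number who developed the disease during follow-up

    @property
    def risk(self) -> float:
        # Proportion of the group that developed the disease
        return self.cases / self.n


def compare_groups(exposed: CohortGroup, unexposed: CohortGroup) -> dict:
    """Compare the proportion with disease in exposed vs. unexposed groups."""
    return {
        "risk_exposed": exposed.risk,
        "risk_unexposed": unexposed.risk,
        # A ratio above 1 means a greater proportion of the exposed became ill
        "risk_ratio": exposed.risk / unexposed.risk,
        # Absolute difference between the two proportions
        "risk_difference": exposed.risk - unexposed.risk,
    }


def mean_difference(exposed_values: list[float], unexposed_values: list[float]) -> float:
    """For a continuous outcome such as IQ, compare the group averages."""
    mean_exposed = sum(exposed_values) / len(exposed_values)
    mean_unexposed = sum(unexposed_values) / len(unexposed_values)
    return mean_exposed - mean_unexposed


# Hypothetical counts, for illustration only
exposed = CohortGroup(n=1000, cases=40)
unexposed = CohortGroup(n=2000, cases=40)
print(compare_groups(exposed, unexposed))

# Hypothetical IQ scores for a continuous outcome
print(mean_difference([95, 98, 101], [100, 102, 104]))
```

With these numbers the exposed group has twice the proportion of cases (4% versus 2%). Whether such a difference reflects causation depends on the sources of error discussed in the rest of this chapter.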

One advantage of the cohort study design is that the temporal relationship between exposure and disease can often be established more readily than in other study designs. By tracking people who initially have not been diagnosed with the disease, the researcher can determine the time of disease onset (or diagnosis) and its relation to exposure. This temporal relationship is critical to the question of causation, because exposure must precede disease onset for exposure to have caused the disease.

As an example, in 1950 a cohort study was begun to determine whether uranium miners exposed to radon were at increased risk of death due to lung cancer as compared with nonminers. The study group (also referred to as the exposed cohort) consisted of 3,400 underground miners. The control group or unexposed cohort (which need not be the same size as the exposed cohort) comprised nonminers from the same geographic area. Members of the exposed cohort were examined every three years, and the degree of this cohort’s exposure to radon was measured from samples taken in the mines. Ongoing testing for radioactivity and periodic medical monitoring of the miners’ lungs allowed the researchers to examine whether disease followed prior occupational exposure to radiation and to characterize the relationship between the degree of exposure and disease. Exposure to radiation was associated with the development of lung cancer in uranium miners.30

The cohort design often is used in occupational studies such as the one just discussed. But because the design is not experimental, the investigator has no control over what other exposures a worker in the study may have had. Hence, an increased risk of disease among the exposed group may be caused by agents other than the exposure of interest. A researcher planning a study must attempt to

28. See Table 1, infra, in the section titled “Relative Risk.”

29. Researchers often examine the rate of disease or death in the exposed and control groups. A rate takes time into account: it reflects the number of people who develop disease (or die) within a specified period. All smokers and nonsmokers will, if followed for 100 years, die, so the proportion who eventually die does not distinguish the two groups. Smokers, however, die at a greater rate than nonsmokers in the earlier years.

30. This example is based on a study description in Abraham M. Lilienfeld & David E. Lilienfeld, Foundations of Epidemiology 237–39 (2d ed. 1980). The original study is Joseph K. Wagoner et al., Radiation as the Cause of Lung Cancer Among Uranium Miners, 273 NEJM 181 (1965), https://doi.org/10.1056/NEJM196507222730402.

identify factors (called confounders) other than the exposure that may be related to exposure and may be responsible for the increased risk of disease. We discuss this problem (which is not limited to cohort studies) below in the “Confounding Bias” section. If data are gathered about potential confounders, the researcher generally uses statistical methods31 to estimate an association that is independent of these factors. Evaluating whether the association is causal involves additional analysis, as discussed below in the “General Causation” section.
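One common statistical approach is stratification: compare exposed and unexposed subjects separately within each level of the potential confounder, then combine the stratum results. The sketch below uses the Mantel-Haenszel summary risk ratio, a standard pooling formula chosen here for illustration; regression models are another common route. The data are invented and constructed so that the exposure has no effect within either stratum of a hypothetical confounder (labeled "smokers" and "nonsmokers" for concreteness), yet the crude comparison suggests nearly a threefold risk.

```python
from dataclasses import dataclass


@dataclass
class Stratum:
    """Counts within one level of a potential confounder (hypothetical data)."""
    exposed_cases: int
    exposed_total: int
    unexposed_cases: int
    unexposed_total: int


def crude_risk_ratio(strata: list[Stratum]) -> float:
    """Risk ratio that ignores the confounder by pooling all strata."""
    a = sum(s.exposed_cases for s in strata)
    n1 = sum(s.exposed_total for s in strata)
    c = sum(s.unexposed_cases for s in strata)
    n0 = sum(s.unexposed_total for s in strata)
    return (a / n1) / (c / n0)


def mantel_haenszel_risk_ratio(strata: list[Stratum]) -> float:
    """Mantel-Haenszel summary risk ratio, adjusted for the stratifying factor."""
    numerator = 0.0
    denominator = 0.0
    for s in strata:
        total = s.exposed_total + s.unexposed_total
        numerator += s.exposed_cases * s.unexposed_total / total
        denominator += s.unexposed_cases * s.exposed_total / total
    return numerator / denominator


# Hypothetical: smokers are more often exposed AND have a higher baseline risk
smokers = Stratum(exposed_cases=80, exposed_total=800, unexposed_cases=20, unexposed_total=200)
nonsmokers = Stratum(exposed_cases=2, exposed_total=200, unexposed_cases=8, unexposed_total=800)

print(round(crude_risk_ratio([smokers, nonsmokers]), 2))            # confounded estimate
print(round(mantel_haenszel_risk_ratio([smokers, nonsmokers]), 2))  # adjusted estimate
```

With these numbers the crude ratio is about 2.9 while the adjusted ratio is 1.0. Adjustment of this kind works only for confounders on which data were actually gathered.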

Case-Control Studies

In case-control studies, the researcher begins with a group of individuals who have a disease (cases) and then selects a similar group of individuals who do not have the disease (controls). Controls should come from the same source population as the cases. The researcher then compares the extent of exposure in the two groups. For example, researchers might use employment records or questionnaire responses to determine whether and to what extent each study subject was exposed to the agent of interest, or researchers might measure biomarkers in the cases and controls to infer their exposure history. If an exposure is positively associated with the disease, the odds of exposure would be higher among the cases than among the controls (see Figure 2). Because researchers employing this research design often (though not always) gather historical information about exposure to an agent in the case and control groups, case-control studies have sometimes, but less precisely, been referred to as “retrospective studies.”32
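The comparison of exposure odds can be sketched as follows, assuming invented counts: 200 cases, of whom 60 were exposed, and 400 controls, of whom 60 were exposed. The ratio of the two odds is the odds ratio, the usual summary measure for a case-control study; the function names are hypothetical.

```python
def exposure_odds(exposed: int, unexposed: int) -> float:
    """Odds of exposure: number exposed divided by number unexposed."""
    return exposed / unexposed


def odds_ratio(case_exp: int, case_unexp: int, ctrl_exp: int, ctrl_unexp: int) -> float:
    """Odds of exposure among cases divided by odds of exposure among controls."""
    return exposure_odds(case_exp, case_unexp) / exposure_odds(ctrl_exp, ctrl_unexp)


# Hypothetical data: 200 cases (60 exposed), 400 controls (60 exposed)
cases_odds = exposure_odds(60, 140)      # about 0.43
controls_odds = exposure_odds(60, 340)   # about 0.18
print(round(odds_ratio(60, 140, 60, 340), 2))
```

An odds ratio above 1, as here (about 2.4), is the pattern the text describes for an exposure associated with the disease.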

For example, in the late 1960s, doctors in Boston were confronted with an unusual number of young female patients with vaginal adenocarcinoma. Those

31. See generally Daniel L. Rubinfeld & David Card, Reference Guide on Multiple Regression and Advanced Statistical Models, in this manual; Kaye & Stern, supra note 16, “Statistical Models” (discussing difficulties of multiple regression).

32. As described, the design of a case-control study is inherently retrospective rather than prospective. But other types of epidemiologic studies, including some cohort studies, may also be retrospective. See Strom, supra note 25 and accompanying text.

patients became the cases in a case-control study (because they had the disease in question). Controls who did not have the disease were selected based on their being born in the same hospitals and at the same time as the cases. The cases and controls were compared for exposure to agents that might be responsible, and researchers found maternal ingestion of diethylstilbestrol (DES), a drug prescribed to prevent miscarriage and premature delivery, in all but one of the cases but none of the controls.33

An advantage of the case-control study is that it usually can be completed in less time and with less expense than a cohort study. Case-control studies are also particularly useful in the study of rare diseases, because if a cohort study were conducted, a large group of individuals would have to be studied in order to observe the development of a sufficient number of cases for statistical analysis.34 A number of potential limitations of case-control studies are discussed in the section below titled “Biases.”
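Footnote 34 gives sample sizes for detecting a doubling of a 1-in-100 risk. The cohort figure can be approximately reproduced with a standard normal-approximation formula for comparing two proportions, assuming a two-sided significance level of 0.05 and 90% power. Those two settings are assumptions made for this sketch, since the footnote does not state them, and the cited source may have used a different method. The case-control figure also depends on how common exposure is among controls, which the footnote does not give, so it is not modeled here.

```python
import math
from statistics import NormalDist


def cohort_sample_size(p_unexposed: float, relative_risk: float,
                       alpha: float = 0.05, power: float = 0.90) -> int:
    """Per-group size to detect a given relative risk (normal approximation).

    alpha is two-sided; the default alpha and power are assumptions.
    """
    p0 = p_unexposed
    p1 = p_unexposed * relative_risk           # risk in the exposed group
    p_bar = (p0 + p1) / 2                      # average risk, used under the null
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p0 * (1 - p0) + p1 * (1 - p1))) ** 2
    return math.ceil(numerator / (p1 - p0) ** 2)


# Doubling of a 1-in-100 risk, as in footnote 34
print(cohort_sample_size(p_unexposed=0.01, relative_risk=2.0))
```

Under these assumptions the sketch returns a little over 3,100 per group, in line with the footnote. Accepting lower power would reduce the required size, at the cost of a greater chance of missing a real doubling.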

Cross-Sectional Studies

A third type of observational study is a cross-sectional study. In this type of study, for each study participant the presence of both the exposure of interest and the health outcome of interest is assessed at a single point in time. Thus, cross-sectional studies reveal the prevalence (i.e., the presence at that particular time) of both exposure and disease but do not provide the disease incidence (i.e., the development of disease over time).35 A researcher interested in the association between a health outcome (e.g., IQ) and an exposure that is relatively consistent over time (e.g., blood lead levels from living in contaminated housing) might use a cross-sectional study design. However, because a cross-sectional study determines both exposure and disease in an individual at the same point in time, it may be impossible to establish the temporal relation between exposure and disease—that is, whether the exposure preceded the disease, which is necessary for drawing any causal inference. Indeed, cross-sectional studies may be

33. See Arthur L. Herbst et al., Adenocarcinoma of the Vagina: Association of Maternal Stilbestrol Therapy with Tumor Appearance in Young Women, 284 NEJM 878 (1971), https://doi.org/10.1056/NEJM197104222841604.

34. For example, to detect a statistically significant doubling of disease caused by exposure to an agent where the incidence of disease is 1 in 100 in the unexposed population would require sample sizes of 3,100 for the exposed and nonexposed groups for a cohort study, but only 177 for the case and control groups in a case-control study (see section titled “Sampling Error” below for a discussion of statistical significance). Harold A. Kahn & Christopher T. Sempos, Statistical Methods in Epidemiology 66 (1989). See also Kaye & Stern, supra note 16, “What Is the Standard Error?,” “What Is the Confidence Interval?,” and “Tests or Interval Estimates?”

35. See In re Deepwater Horizon Belo Cases, No. 3:19CV963-MCR-HTC, 2022 WL 17721595, at *10 (N.D. Fla. Dec. 15, 2022) (criticizing failure of expert to identify a study on which she relied as a cross-sectional study).

affected by reverse causation, in which the health outcome under study actually causes a change in exposure.36 Though more limited than cohort or case-control studies, cross-sectional studies can provide valuable leads to further directions for research, particularly when reverse causation can be ruled out based on other knowledge.37
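A cross-sectional comparison can be sketched as follows, using invented survey counts (300 exposed people, 30 with the outcome; 700 unexposed people, 35 with the outcome). Because each person is assessed once, the quantities computed are prevalences, and their comparison (a prevalence ratio or prevalence odds ratio) cannot show whether exposure or outcome came first. The function names are hypothetical.

```python
def prevalence(with_outcome: int, surveyed: int) -> float:
    """Proportion who have the outcome at the time of the survey."""
    return with_outcome / surveyed


def cross_sectional_summary(exp_with: int, exp_total: int,
                            unexp_with: int, unexp_total: int) -> dict:
    """Prevalence-based comparisons from a single-time-point survey."""
    p_exp = prevalence(exp_with, exp_total)
    p_unexp = prevalence(unexp_with, unexp_total)
    return {
        "prevalence_ratio": p_exp / p_unexp,
        # Odds of having the outcome among exposed vs. unexposed
        "prevalence_odds_ratio": (p_exp / (1 - p_exp)) / (p_unexp / (1 - p_unexp)),
    }


# Hypothetical survey data
print(cross_sectional_summary(30, 300, 35, 700))
# Neither number reveals whether exposure preceded the outcome; estimating
# incidence would require following disease-free people over time.
```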

Ecological Studies

Up to now, we have discussed studies in which data on both exposure and health outcome are obtained for each individual included in the study, although individual data are sometimes augmented by group-level information, such as data from census tracts. Other studies, called ecological studies, collect only aggregate data on groups as a whole. In ecological studies, rather than gathering information about individuals, researchers obtain and compare overall rates of disease or death (or other summary measures for continuous health outcomes) for different exposure groups.38

One type of ecological study compares exposure groups across geographic locations. For example, epidemiologists might compare disease rates among areas with more or less air pollution.39 When making such comparisons, epidemiologists may overlay data on demographic factors obtained from sources such as the American Community Survey. Epidemiologists may look at geospatial or temporal trends (which may identify so-called “clusters” of disease) to form hypotheses and research questions.40

Ecological studies may be useful to identify associations for further exploration, but they can rarely provide causal answers. We illustrate the difficulty of

36. For example, a cross-sectional study might find that disinfectant use is associated with greater odds of asthma, but could not distinguish whether disinfectants cause people to have asthma or whether having asthma causes people to use disinfectants.

37. For more information and references about cross-sectional studies, see David D. Celentano & Moyses Szklo, Gordis Epidemiology 154–57 (6th ed. 2018).

38. Studies may be conducted in which all members of a group or community are treated as exposed to an agent of interest (e.g., a contaminated water system) and disease status is determined individually. These studies should be distinguished from ecological studies.

39. See Jonah Lipsitt et al., Spatial Analysis of COVID-19 and Traffic-Related Air Pollution in Los Angeles, 153 Env’t Int’l 106531 (2021), https://doi.org/10.1016/j.envint.2021.106531.

40. For example, the observed emergence of a cluster of adverse events associated with the use of heparin, a longtime and widely prescribed anticoagulant, led to suspicions that some specific lot of heparin was responsible. The observed pattern led the Centers for Disease Control and Prevention to conduct a case-control study, which concluded that contaminated heparin manufactured by Baxter was responsible for the outbreak of adverse events. See David B. Blossom et al., Outbreak of Adverse Reactions Associated with Contaminated Heparin, 359 NEJM 2674 (2008), https://doi.org/10.1056/NEJMoa0806450; In re Heparin Prods. Liab. Litig., 803 F. Supp. 2d 712 (N.D. Ohio 2011).

using ecological studies to determine causality with the following hypothetical ecological study.

Suppose that researchers, interested in determining whether a high dietary-fat intake is associated with breast cancer, compared different countries in terms of their average fat intakes and their average rates of breast cancer. If countries with high average fat intake also tend to have high rates of breast cancer, the finding would suggest an association between dietary fat and breast cancer. However, such a finding would be far from conclusive, because it lacks particularized information about an individual’s exposure and disease status (i.e., whether an individual with high fat intake is more likely to have breast cancer).41 In addition to the lack of information about an individual’s intake of fat, the researcher does not know about the individual’s exposures to other agents (or other factors, such as a woman’s age when she first gave birth) that may also be responsible for the increased risk of breast cancer. This lack of information about each individual’s exposure to an agent and disease status can lead to an erroneous inference about the relationship between fat intake and breast cancer, a problem known as the ecological fallacy. The fallacy is assuming that, on average, the individuals in the study who have suffered from breast cancer consumed more dietary fat than those who have not suffered from the disease. This assumption may not be true. Nevertheless, the study is useful in that it identifies an area for further research: the fat intake of individuals who have breast cancer as compared with the fat intake of those who do not. Researchers who identify a difference in disease or death in an ecological study may follow up with a study of individuals.42
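The hypothetical below, with invented counts for three countries, shows how the ecological fallacy can arise. Within every country, people with high fat intake have exactly the same disease risk as people with low intake, yet countries where more people eat high-fat diets have higher overall rates (because of some other, unmodeled difference between the countries). An analysis that sees only the country-level aggregates finds a near-perfect association. All names and numbers are illustrative assumptions.

```python
import math

# Hypothetical counts per country:
# (high-fat n, high-fat cases, low-fat n, low-fat cases)
COUNTRIES = {
    "A": (200, 2, 800, 8),
    "B": (500, 10, 500, 10),
    "C": (800, 24, 200, 6),
}


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def country_level(countries: dict) -> tuple[list[float], list[float]]:
    """Aggregate data only: share eating high fat and overall disease rate."""
    share_high, rate = [], []
    for hi_n, hi_cases, lo_n, lo_cases in countries.values():
        total = hi_n + lo_n
        share_high.append(hi_n / total)
        rate.append((hi_cases + lo_cases) / total)
    return share_high, rate


def within_country_risk_ratios(countries: dict) -> dict:
    """Individual-level comparison (high vs. low fat) inside each country."""
    return {name: (hi_cases / hi_n) / (lo_cases / lo_n)
            for name, (hi_n, hi_cases, lo_n, lo_cases) in countries.items()}


share, rates = country_level(COUNTRIES)
print(round(pearson(share, rates), 3))        # strong country-level association
print(within_country_risk_ratios(COUNTRIES))  # no association within any country
```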

In another type of ecological study, epidemiologists may compare disease rates over time and focus on disease rates before and after a point in time when some event of interest took place. For example, after the once widely prescribed drug Bendectin was removed from the market, the rate of limb-reduction birth defects did not change, which suggested that Bendectin did not cause birth

41. For a discussion of the data on this question and what they might mean, see Kaye & Stern, supra note 16.

42. Some courts have admitted expert testimony that relied on the results of ecological studies. In Cook v. Rockwell Int’l Corp., 580 F. Supp. 2d 1071, 1095–96 (D. Colo. 2006), the plaintiffs’ expert conducted an ecological study in which he compared the incidence of two cancers among those living in a specified area adjacent to the Rocky Flats Nuclear Weapons Plant with other areas more distant. (The likely explanation for relying on this type of study is the time and expense of a study that gathered information about each individual in the affected area.) The court recognized that ecological studies are less probative than studies in which data are based on individuals but nevertheless held that this limitation went to the weight of the study. Plaintiffs’ expert was permitted to testify to causation, relying on the ecological study he performed. See also Mead v. Sec’y of Health & Hum. Servs., 2010 WL 892248, at *38 (Fed. Cl. Mar. 12, 2010) (“Because these studies examine trends in the aggregate rather than examining disease onset in exposed individuals, ecological studies are afforded less evidentiary weight in the medical community than controlled studies are.”).

defects.43 By contrast, researchers’ discovery that the timing of a dramatic increase in limb-reduction birth defects followed widespread use of the drug thalidomide supported the conclusion that thalidomide caused those defects.44

However, other than with such powerful agents as thalidomide, which increased the incidence of limb-reduction birth defects by several orders of magnitude, these secular-trend studies (also known as timeline studies) are less reliable and less able to detect modest causal effects than are the observational studies described above.45 Other factors that affect the measurement or existence of the disease, such as improved diagnostic techniques and changes in lifestyle or age demographics, may change over time. If those factors can be identified and measured, it may be possible to control for them with statistical methods. Of course, unknown factors cannot be controlled for in these or any other kinds of epidemiologic studies.
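A secular-trend comparison reduces to comparing rates before and after the event of interest. The sketch below uses invented national counts (not Bendectin or thalidomide data) and a hypothetical helper name. It also shows how a change in case ascertainment alone, here an assumed 20% increase in detected cases, would shift the comparison with no change in any drug's effect.

```python
def rate_per(cases: int, births: int, per: int = 10_000) -> float:
    """Cases per `per` births."""
    return cases * per / births


# Hypothetical counts before and after a drug's withdrawal
before = rate_per(cases=1_000, births=2_000_000)
after = rate_per(cases=900, births=1_800_000)
print(before, after, after / before)

# Hypothetical: same true rate, but 20% more cases detected after withdrawal
after_better_detection = rate_per(cases=1_080, births=1_800_000)
print(after_better_detection / before)
# The time comparison cannot by itself separate the drug from other changes
# (diagnosis, reporting, demographics) that occurred over the same period.
```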

Genetic and Molecular Epidemiologic Studies

Epidemiology, like other fields of biological and medical science, has in recent decades been touched powerfully by advances in scientists’ ability to identify and study the molecular constituents of human cells, tissues, and organs. Perhaps the best-known of these advances is the development of genomics: the study of the human genome, the sequence of DNA bases arranged in chromosomes that form

43. See Wilson v. Merrell Dow Pharms., 893 F.2d 1149 (10th Cir. 1990) (affirming judgment entered for defendant on a jury verdict). In Wilson, the defendant introduced evidence showing total sales of Bendectin and the incidence of limb-reduction birth defects during the 1970–1984 period. In 1983, Bendectin was removed from the market, but the rate of limb-reduction birth defects did not change. The Tenth Circuit held that the district court correctly determined that the timeline data were admissible and the defendant’s expert witnesses could rely on those data in rendering their opinions. Id. at 1152–53. See also Siharath v. Sandoz Pharms. Corp., 131 F. Supp. 2d 1347 (N.D. Ga. 2001). In Siharath, the court took note of the absence of secular trend data to support plaintiff’s claim. After describing why several observational studies produced, at best, inconclusive results about any association between use of the lactation-suppressing drug Parlodel and the very rare complication of postpartum stroke, the court observed: “No evidence has been offered of an increase in postpartum strokes after the drug [Parlodel] was approved for suppression of lactation; no evidence has been offered of a decrease in postpartum strokes after the approval for suppression of lactation was withdrawn.” Id. at 1358.

44. Michael D. Green, Bendectin and Birth Defects 70–71 (1996) (describing how thalidomide’s teratogenicity was discovered after Dr. Widukind Lenz found a dramatic increase in the incidence of limb-reduction defects in Germany beginning in 1960).

45. See Graddy v. Sec’y of Health & Hum. Servs., No. 08-416V, 2017 WL 11286536, at **18–22 (Fed. Cl. Aug. 31, 2017) (finding that the expert witness’s ecological study on childhood autism prevalence and the introduction or increased doses of certain vaccines did not allow for a reliable evidentiary inference of causation regarding MMR II, varicella, and hepatitis A vaccines and autism).

the basis of heredity.46 But genomics is just one of several fields of large-scale research on categories of biological molecules and features, such as epigenomics,47 proteomics,48 and others. Rapid technological and computational improvements have increasingly facilitated “omics” studies that can be performed on a larger scale, at a lower cost, and in a shorter time.49

Variations in genes and other biochemical constituents across the population may be associated with the incidence of disease or the ability to metabolize an agent, and therefore can be subject to epidemiologic study. Genetic epidemiology assesses the genetic components that, perhaps in combination with environmental or other risk factors, contribute to the development or progression of disease in human populations.50 More generally, molecular epidemiology “joins an understanding of disease at a molecular level with population-based study designs and approaches.”51 The number of epidemiologic studies that include molecular and genetic factors is quickly increasing.52

Genetic and molecular epidemiologic studies add to epidemiology the ability to consider types of data that were previously unavailable. Where researchers previously could collect data only on the health outcome of interest, the exposure of interest, and relatively easily observed other potentially relevant traits

46. See Int’l Hum. Genome Sequencing Consortium, Initial Sequencing and Analysis of the Human Genome, 409 Nature 860 (2001), https://doi.org/10.1038/35057062. Genomics is contrasted to genetics by its scale: genetics is the study of the inheritance or effect of one gene or a relatively small number of genes; genomics is the study of the entire human genome or large portions of it.

47. Epigenetic factors are biochemical features or constituents that affect the extent to which genes are “turned on,” that is, expressed through synthesis of the proteins the genes encode. They have been described as an “extra layer of instructions that influences gene activity.” Gail P. A. Kauwell, Epigenetics: What It Is and How It Can Affect Dietetics Practice, 108 J. Am. Dietetic Ass’n 1056, 1056 (2008), https://doi.org/10.1016/j.jada.2008.03.003. Epigenomics has been defined as “[t]he study of epigenetic marks . . . on a genome-wide scale.” Pauline A. Callinan & Andrew P. Feinberg, The Emerging Science of Epigenomics, 15 Hum. Molecular Genetics R95, R96 (2006), https://doi.org/10.1093/hmg/ddl095.

48. Proteomics is the study of the proteome, the “complete set of proteins made by an organism.” Nat’l Cancer Inst., Proteomics, Dictionary of Cancer Terms, https://perma.cc/74G5-ZWNB; Proteome, id., https://perma.cc/ZQ79-HX5R.

49. See, e.g., Matthew K. Sigurdson et al., Redundant Meta-Analyses Are Common in Genetic Epidemiology, 127 J. Clinical Epidemiology 40, 46 (2020), https://doi.org/10.1016/j.jclinepi.2020.05.035 (“The advent of genomewide agnostic approaches and massive new biobanks and consortia has created a new paradigm for human genome epidemiology.”); Sara Goodwin et al., Coming of Age: Ten Years of Next-Generation Sequencing Technologies, 17 Nature Rev. Genetics 333, 333–37 (2016), https://doi.org/10.1038/nrg.2016.49 (describing gene sequencing technologies and stating that cost of sequencing a single genome had been reduced to about $1,000).

50. This description is closely paraphrased from John S. Witte & Duncan C. Thomas, Genetic Epidemiology, in Timothy L. Lash et al., Modern Epidemiology 963, 963 (4th ed. 2021), https://doi.org/10.1093/aje/kwj102.

51. Claire H. Pernar et al., Molecular Epidemiology, in Lash et al., supra note 50, at 935.

52. See, e.g., Muin J. Khoury et al., Genetic Epidemiology with a Capital E, Ten Years After, 35 Genetic Epidemiology 845 (2011), https://doi.org/10.1002/gepi.20634.

such as age or socioeconomic status, researchers may now also be able to collect information about the study subjects’ genotype or relevant cellular or molecular components. But adding genetic or molecular information about the study subjects does not somehow turn epidemiology from a population-based science into the study of individuals, any more than including information about the study subjects’ exposures and health outcomes did. Nor do genetic and molecular epidemiology constitute new types of epidemiologic study designs. Rather, they apply existing modes of epidemiologic study to genetic or molecular information. Thus, genetic and molecular epidemiologic studies employ various combinations of the study designs, such as cohort studies and case-control studies, described in the preceding subsection. They are therefore subject to the same sources of error that must be considered in designing and interpreting the results of any epidemiologic study, with the particular concerns depending on the type of genetic or molecular data under study and the details of the study design.53

As with other epidemiologic studies, genetic and molecular epidemiology studies may be used to support or undermine claims of disease causation in litigation. Although commentators have long forecast that the output of genetic and molecular epidemiology would revolutionize causal proof, as of this writing few judicial opinions have addressed these types of studies, and it is far from clear that a revolution is in the offing. Nevertheless, it is increasingly likely that expert witnesses will rely on such studies in forming their opinions presented in courts.

In particular, genetic epidemiology may provide additional evidence of whether there is a causal connection between an environmental exposure and a disease at issue in litigation—either in a general sense or in the plaintiff’s specific instance. For example, a study found that maternal exposure to organophosphate insecticides was associated with shorter gestational duration only among infants who had a genetic variant that resulted in a slower elimination of these insecticides.54 Alternatively, genetic epidemiology may reveal associations between genetic variations and a plaintiff’s disease, raising the issue of whether or not a genetic variation may be a competing cause of the disease. This requires assessment of whether the gene–disease association is causal in a general sense, whether it acts independently of the exposure, and whether it is a competing cause in the plaintiff’s specific instance. The extreme, though not typical, example would be

53. Pernar et al., supra note 51, at 936 (emphasizing that although molecular epidemiology involves new “omics” technologies, “molecular epidemiology can only contribute valid and reproducible findings if epidemiologists critically integrate core methodological concepts of epidemiology”); id. at 947–52 (discussing usefulness of various epidemiologic study designs in molecular epidemiology research).

54. Kim G. Harley et al., Association of Organophosphate Pesticide Exposure and Paraoxonase with Birth Outcome in Mexican-American Women, PLoS One 6(8):e23923 (2011), https://doi.org/10.1371/journal.pone.0023923.

a health outcome or disease entirely determined by genetics,55 as is the case with sickle cell anemia.56
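The organophosphate example describes an association confined to one genetic subgroup. The sketch below uses invented counts, not data from that study (which examined gestational duration rather than a yes/no outcome), to show how stratifying by genotype can reveal an association that a pooled estimate dilutes. "Slow" and "fast" eliminators refer to a hypothetical variant affecting how quickly the agent is cleared.

```python
def risk_ratio(exp_cases: int, exp_n: int, unexp_cases: int, unexp_n: int) -> float:
    """Proportion with the outcome among exposed divided by that among unexposed."""
    return (exp_cases / exp_n) / (unexp_cases / unexp_n)


# Hypothetical counts by genotype:
# (exposed cases, exposed n, unexposed cases, unexposed n)
STRATA = {
    "slow eliminators": (36, 300, 18, 300),
    "fast eliminators": (42, 700, 42, 700),
}

for genotype, counts in STRATA.items():
    print(genotype, round(risk_ratio(*counts), 2))

# Ignoring genotype: sum each column across the two strata
pooled = [sum(col) for col in zip(*STRATA.values())]
print("pooled", round(risk_ratio(*pooled), 2))
```

Here the pooled ratio of about 1.3 understates the association among slow eliminators (2.0) and overstates it among fast eliminators (1.0), the pattern discussed in this chapter under effect modification.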

Molecular epidemiology may also become relevant to causation disputes in ways that go beyond genetics. In molecular epidemiology studies, as one researcher described them, “risk factors, outcomes, confounders or effect modifiers are measured with biomarkers.”57 Parties may use these types of studies to support or undermine claims that a plaintiff was exposed to an alleged toxicant, that an exposure caused a plaintiff’s disease or otherwise affected the plaintiff’s biochemistry or physiology, or that the plaintiff suffers from a particular condition or disease. In particular, parties may attempt to rely on research that seeks to identify “signature” molecular markers of disease etiology, with the validity, sensitivity, and specificity of the purported signatures likely to be in dispute.58 For example, in several cases involving claims that exposure to benzene caused a plaintiff’s leukemia, courts have considered the significance of certain chromosome aberrations as possible biomarkers of exposure or effect.59
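Footnote 58's point about sensitivity and specificity can be made concrete with likelihood ratios, a standard diagnostic-testing measure brought in here for illustration. The counts are hypothetical validation data: among cases known to have been caused by the exposure and cases known not to have been, how often is the marker present? The sketch assumes those validation results carry over to the case at hand, which is itself one of the validity questions the footnote raises.

```python
from dataclasses import dataclass


@dataclass
class MarkerValidation:
    """Hypothetical validation counts for a purported etiologic signature."""
    causal_with_marker: int        # true positives
    causal_without_marker: int     # false negatives
    noncausal_with_marker: int     # false positives
    noncausal_without_marker: int  # true negatives

    @property
    def sensitivity(self) -> float:
        total_causal = self.causal_with_marker + self.causal_without_marker
        return self.causal_with_marker / total_causal

    @property
    def specificity(self) -> float:
        total_noncausal = self.noncausal_with_marker + self.noncausal_without_marker
        return self.noncausal_without_marker / total_noncausal

    @property
    def lr_present(self) -> float:
        # Factor by which finding the marker multiplies the odds of causation
        return self.sensitivity / (1 - self.specificity)

    @property
    def lr_absent(self) -> float:
        # Factor by which NOT finding the marker multiplies the odds of causation
        return (1 - self.sensitivity) / self.specificity


high_sens = MarkerValidation(90, 10, 20, 80)   # sensitivity 0.9, specificity 0.8
low_sens = MarkerValidation(60, 40, 20, 80)    # sensitivity 0.6, specificity 0.8
print(round(high_sens.lr_absent, 3), round(low_sens.lr_absent, 3))
```

Absence of the more sensitive marker cuts the odds of causation to one-eighth, versus one-half for the less sensitive marker, consistent with the footnote's statement that absence of a highly sensitive marker is stronger evidence against causation.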

55. E.g., Bowen v. E.I. Du Pont de Nemours & Co., No. CIV.A. 97C-06-194 CH, 2005 WL 1952859 (Del. Super. Ct. June 23, 2005), aff’d, 906 A.2d 787 (Del. 2006) (discussing how a newly discovered test for a newly discovered genetic variant established that a genetic mutation rather than a toxic exposure was “the cause of” plaintiff’s condition).

56. The sickle cell trait is a well-known example of a genetically determined condition. See Muin J. Khoury & Janice S. Dorman, Genetic Disease, in Molecular Epidemiology 365, 370 (Paul A. Schulte & Frederica P. Perera eds., 1993).

57. Paolo Boffetta, Biomarkers in Cancer Epidemiology: An Integrative Approach, 31 Carcinogenesis 121, 125 (2010), https://doi.org/10.1093/carcin/bgp269. Confounders are discussed in the section titled “Biases” below and effect modifiers are discussed in the section titled “Effect Modification” below.

58. Validity, in this context, refers to the degree to which the measurement of the “signature” marker measures what it purports to measure, as well as to whether the selected marker is generalizable from the study that derived it to the case in which it is being used. Sensitivity refers to the ability of an “etiologic signature” to identify true causal cases, i.e., to avoid false negatives. Specificity refers to the ability of an etiologic signature to correctly identify cases that were not caused by the exposure, i.e., to avoid false positives. The absence of a highly sensitive etiologic marker would be stronger evidence against causation than would be the absence of a less sensitive marker. The presence of a highly specific etiologic marker would be stronger evidence for causation than would be the presence of a less specific marker. See Steve C. Gold, When Certainty Dissolves into Probability: A Legal Vision of Toxic Causation for the Post-Genomic Era, 70 Wash. & Lee L. Rev. 237, 265–76 (2013) (discussing these concepts); see also infra Glossary of Terms.

59. E.g., Henricksen v. ConocoPhillips Co., 605 F. Supp. 2d 1142, 1149–50 (E.D. Wash. 2009) (noting absence in plaintiff of chromosomal aberrations found more frequently in toxicant-induced acute myelogenous leukemia than in idiopathic cases); Hendrian v. Safety-Kleen Sys., Inc., No. 08-14371, 2014 WL 1464462, at *7 (E.D. Mich. Apr. 15, 2014) (describing plaintiff’s expert’s testimony that presence of chromosomal aberrations indicated prior exposure to benzene); Harris v. KEM Corp., No. 85 CIV. 2127 (WK), 1989 WL 200446, at *3–*5 (S.D.N.Y. Dec. 2, 1989) (denying defendant’s motion for summary judgment where plaintiff’s expert testified that plaintiff’s leukemia had chromosomal aberrations consistent with benzene exposure).

Epidemiologic and Toxicologic Studies

In addition to observational epidemiology, toxicology models based on animal studies (in vivo) may be used to assess toxicity in humans.60 Animal studies have a number of advantages. They can be conducted as true experiments by assigning exposure at random and carefully controlling and measuring exposure. Animal studies can also avoid other problems that human epidemiologic studies confront, such as confounding61 by, for example, genetics, social factors, or nutrition; participant refusal, non-compliance, or loss to follow-up; and ethical issues, including those involving certain subpopulations, such as pregnant women and children. Test animals can be sacrificed and their tissues examined, which may improve the accuracy of disease assessment.62 Animal studies often provide useful information about pathological mechanisms and play a complementary role to epidemiology by assisting researchers in framing and assessing the plausibility of hypotheses.

Animal studies have two significant disadvantages, however. First, animal study results must be extrapolated to another species, human beings, and differences in absorption, metabolism, and other factors may result in interspecies variation in responses. For example, one powerful human teratogen, thalidomide, does not cause birth defects in most rodent species.63 Conversely, some known teratogens in animals are not believed to be human teratogens.64 The second difficulty with inferring causation in humans based on animal studies is that such studies often use doses that are substantially higher than concentrations to which humans are exposed. This may require the estimation of dose–response relationships for lower doses that have not been tested in order to establish a dose at which no effect is expected. For many agents, such no-effect thresholds may

60. For an in-depth discussion of toxicology, see David L. Eaton et al., Reference Guide on Toxicology, in this manual.

61. See section titled “Effect Modification” below.

62. Ethical considerations against deliberately exposing humans to agents thought harmful, see supra note 18, have in the past not been thought to prohibit animal experimentation. Contemporary concern for the ethical treatment of animals used in research is reflected in a number of statutory and regulatory provisions. See, e.g., 15 U.S.C. § 2603(h)(1) (2022) (amended Toxic Substances Control Act requires Environmental Protection Agency to “reduce and replace, to the extent practicable, scientifically justified, and consistent with the policies of [the Act], the use of vertebrate animals in the testing of chemical substances and mixtures”); Nat’l Insts. Health, Animal Care & Use in the Intramural Research Program, NIH Policy Manual § 3040-2 (Mar. 18, 2022), available at https://perma.cc/9LW3-QJBQ; id. Appendix 1 (listing applicable statutes, regulations, standards, and policies).

63. Phillip Knightley et al., Suffer the Children: The Story of Thalidomide 271–72 (1979).

64. In general, it is often difficult to confirm that an agent known to be toxic in animals is safe for human beings. See Ian C.T. Nisbet & Nathan J. Karch, Chemical Hazards to Human Reproduction 98–106 (1983); Int’l Agency for Research on Cancer, Interpretation of Negative Epidemiological Evidence for Carcinogenicity (Nicholas J. Wald & Richard Doll eds., 1985) [hereinafter IARC (Wald & Doll)].

not be known or may not exist.65 Thus, inference conducted solely on the basis of animal studies is fraught with considerable uncertainty.66
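A toy calculation can make the low-dose extrapolation problem concrete. The Python sketch below is purely illustrative: the bioassay numbers are invented, and the two model forms (a linear no-threshold model and a simple threshold model, with hypothetical parameters `slope` and `threshold`) are generic shapes chosen for this example, not methods prescribed by this guide or by any agency. Both models agree closely with the hypothetical high-dose results, yet they diverge at the low, untested doses relevant to human exposure: one predicts a small but nonzero risk, the other predicts none.

```python
# Hypothetical high-dose animal bioassay: dose (mg/kg/day) -> observed fraction
# of animals with tumors. All numbers are invented for illustration, and a
# background tumor rate of zero is assumed for simplicity.
OBSERVED = {100.0: 0.20, 200.0: 0.41}


def linear_no_threshold(dose: float, slope: float = 0.002) -> float:
    """Illustrative model: risk proportional to dose all the way down to zero."""
    return slope * dose


def threshold_model(dose: float, threshold: float = 10.0, slope: float = 0.0022) -> float:
    """Illustrative model: no risk below an assumed threshold dose, linear above it."""
    return max(0.0, slope * (dose - threshold))


def compare(doses) -> None:
    """Print both models' predicted risk at each dose side by side."""
    for d in doses:
        print(f"dose {d:6.1f}: linear {linear_no_threshold(d):.4f}  "
              f"threshold {threshold_model(d):.4f}")


if __name__ == "__main__":
    print("Tested (high) doses, where both models fit the hypothetical data:")
    compare(OBSERVED)
    print("Untested (low) doses, where the models disagree:")
    compare([1.0, 5.0])
```

Because the hypothetical high-dose observations cannot distinguish between the two curves, the estimated low-dose risk depends on which model is assumed rather than on the data themselves.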

Toxicologists also use in vitro methods, in which human or animal tissues or cells are grown in laboratories and exposed to certain substances. While useful, this approach also faces the problem of extrapolation: whether findings from the artificial setting of tissues grown in laboratories can be generalized to whole human beings.67

Often toxicologic studies are the only or best available evidence of toxicity.68 Epidemiologic studies are difficult, time-consuming, expensive, and sometimes—when it is difficult to accurately measure exposure, or when the exposure or the disease is extremely rare—virtually impossible to perform.69 Consequently, epidemiologic studies do not exist for a large array of environmental agents. Where both toxicologic and epidemiologic studies are available, no universal rules exist for how to reconcile them.70 Researchers often employ an approach that considers the overall weight of evidence, that is, all of the relevant scientific evidence that addresses the question of interest.71 This methodology entails making a judgment about causation after carefully assessing the results, validity, and consistency of each epidemiologic and toxicologic study.72

65. See infra text accompanying notes 235–37.

66. See Soldo v. Sandoz Pharms. Corp., 244 F. Supp. 2d 434, 466 (W.D. Pa. 2003) (quoting the first edition of this reference guide); see also Gen. Elec. Co. v. Joiner, 522 U.S. 136, 143–45 (1997) (holding that the district court did not abuse its discretion in excluding expert testimony on causation based on the expert’s failure to explain how animal studies supported the expert’s opinion that the agent caused disease in humans).

67. For a further discussion of these issues, see Eaton et al., supra note 60, “Extrapolation from Animal (In Vivo) and Cell (In Vitro) Research to Humans.” A number of courts have grappled with the role of animal studies in proving causation in a toxic substance case. One line of cases takes a very dim view of their probative value. For example, in Johnson v. Arkema, Inc., 685 F.3d 452 (5th Cir. 2012), the appellate court concluded that the district court did not abuse its discretion in discounting an animal study because “studies of the effects of chemicals on animals must be carefully qualified in order to have explanatory potential for human beings.” Id. at 463 (quoting Allen v. Pennsylvania Eng’g Corp., 102 F.3d 194, 197 (5th Cir. 1996)). See also Becnel v. BP Expl. & Prod., Inc., No. CV 17-1758-SDD-EWD, 2021 WL 4444723, at *2 (M.D. La. Sept. 28, 2021) (noting that relying on animal studies, in the absence of epidemiologic studies, is . . . “of very limited usefulness”) (quoting Brock v. Merrell Dow Pharms., Inc., 874 F.2d 307, 313 (5th Cir. 1989)); In re Mirena IUD Prod. Liab. Litig., 169 F. Supp. 3d 396, 445 (S.D.N.Y. 2016) (holding inadmissible an expert’s reliance on animal studies without a sound basis for extrapolating those studies to humans). Other courts have been more amenable to the use of animal toxicology in proving causation. See Metabolife Int’l, Inc. v. Wornick, 264 F.3d 832, 842 (9th Cir. 2001) (holding that the lower court erred in dismissing animal studies per se, because they must be examined to determine whether they are appropriate as a basis for a causation determination); see also In re Paoli R.R. Yard PCB Litig., 916 F.2d 829, 853–54 (3d Cir. 1990) (questioning the basis for the lower court’s exclusion of animal studies and remanding for further development of the record); Drake v. Allergan, Inc., 2014 WL 12718976, at *1 (D. Vt. Oct. 23, 2014) (“The Court is mindful that animal studies present certain risks but these risks are not sufficient to exclude them categorically, especially where there is other evidence of causation as is the case here.”). The Third Circuit in a subsequent opinion in Paoli observed:

[I]n order for animal studies to be admissible to prove causation in humans, there must be good grounds to extrapolate from animals to humans, just as the methodology of the studies must constitute good grounds to reach conclusions about the animals themselves. Thus, the requirement of reliability, or “good grounds,” extends to each step in an expert’s analysis all the way through the step that connects the work of the expert to the particular case.

In re Paoli R.R. Yard PCB Litig., 35 F.3d 717, 743 (3d Cir. 1994); see also Cavallo v. Star Enter., 892 F. Supp. 756, 761–63 (E.D. Va. 1995) (courts must examine each of the steps that lead to an expert’s opinion), aff’d in part and rev’d in part, 100 F.3d 1150 (4th Cir. 1996).

68. The International Agency for Research on Cancer (IARC), a well-regarded international public health agency, evaluates the human carcinogenicity of various agents. In doing so, IARC obtains, evaluates, and synthesizes all of the relevant evidence, including animal studies as well as any human studies. IARC then publishes a monograph containing that evidence, IARC’s analysis, and a categorical assessment of the likelihood that an agent is carcinogenic. In a preamble to each monograph, IARC explains what each of the categorical assessments means. IARC may classify a substance as “probably carcinogenic to humans” solely on the basis of the strength of animal studies. Int’l Agency for Research on Cancer, Human Papillomaviruses, 90 Monographs on Evaluation of Carcinogenic Risks to Humans 9–10 (2007), https://perma.cc/J52S-ELWH [hereinafter IARC Papillomaviruses]. When IARC monographs are available, courts generally recognize them as authoritative. See Hardeman v. Monsanto Co., 997 F.3d 941, 967 (9th Cir. 2021) (affirming lower court’s admission of IARC categorization of glyphosate as “a probable carcinogen”), cert. denied, 142 S. Ct. 2834 (2022). But IARC has evaluated only a fraction of potentially carcinogenic agents, and many suspected toxic agents cause effects other than cancer. See IARC, Revised Preamble for the IARC Monographs (2021), available at https://perma.cc/XXY4-7DKF.

69. Thus, in a series of cases involving Parlodel, a lactation suppressant for mothers of newborns, efforts to conduct an epidemiologic study of whether it causes strokes were stymied by the infrequency of such strokes in women of child-bearing age. See, e.g., Brasher v. Sandoz Pharms. Corp., 160 F. Supp. 2d 1291, 1297 (N.D. Ala. 2001); see also In re Tylenol (Acetaminophen) Mktg., Sales Pracs. & Prods. Liab. Litig., No. 2:12-CV-07263, 2016 WL 3997046, at *7 (E.D. Pa. July 16, 2016) (in a series of cases involving liver damage from use of acetaminophen (Tylenol) at or above the suggested dosage, efforts to conduct an epidemiological study of whether Tylenol causes acute liver failure (ALF) were stymied by the rarity of drug-induced ALF). In other cases, a plaintiff’s exposure to an overdose of a drug may be unique or nearly so. See Zuchowicz v. United States, 140 F.3d 381 (2d Cir. 1998).

70. See IARC (Wald & Doll), supra note 64 (identifying several substances and comparing animal toxicology evidence with epidemiologic evidence). One explanation for the conflicting judicial treatment of toxicological studies of animals, see supra note 67, may be that when there is a substantial body of epidemiologic evidence that addresses the causal issue, courts find that animal toxicology has much less probative value. That was the case, for example, in several Bendectin cases. E.g., Turpin v. Merrell Dow Pharms., Inc., 959 F.2d 1349, 1359 (6th Cir. 1992); Brock v. Merrell Dow Pharms., Inc., 874 F.2d 307, 313 (5th Cir. 1989); see also In re Paoli R.R. Yard PCB Litig., No. 86-2229, 1992 U.S. Dist. LEXIS 16287, at *16 (E.D. Pa. 1992) (excluding evidence of or based on animal studies linking PCB exposure to various health effects). Where epidemiologic evidence is not available, animal toxicology may be thought to play a more prominent role in resolving a causal dispute. See Michael D. Green, Expert Witnesses and Sufficiency of Evidence in Toxic Substances Litigation: The Legacy of Agent Orange and Bendectin Litigation, 86 Nw. U. L. Rev. 643, 680–82 (1992) (arguing that plaintiffs should be required to prove causation by a preponderance of the available evidence). For another explanation of the Bendectin cases as well as other toxic tort case clusters, see Gerald W. Boston, A Mass-Exposure Model of Toxic Causation: The Content of Scientific Proof and the Regulatory Experience, 18 Colum. J. Env’t L. 181 (1993) (arguing that epidemiologic evidence should be required in mass-exposure cases but not in isolated-exposure cases). See also IARC Papillomaviruses, supra note 68; Eaton et al., supra note 60, “Toxicology and Epidemiology.” The U.S. Supreme Court, in General Electric Co. v. Joiner, 522 U.S. 136, 144–45 (1997), suggested that there is no categorical rule for toxicologic studies, observing, “[W]hether animal studies can ever be a proper foundation for an expert’s opinion [is] not the issue. . . . The [animal] studies were so dissimilar to the facts presented in this litigation that it was not an abuse of discretion for the District Court to have rejected the experts’ reliance on them.”

Interpreting the Results of an Epidemiologic Study

Epidemiologists are ultimately interested in whether a causal link exists between an agent and a disease. However, the first question an epidemiologist generally addresses is whether an association is observed between exposure to the agent and disease. An association between exposure to an agent and disease is observed when disease occurs more frequently (or less frequently) among those exposed than among those not exposed.73 Although a causal relation is one possible explanation for an observed association between an exposure and a disease, an association does not necessarily mean that there is a cause–effect relation. Interpreting the meaning of an observed association is discussed below.74

71. The methodology relies on expert scientific judgment to evaluate and weight the available evidence in order to reach the best conclusion. See Douglas L. Weed, Weight of Evidence: A Review of Concept and Methods, 25 Risk Analysis 1545 (2005), https://doi.org/10.1111/j.1539-6924.2005.00699.x. A number of courts have endorsed weight of evidence as a reliable methodology. See, e.g., In re Deepwater Horizon Belo Cases, No. 3:19CV963-MCR-HTC, 2022 WL 17721595, at *19 (N.D. Fla. Dec. 15, 2022) (stating that “weight of the evidence approach to analyzing causation can be considered reliable, provided the expert considers all available evidence carefully and explains how the relative weight of the various pieces of evidence led to his conclusion”) (quoting In re Abilify (Aripiprazole) Prods. Liab. Litig., 299 F. Supp. 3d 1291, 1311 (N.D. Fla. 2018)). As the court in In re Zantac (Ranitidine) Prod. Liab. Litig., 644 F. Supp. 3d 1075, 1168 (S.D. Fla. 2022), cogently observed: “Due to the ‘substantial judgment’ required of an expert in following this approach, it is crucial that the expert describe each step in the process by which he gathered and assessed the relevant scientific evidence” (quoting Abilify, supra, 299 F. Supp. 3d at 1311). When weight of evidence methodology is employed by a testifying expert, a critical step is adequately taking into account evidence contrary to the expert’s opinion. See Norris v. Baxter Healthcare Corp., 397 F.3d 878, 882 (10th Cir. 2005) (“We are simply holding that where there is a large body of contrary epidemiological evidence, it is necessary to at least address it with evidence that is based on medically reliable and scientifically valid methodology.”); Zantac, supra, at *129 (“a plaintiff’s expert must address epidemiological evidence that is inconsistent with his or her causation opinions”).

72. See sections titled “Statistical Power” and “General Causation” below.

73. A negative association suggests that the agent may have a protective or curative effect. Because the concern in toxic substances litigation is whether an agent caused disease, this reference guide focuses on positive associations.

74. See section titled “General Causation” below.

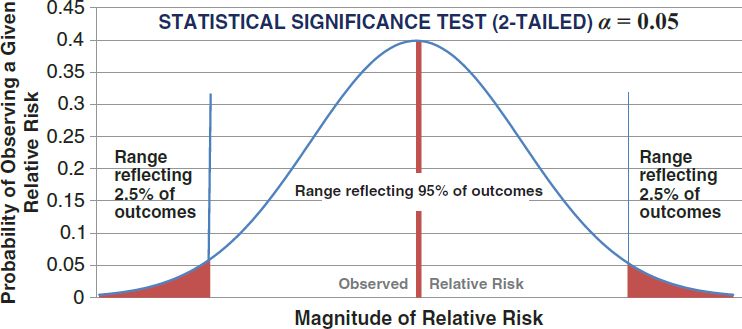

This section begins by describing the ways of expressing the existence and strength of an association between exposure and disease or other health outcome. It then addresses sources of error that may produce an incorrect or skewed association, including random chance (sampling error) and non-random or systematic error (bias). Systematic error may result, for example, from inaccuracies in ascertaining health outcomes and exposure, from the manner in which study participants and information about them are selected, or from confounding bias. This section also describes the statistical methods epidemiologists use to evaluate the importance of, and to address, these potential sources of error in judging whether an association is real.
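The idea of an association (disease occurring more or less frequently among the exposed than among the unexposed) and the role of random chance can be illustrated with a short Python sketch. It computes the risk in each group and their ratio (the relative risk, one of the measures of association this guide discusses) from hypothetical cohort counts, then simulates many hypothetical studies in which exposure has no effect at all. The function names, counts, and simulation settings are assumptions made for illustration only.

```python
import random
import statistics


def risk(cases: int, total: int) -> float:
    """Proportion of a group that develops the disease."""
    return cases / total


def risk_ratio(exp_cases: int, exp_total: int,
               unexp_cases: int, unexp_total: int) -> float:
    """Risk among the exposed divided by risk among the unexposed.

    A value above 1 indicates a positive association, below 1 a negative one.
    """
    return risk(exp_cases, exp_total) / risk(unexp_cases, unexp_total)


def simulate_null_ratios(n_per_group: int = 1000, true_risk: float = 0.02,
                         trials: int = 500, seed: int = 1) -> list:
    """Observed risk ratios in hypothetical studies where exposure has NO effect.

    Both groups share the same true risk, so any departure from 1 is due
    purely to sampling error (random chance).
    """
    rng = random.Random(seed)
    ratios = []
    for _ in range(trials):
        exp_cases = sum(rng.random() < true_risk for _ in range(n_per_group))
        unexp_cases = sum(rng.random() < true_risk for _ in range(n_per_group))
        if unexp_cases == 0:
            continue  # ratio undefined; vanishingly rare with these settings
        ratios.append(risk_ratio(exp_cases, n_per_group, unexp_cases, n_per_group))
    return ratios


if __name__ == "__main__":
    # Hypothetical cohort: 30 of 1,000 exposed and 15 of 1,000 unexposed develop disease.
    print("risk ratio:", risk_ratio(30, 1000, 15, 1000))
    ratios = simulate_null_ratios()
    print("median ratio with no true effect:", statistics.median(ratios))
    print("range with no true effect:", min(ratios), "to", max(ratios))
```

Even though the true ratio in the simulation is exactly 1, individual simulated studies produce ratios noticeably above or below 1 purely by chance, which is why an observed association must be evaluated for sampling error before it is treated as real.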