Limited Affordable Low-Volume Manufacturing: Summary of a Workshop (2014)

Summary of Workshop Presentations and Discussions

Robert Latiff, R. Latiff Associates

Robert Latiff welcomed participants to the workshop on limited, affordable, low-volume manufacturing, an activity of the National Research Council’s (NRC’s) Standing Committee on Defense Materials, Manufacturing, and Infrastructure (DMMI). The DMMI, which was formed under the auspices of the NRC’s National Materials and Manufacturing Board (NMMB), meets at the request of Reliance 21, a Department of Defense (DOD) group of professionals that was established in the DOD science and technology (S&T) community to increase awareness of DOD S&T activities and increase coordination among DOD services, components, and agencies.

Dr. Latiff noted that several of the presentations early in the day would focus on additive manufacturing. He said that additive manufacturing was not the intended workshop focus, but it is an important, timely topic that is relevant to low-volume production. He also noted that low-volume production may be relevant to sustainment,¹ for producing low volumes of replacement parts.

___________

¹ Here, sustainment refers to maintenance.

ADDITIVE MANUFACTURING AS A DISRUPTIVE TECHNOLOGY

Kenan Jarboe, President, Athena Alliance

Dr. Jarboe began his presentation by explaining that he was not going to describe additive manufacturing as a replacement for traditional manufacturing. He said that while the economic conversation has recently focused on the idea of a replacement technology, it is not the appropriate way to frame additive manufacturing technology. Instead, his talk would focus on additive manufacturing as a disruptive technology, put into the context of economics and other forces at work.

Dr. Jarboe then described the macro forces at work in the economy:

- The rise in the intangible economy. He said that there has been a shift in the factors of production away from tangible assets (such as land and capital) to intangible assets (such as knowledge). Knowledge is embedded not just in patents and copyrights but also in workforce skills, social relationships, and organizational processes. Dr. Jarboe pointed out that this creates a whole new series of factors in production that drive competitiveness. For example, the measure of the gross domestic product (GDP) has recently been modified to include intellectual property, which raised GDP overall by $500 billion.

- The fusion of manufacturing and services. Dr. Jarboe explained that the traditional breakdown of manufacturing and services does not make sense anymore, as they are intertwined. A book to be released by Massachusetts Institute of Technology, Production in the Innovation Economy (Locke and Wellhausen, 2014), confirms this as well. Dr. Jarboe gave the example of Apple, which has fused together manufacturing and services not only by creating iPods and iPads but also by integrating them with the iTunes service. Dr. Schafrik interjected that manufacturing has always used services, in the form of information flow, logistics, and transportation. Dr. Jarboe responded that the relationship is changing now, because the value added has transitioned from pure manufacturing (that is, economies of scale) to the services/knowledge part of the mix, including the high level of knowledge embedded in the products.

- The change of the innovation process. Dr. Jarboe explained that in the past, the process model was linear. Vannevar Bush popularized a linear model that showed basic research feeding into applied research, followed by technology development. Dr. Jarboe said that now there are many different models of innovation. He likes a “stew pot” model, in which multiple ingredients are mixed together. Models are now driven by user need, with bottom-up, design-based thinking involving rapid problem solving and prototyping.

- The move to the latest step in globalization, “Globalization 4.0.” Dr. Jarboe explained the different steps in globalization. Globalization 1.0 was characterized by growth in international trade. In Globalization 2.0, the supply chain became global, but production remained specialized in different regions. In Globalization 3.0, the complete economic structure became global, and the focus was on harmonizing the economic rules. In Globalization 4.0, production will be brought back to a very local system, though still within the global context.

Dr. Jarboe then defined a disruptive technology. He began with the example of the steam engine and the railroad. The steam engine was initially designed to be used as a pump (linear motion). The steam engine technology was then transitioned to the locomotive, where the pump’s linear motion was converted to rotational motion to turn the locomotive wheels. The railroad system was then overbuilt, causing shipping rates to drop precipitously. This series of events had three major impacts:

- New markets opened. With the overbuilding of the railroad, more kinds of retail companies (such as Sears) began shipping by rail, since costs were low. Thus, overbuilding the railroads opened entirely new markets. It also increased the demand for machine-based manufacturing (such as steel, machining of parts).

- Management structures changed. Management changes were needed to schedule trains and standardize time zones across the United States. Dr. Jarboe recommended the book The Visible Hand: The Managerial Revolution in American Business (Chandler, 1977) for its explanation of this phenomenon.

- Government processes changed. The railroads led to a faster rise of the civil service in that they called for synchronized introduction of new concepts such as time zones and standards reaching across the whole country—for example, a unified railroad track gauge. Dr. Jarboe then stated that, today, information technology would change government processes in a similar way. He was challenged on his statement by one participant; Dr. Jarboe responded by saying that, as an example, massively distributed and widespread Internet use would allow constituents to inform members of Congress of their opinions.

Dr. Jarboe then said the lesson from the railroad example is that a disruptive technology has two main characteristics: It allows for something new (not just an improvement on something already in existence), and it has spillover effects that create new activities. He pointed out that additive manufacturing is a perfect example of a disruptive technology using this definition. First, additive manufacturing began as a technique for rapid prototyping, but it allows

- Manufacturing new shapes that could not be manufactured before. For example, additive manufacturing techniques can create prosthetics that would have been prohibitively expensive using conventional techniques.

- Harnessing the new use of materials. Additive manufacturing can combine materials in ways that were not possible before—for example, making a single piece of variable density. Dr. Jarboe imagined a baseball bat made with variable density, hard at one end, soft at the other.

Dr. Jarboe explained that once the materials have changed, the design process needs to change as well, and a completely different approach to manufacturing is called for. This is a hallmark of a disruptive technology.

Second, Dr. Jarboe noted that additive manufacturing has several spillover effects that fit neatly into the macro forces he discussed at the outset of his talk:

- Additive manufacturing is based on knowledge, not physical assets. As a result, the manufacturing approaches change: manufacturing can now be accomplished anywhere there is a suitable printer.

- The economic structure changes. Manufacturing and service are now fused together.

- The innovation model changes. Manufacturing becomes more bottom-up, as designs can be changed at the user end rather than only at a large manufacturing company’s design department.

- Additive manufacturing enables Globalization 4.0. This means localized production—printing at home, for instance. However, access to raw materials can be difficult, so the model is better suited to a regional printing site; Dr. Jarboe gave the example of a local hardware store printing individual screws as the customer needs them. Dr. Latiff asked if Globalization 4.0 could occur without additive manufacturing. Dr. Jarboe responded that as additive manufacturing technologies improve, the technique will replace traditional tools in the same local geographic location(s).

Dr. Jarboe then discussed how additive manufacturing, as a disruptive technology, might change the design and function of weapon systems. He pointed out that whatever we can do with this technology our adversaries can do as well. Strategic and military readiness issues then get wrapped up in additive manufacturing development. Also, as power shifts from the production site to the raw materials site, control of raw materials becomes more important. The International Traffic in Arms Regulations (ITAR) is not so relevant when the design can be sent anywhere in the world and the parts manufactured additively there. A workshop participant pointed out that critical data are protected by ITAR, but Dr. Jarboe replied that the regulations may not be effectively enforceable. Dr. Jarboe concluded his talk by saying that spillover effects from additive manufacturing will occur, but we do not know where the technology will lead us other than that it will allow us to do things we have not been able to do before.

During the question-and-answer period, a participant pointed out that there is a long way to go before additively manufactured parts are considered reliable and durable. Dr. Jarboe said that additive manufacturing not only creates more options geographically but also allows flexibility for hybridizing: some components can be produced additively, others subtractively. The Federal Aviation Administration has ongoing work in the certification of airline parts with an additive manufacturing component.

Another participant pointed out that if we expect additive manufacturing to be disruptive, we should be seeing that disruption now in the toy market, where additive manufacturing use is widespread. However, Dr. Jarboe believed that the hobby toy market is still too small to see this effect. Another participant said that in the automotive industry, if there is a shortage or if it is impossible to make parts conventionally for some reason, the industry turns to additive manufacturing to make 10,000 parts or so as a stopgap measure. This is not disruptive, but supplemental.

A participant pointed out the problem of patent infringement, whereby it may become easier to make a patented product, putting the small manufacturer out of business. Dr. Jarboe agreed that this could be a problem, but switching to an open source business model might be a response. He noted that the underwater exploration community has designs and kits for small underwater robots that can be customized and built additively at a fraction of the price of conventionally produced robots. The result is an expansion of the market for such products so that the small manufacturers in underwater exploration can still thrive even though the basic designs are open to all.

Workshop participants then discussed the idea that the power structure will shift to the raw materials side, where there can be bottlenecks or chokeholds from the material suppliers. Dr. Jarboe pointed out that a range of materials might need to be kept on-site for production. He likened the situation to that of a paint shop, where the different materials are like the paint pigments. The paint color is custom-mixed based on a formula. Likewise, a manufactured piece could be custom-made from the different materials.

Another participant pointed out that additive manufacturing is at a crossroads, and if the technique is not picked up soon, it will remain only a niche application. The community is still in search of that big application. Dr. Jarboe agreed there is no “killer app” right now. However, hobbyists are making inroads as they develop important products and new additive-only designs. He again mentioned underwater robotics as a good example. He thought perhaps hobbyists could enlarge their businesses, or perhaps the hardware store model might prove successful.

Dr. Latiff asked what, if anything, was being done in Congress to get out in front of this issue. Dr. Jarboe pointed out that Congress is a reactive body that does not generally get out ahead of a problem. If the community can identify a specific issue, Congress can work to address it.

The discussion ended on the topic of design possibilities. Dr. Jarboe said that large-scale systems are not yet feasible for additive manufacturing. We cannot, for instance, use it to develop a whole airplane or building, at least at this stage of the technology. The challenge right now is to think about how individual additively manufactured components can change the design space.

LOW-VOLUME MANUFACTURING USING ADDITIVE PROCESSES

Dale Carlson, General Manager for Technology Strategy, GE Aviation

Dr. Carlson was unable to participate in the meeting because of a last-minute conflict in travel plans. Dr. Schafrik, General Manager, GE Aviation, presented Dr. Carlson’s ideas on his behalf.

Dr. Schafrik began the talk by showing the history and emergence of direct digital manufacturing (DDM), tracing it back to the macro-layered construction of the pyramids at Giza in 2300 B.C. and continuing through to today’s qualification of DDM and its transition from rapid prototyping to low-volume production for the aerospace industry.

Dr. Schafrik then noted some issues that must be addressed to accelerate the adoption of DDM. He divided these issues temporally into short-term, mid-term, and long-term challenges. In the short term (2014), a main issue of consideration is the improvement of surface finish. Another issue to be addressed is fatigue endurance. Dr. Schafrik noted that Dr. Carlson’s charts show tensile strength and ductility properties. Fatigue properties are also critically important for many applications. Achieving a fatigue life for DDM comparable to that achievable with conventional production methods is of interest, and this may require postprocessing steps. Process monitoring is another key issue. It includes such challenges as improving modeling tools, improving machines, controlling input materials (especially the powder feedstock), improving powder delivery mechanisms, and maintaining consistent energy sources to avoid stop/start during lengthy process cycles. Finally, another issue in today’s world is establishing industry standards. Developing common terminology is a related challenge.

In the medium term (notionally 2016), Dr. Schafrik said that the focus will shift to the development of better analytical tools to predict material capabilities. He pointed out that there will be application-specific and higher-capacity machines to ensure the process parameters are optimal for each manufacturing application.

In the long term (2018 and beyond), he said that there will be a focus on the development of best practices and the increased use of automation. Applications of the future include graded materials, bio-analog materials, and smart materials (these are difficult to process by conventional means). Dr. Schafrik noted that GE is interested in increasing the choice of sizes in which the product is available and the throughput of the process, with the latter being of more immediate interest.

After describing additive manufacturing as being in the early adoption phase of the sigmoidal adoption curve, he went on to discuss the systems used in additive manufacturing. The two primary systems currently are laser deposition and electron beam deposition, each of which has advantages. The powder bed/powder feed is a critical operation. As part of the qualification of the process, extraneous matter has been introduced into the powder bed to determine its resultant effect on the material properties. This experiment, with others, was helpful in establishing production standards and specifications. Dr. Schafrik noted that a limited number of alloy powders are readily available from powder suppliers, although larger users need volumes sufficient to warrant the production of specialty powders. He pointed out that during additive manufacturing much of the powder is swept off after some of each layer is consolidated, and the unused powder can be collected and used again. A research question is identifying how many times the powder can be used before its morphology changes too much to be reused. Other research questions include using wire feed instead of powder,² assessing layer thickness in real time, increasing chamber sizes, assessing tolerances, and improving surface finish. Properly processed alloys can approach the strength and fatigue properties of wrought materials.

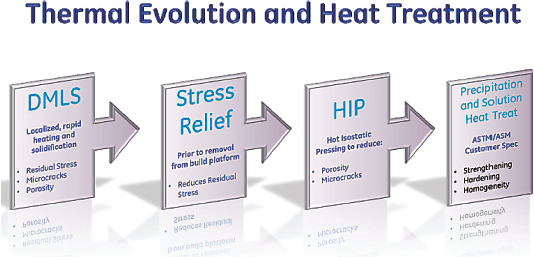

Dr. Schafrik then showed the processes needed to produce a structural component using laser deposition, illustrated in Figure 1. There are four primary steps:

- Direct metal laser sintering (DMLS). Apply localized, rapid heating, melting, and solidification;

- Stress relief. Reduce residual stress without distortion of the part; should be done prior to the removal of the part from the build platform;

- Hot isostatic pressing (HIP). Reduce internal porosity and microcracks; and

- Precipitation and solution heat treatment. Enhance the structural alloy via heat treatment processes to transform the as-deposited microstructure into an engineered microstructure.

___________

² For example, Sciaky makes an electron beam system that uses titanium wire rather than powder.

FIGURE 1 Processing steps needed to create a structural material. SOURCE: Courtesy of Dale Carlson, GE Aviation. Slide no. 6.

Dr. Latiff said that if the part being manufactured needs to be of high tolerance, the impact of these four processing steps on the physical dimensions may matter. Dr. Schafrik responded that one could do a finish machining operation. Dr. Latiff countered by pointing out that additive manufacturing is often used to make unique geometries that cannot be machined or made in any other way, so a machining operation would not always be a viable solution. The speaker later added that advances in process models and process controls will enable precision shape control of the finished part without the need for finishing operations.

Dr. Schafrik then compared four different manufacturing methods: additive manufacturing, wrought/forging, casting, and powder metallurgy. The advantages of additive manufacturing include the ability to make complex shapes with little waste, and to do so rapidly. The product’s properties are good, but the overall cost is currently just fair. Wrought and forged parts from cast ingots are widely used in manufacturing premium quality parts because of the materials’ superior fatigue properties, but the buy-to-fly ratio is poor. Dr. Schafrik reported that for aerospace parts, typically 6-8 lb of material are required to produce 1 lb of finished part. While this is a high ratio, it was formerly 10:1, so progress is being made. Casting is cost-effective, but there can be segregation and shrinkage on the microscale as the part solidifies, which introduces defects that can affect overall part life. A hybrid approach combines elements of additive manufacturing with elements of casting or forging.
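The buy-to-fly figures quoted above can be turned into a quick arithmetic check. The sketch below is illustrative only: the function names are hypothetical, and it assumes the ratio is simply purchased stock mass divided by finished part mass, as the 6-8 lb per 1 lb description suggests.

```python
def buy_to_fly(input_mass_lb: float, finished_mass_lb: float) -> float:
    """Ratio of purchased stock mass to finished part mass."""
    if finished_mass_lb <= 0:
        raise ValueError("finished mass must be positive")
    return input_mass_lb / finished_mass_lb


def scrap_fraction(ratio: float) -> float:
    """Fraction of purchased material that does not end up in the part."""
    if ratio < 1:
        raise ValueError("a buy-to-fly ratio cannot be below 1")
    return 1.0 - 1.0 / ratio


# Ratios mentioned in the talk: formerly 10:1, now typically 6:1 to 8:1.
for ratio in (10.0, 8.0, 6.0):
    removed = scrap_fraction(ratio)
    print(f"{ratio:.0f}:1 buy-to-fly -> {removed:.1%} of stock is removed")
```

Even at the improved 6:1 ratio, most of the purchased material is machined away, which is the waste that the comparison with additive manufacturing is pointing at.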

He pointed out that people often say that DDM results in mechanical properties similar to those of forged materials. However, the accuracy of that statement depends on the material system and the DDM processing and postprocessing steps to obtain the desired microstructure and resultant properties. Dr. Schafrik provided examples of the microstructure of a cobalt-chromium alloy, of Inconel 718, of a titanium alloy, and of an aluminum alloy, comparing an additively manufactured part (with heat treatment) to a wrought component. In the aluminum alloy example (a propeller made from 6061 aluminum), the heat-treated additively manufactured part actually had better properties than the wrought part.

Dr. Schafrik then showed a production part, a cobalt-chromium alloy fuel nozzle. It is designed in such a way that it cannot be conventionally manufactured and must be made via additive manufacturing. The cross section is very complicated, with multiple internal passages in the nozzle. The conventional part would need at least 10 braze joints, as well as multiple rounds of brazing. This would result in degraded properties. Using additive manufacturing, the part consists of only one piece, and its properties are improved. GE is planning to produce a number of these nozzles, which creates a need for reproducible results. GE also is investigating other materials in addition to the cobalt-chromium.

Dr. Schafrik mentioned that GE has formed a joint venture with Sigma Labs for real-time quality control. It is investigating a number of different concepts, and preliminary results are encouraging. The intent is to make the resultant quality control system available to the industry.

Dr. Schafrik pointed out that the use of additive manufacturing will create a supply chain shift. For this to happen on a large scale, supplier capabilities must be improved. Some requirements are these:

- Increase sophistication of additive process equipment;

- Enhance capability to produce more complex shapes;

- Modify current design practices to fully exploit DDM capabilities;

- Replace castings with DDM; and

- Reduce the time and cost to qualify the new process.

During the question-and-answer period, someone asked if GE is working on the development of the next generation of additive manufacturing machines. Dr. Schafrik responded that it is having discussions with equipment manufacturers, giving feedback on the improvements that should be made in the machines.

A participant pointed out that the cost of additive manufacturing of structural parts is high and asked if approaches are under way to reduce that cost. Dr. Schafrik said that companies are looking to reduce the cost of the powder and increase the throughput rate of the processing. The heat treatment steps are also candidates for cost reduction. He pointed out that in the case of the cobalt-chromium fuel nozzle, the part “bought itself” onto the engine by virtue of its superior cost, durability, and functionality.

Dr. Latiff pointed out that the fuel nozzle example would result in millions of parts produced. He wondered how this fits in with the workshop theme of low-volume production. Dr. Schafrik said that it depends on one’s definition of low volume. GE makes several thousand engines per year. Each engine has tens of fuel nozzles. Dr. Schafrik considers this low volume, especially given that many machines are required to produce even this limited volume; production is increased by deploying more additive machines. Another participant pointed out that DOD is really interested in rate-independent production rather than low-volume production. In general, DOD would like to see the same cost per unit rather than having the costs scale dramatically depending on the production volume. Variable-rate production is key.

Another participant asked if DDM could be used for replacement parts for engines. Dr. Schafrik said that the qualification of the part becomes a challenge.

Dr. Jarboe asked how GE changes its design processes to take advantage of DDM. Dr. Schafrik said that GE does quite a bit of testing to failure at the sub-scale and full-scale level. Why and how the part fails in the tests are analyzed. The analysis and lessons learned are incorporated into the design practice, including the mechanical design as well as materials processing.

DESIGN AND DEVELOPMENT OF ELECTRONICS AS CONTROLLABLE, WELL-CONTROLLED PROCESSES

David H. Johnson, Senior Electronics Failure Analyst, AFRL Materials Directorate

Mr. Johnson began by stating that his goal is to treat the electronics design and development processes as controllable, as is done for manufacturing. Electronics design in military applications is currently more of an art than a science—though he acknowledged that, as a failure analyst, he tends to see the worst cases. If the design and development of electronics are controllable, then the end product should be working and reliable the first time; in other words, the user should see a first-pass yield. The idea of a first-pass yield fits neatly into the idea of low-volume manufacturing. When one is building only a few of a particular item, it is efficient and cost-effective to avoid multiple iterations.

Mr. Johnson explained that avionics has had poor reliability since the 1970s. When a part was to be replaced, certain versions of the replacement part would work and others would not. The parts might function in other applications—that is, the problem has been not with part failure but rather with the part’s compatibility in subsystems and systems.

He made several observations about failures in electronics systems:

- Most electronic parts do not have inherent mechanisms for wearing out.

- Parts are the fundamental unit of design, assembly, and manufacturing. They are also the fundamental unit of failure, repair, and obsolescence. One misapplied or unstable part can render an entire system unstable and unreliable. It is critical for each part, at every circuit location, to be correct. For this to occur, the design and development processes must be completely controllable. Some parts have unavoidable life limits, but capable, controllable, well-controlled design and development processes should be able to identify life-limited parts and find alternative parts or circuit topologies that eliminate all, or almost all, sensitive parts. The goal is to have a failure-free service life.

- Parts fail, not systems or subsystems or assemblies. There are very few inherent wear-out mechanisms in electronics, and unless parts and materials are misapplied, there should be very few failures over the entire service life of a system. However, the DOD may have to tolerate a heavy maintenance cost on top of the high original manufacturing cost, driven by parts that are not fully suitable for application in harsh environmental conditions or in specific circuit locations.

Mr. Johnson said that most of today’s solid-state electronics parts, unlike vacuum tubes and other parts of the past, do not have wear-out mechanisms. The initial problems associated with today’s parts tend to be either poor quality or related to undocumented characteristic/parametric variability (i.e., vendor data sheets not documenting the full range of variability of essential parameters and characteristics). Mr. Johnson explained that the Rome Air Development Center field failure return study demonstrated this type of problem. Solid-state parts that had been removed for cause at Air Force repair depots were tested separately by each of the parts’ original vendors. It was determined that 85 percent of the parts that had been diagnosed as having failed and that were removed still complied with the applicable part-level acquisition specs. Parts were failing not because they failed to meet their specifications but because there was an underlying problem with how they were integrated into systems. Parameters key or critical to the proper function and performance of a given vendor’s version of a part, when it is used at a particular circuit location, were not, and still are not, controlled tightly enough by the acquisition specifications over time and temperature. This problem has been observed over and over again in failure analysis and investigations into the root causes of poor reliability and manufacturing yields. Mr. Johnson said there is no customer demand in the DOD for designers to identify, document, or control critical parameters of each and every part as applied at each circuit location. Mr. Johnson acknowledged that the task may sound monumental, but in reality, with modern computer-aided design (CAD) modeling and simulation capabilities, it would not be very onerous. Often, a small number of unstable parts in a system tend to dominate the maintenance demand. During design, circuit optimization should include minimizing the number of parts with inadequately controlled critical parameters. Those parts having critical parameters that cannot be adequately controlled by vendor or military acquisition specifications are the ones for which a detailed, location-specific specification needs to be developed. Such a specification would ensure that the one or two parameters important for proper performance and reliability at a specific circuit location are controlled within limits that account for parametric drift due to aging and thermal effects over the design service life, using test sampling to verify performance.

Mr. Johnson pointed out that most original equipment manufacturers (OEMs) are using commercial-grade parts, which typically have more specified characteristics and parameters as well as tighter limits than the military version of the same parts. However, for parts sold using the vendor’s own part numbers and specifications, there are disclaimer statements that allow the vendors to change any number of parameters (such as the construction of the part, design of the die, tested parameters, testing conditions, and accept/rejection criteria) without notice to those planning to use or already using their parts in design, manufacturing, and/or field support. As a result, the specification becomes a notional description of the part, rather than a true specification that actually controls design/application critical characteristics. Larger manufacturers prepare part specs of their own that establish and control parameters and associated limits critical for part use at each and every circuit location where applied. If buying in low volume, however, DOD OEMs are forced to buy parts through distributors, using the vendor’s own part number and specifications. When buying parts to a vendor-controlled specification, the delivered parts can vary substantially from the published vendor specification. These variations can occur from part to part within the same lot and certainly from lot to lot. This is a real problem if the part is to be used in a high-performance system where parts are being pushed to near-maximum performance limits. When, for standardization or availability purposes, more than one vendor’s part can be substituted during manufacturing and field support, rework and field reliability problems arising from poorly controlled critical parameters become much worse.

Mr. Johnson suggested that certain circuit locations be flagged to note where critical parameters need to be controlled more closely than is possible when using the available part-level acquisition specification. These parameters should be documented, including the tighter limits needed at a specific circuit location. These limits can then be used to sort parts and, thereby, enhance part suitability at particular sensitive circuit locations. This would give confidence that the system will work the first time used, with high reliability. In a study of an Air Force fire-control radar undertaken to identify root causes for very heavy maintenance demand, the DOD discovered a 94 percent correlation between specific circuit locations causing very heavy factory rework burden and those same circuit locations also causing the vast majority of field failures and maintenance demand. Indeed, manufacturing rework was found to be a very good predictor of field failures and reliability. Most importantly, factory rework data often accurately predict very early in an acquisition program which parts will cause the vast majority of field failures. Factory rework data identify those parts that have been misapplied during design and development. A well-controlled, closed-loop design process will not only prevent factory rework, cutting acquisition costs, but will also substantially reduce costs of ownership by reducing field failures. If a requirement is established for the OEM to determine the correct root causes of factory rework by identifying and gaining control of those key/critical parameters/characteristics missed in original design and development, field reliability will be improved and maintenance burden and costs driven down very early in the acquisition process. As part failures are driven down over the service life of a system, parts obsolescence is also significantly driven down. If an obsolescence problem does occur, knowing the circuit-location-specific critical part parameters allows the cheapest, fastest obsolescence solutions to be found (part-level solutions rather than circuit assembly redesign and manufacture).

A participant noted that Mr. Johnson reported on a large set of data correlating parts and locations with failures and asked if a systematic process could be put in place to design systems going forward. Mr. Johnson replied that one can identify problematic parts and parameters through Monte Carlo simulations and other variability and tolerance analysis techniques, even in cases where the parameters meet the specs. Then, design trades can be made to minimize the number of parts with critical parameters. The ones that must remain could be documented, along with limits that ensure proper function and performance over time and temperature, and the circuit specs amended accordingly.
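The kind of Monte Carlo tolerance analysis Mr. Johnson mentioned can be illustrated with a minimal Python sketch. Everything specific in it is an assumption made for illustration and was not part of the presentation: the circuit (a two-resistor voltage divider), the nominal values, the uniform distribution of part values within tolerance, and the output limits. It estimates how often a build meets a circuit-level limit when every individual part is within its own specification.

```python
import random

def divider_output(v_in, r_top, r_bottom):
    """Output voltage of an ideal two-resistor voltage divider."""
    return v_in * r_bottom / (r_top + r_bottom)

def monte_carlo_yield(n_trials, tol, v_limits, seed=0):
    """Estimate the fraction of builds whose output falls inside v_limits.

    Both resistors are drawn uniformly within +/- tol of nominal, so every
    simulated part "meets spec"; only the circuit-level result is checked.
    """
    rng = random.Random(seed)          # fixed seed for repeatable runs
    v_in, r_nom = 5.0, 10_000.0        # hypothetical nominal values
    low, high = v_limits
    passed = 0
    for _ in range(n_trials):
        r_top = r_nom * (1 + rng.uniform(-tol, tol))
        r_bottom = r_nom * (1 + rng.uniform(-tol, tol))
        if low <= divider_output(v_in, r_top, r_bottom) <= high:
            passed += 1
    return passed / n_trials

# Hypothetical circuit limit: output must stay within 2.45 V to 2.55 V.
limits = (2.45, 2.55)
yield_loose = monte_carlo_yield(10_000, 0.05, limits)  # 5 percent parts
yield_tight = monte_carlo_yield(10_000, 0.01, limits)  # 1 percent parts
print(f"yield with 5% parts: {yield_loose:.2f}")
print(f"yield with 1% parts: {yield_tight:.2f}")
```

In this toy case the 5 percent parts all meet their own specification, yet a sizable share of builds miss the circuit limit, while the 1 percent parts always pass. A result of that kind would flag the location as sensitive and suggest either a design trade or a documented, tighter sorting limit.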

Dr. Latiff said he was surprised that location-specific parameters were not specified or controlled. He wanted to know if Mr. Johnson would propose a change to military specifications on how parts are designed and built. Mr. Johnson said no, it is not possible to have vendors redesign or specify their parts for low-volume military applications. He is addressing design practices at the circuit card and above—ensuring that COTS parts (and all other grades of parts), when included in systems, do not create instabilities and failures in spite of the parametric variability that vendor disclaimer statements can allow/enable.

A participant asked if it would be possible to stop using the vendor’s part altogether and provide spec-controlled drawings instead. Mr. Johnson responded that that would be fine, provided vendors accepted the drawings and were willing to supply parts (in low volume) to them. If the part is low volume, part vendors would be reluctant to accept such drawings. As a result, it would probably be better to control the variability of the existing vendor part by sorting parts during original manufacturing so as to ensure entire-service-life suitability of each part used during original manufacturing.

Another participant noted the connection to the GE discussion of powder supplies for additive manufacturing. In that case, GE needs to verify the input to guarantee that it has received the requested material. The question becomes one of verification. In low-volume production, the costs do not typically include verification testing. Another participant noted that materials can vary significantly, and in-house testing will often be needed to make sure the material microstructure is accurate. In-house testing will guarantee that the material has the desired properties. With electronic parts, unlike materials, reliability can be time-dependent. Mr. Johnson also pointed out that vendor part testing is usually conducted at room temperature, and only a subset of parameters is tested. When field conditions are outside the testing parameters, more variation may be seen in the electronic parts. So, sorting of parts to ensure adequate control of critical parameters at each circuit location where such control is necessary must be done with testing across a range of temperatures rather than only at room temperature. Fortunately, most parts will have only one or two critical parameters that must be tested during sorting so as to ensure circuit location suitability: Not every parameter in a specification would need to be retested to tighter limits. And, experience indicates that only a subset of parts will need specific parametric sorting if circuit designs are optimized to reduce the number of sensitive circuit locations. These design practices are commonly used for high-volume commercial and industrial electronics. The design tools already exist to perform variability sensitivity analysis necessary to minimize sensitive circuit locations and to identify, document, and provide a means of controlling critical parameters that can’t otherwise be eliminated.
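The sorting step described above, in which one critical parameter is checked against location-specific limits across a temperature range, might look like the following Python sketch. The part identifiers, temperatures, measured values, and limits are hypothetical and chosen only to show a part that passes at room temperature but fails at the extremes; none of them come from the presentation.

```python
def suits_location(measurements, limits):
    """Return True if the parameter is within limits at every test temperature.

    measurements maps temperature (deg C) to the measured parameter value.
    limits is a (low, high) pair documented for one circuit location.
    """
    low, high = limits
    return all(low <= value <= high for value in measurements.values())

def sort_parts(parts, limits):
    """Split part IDs into (accepted, rejected) for one circuit location."""
    accepted, rejected = [], []
    for part_id, measurements in parts.items():
        if suits_location(measurements, limits):
            accepted.append(part_id)
        else:
            rejected.append(part_id)
    return accepted, rejected

# Hypothetical normalized gain measured at three temperatures.
parts = {
    "P1": {-40: 0.98, 25: 1.00, 85: 1.03},
    "P2": {-40: 0.93, 25: 1.00, 85: 1.08},  # fine at 25 C, drifts at extremes
    "P3": {-40: 0.96, 25: 0.99, 85: 1.04},
}
location_limits = (0.95, 1.05)  # hypothetical tighter limit for this location

accepted, rejected = sort_parts(parts, location_limits)
print("accepted:", accepted)
print("rejected:", rejected)
```

A room-temperature-only test would accept all three hypothetical parts; testing across temperature is what separates P2 from the others.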

Substantial savings would be realized in both acquisition and life-cycle support of our military systems if significant reduction of factory rework during original manufacturing and elimination of field failures could be achieved—especially if those failures that are caused by out-of-tolerance parametric drift are removed during the factory rework. Here, parametric drift is normally due to thermal effects that are not duplicated during diagnostic testing at our depots. In addition to significant reductions in acquisition and ownership costs, mission availability and readiness metrics would also be substantially improved as the goal of failure-free service life is approached.

Customer demand for a capable, controllable, well-established design and development process for electronics/avionics can and should be met. Such a process would, in part, require identifying, documenting, and controlling key/critical parameters over time and temperature at each and every location in a circuit assembly. The design tools and capabilities to implement these Engineering 101 concepts exist. The added time to perform the necessary engineering tasks would be minimal. There is a need to hold OEMs responsible for identifying root causes of rework and providing corrective actions such that virtually all parts, used at each and every circuit location, are suitable for the application and will function properly over the entire design service life. Given that most modern electronic parts technologies have no inherent wear-out mechanisms, if they are not misapplied, failure-free service life is a goal that could be accomplished in many of our military systems that today require maintenance attention following almost every mission. Tolerance analysis of signal paths causing high rates of cannot-duplicate failures could be used to identify and gain control of the parametric tolerance stacks causing these types of failures. Cannot-duplicate intermittent failures are the cause of huge expenses for maintenance, with faults often not found in spite of many attempts to diagnose and repair.

REDUCING TOTAL LEAD TIME WITH QUICK RESPONSE MANUFACTURING

Bill Ritchie, President, Tempus Institute

Mr. Ritchie began his talk by describing his own history in the manufacturing industry and how production volume has varied through his career. He illustrated the differences between the high- and low-volume markets. Mr. Ritchie started his career manufacturing 48,000 struts per day for General Motors. He then moved to making custom one-offs for truck bodies and dumpster collectors, with 4,000 customers a year. Later he produced engineered-to-order pumps and water-blasting equipment and, after that, developed custom gears. He pointed out that he knows from personal experience that high-volume manufacturing techniques do not apply to one-off production. His talk focused on quick-response manufacturing (QRM) and its applicability to low-volume manufacturing, and he recommended two seminal books on QRM, written by Rajan Suri, a professor at the University of Wisconsin (Suri, 1998, 2010).

Mr. Ritchie then described the Center for Quick Response Manufacturing at the University of Wisconsin, with which he is affiliated. The center was founded in 1993 as a university-industry partnership to address methods for lead time reduction in manufacturing. The center has over 200 member companies, including many international companies.

Mr. Ritchie explained that there are two types of variability in manufacturing: dysfunctional and strategic. Dysfunctional variability means that something went wrong, and it includes things such as redesigns, “hot” jobs requiring expediting, machine breakdowns, and wrong specs. Strategic variability is the result of changes in circumstances, and it includes such things as increased product options, custom-engineered products, and highly variable demand. Traditional manufacturing techniques seek to reduce variability, no matter the source. The purpose of QRM is to exploit strategic variation in addition to resolving dysfunctional variation. The overall goal is to reduce total lead time. When total lead time is reduced, other benefits are realized, such as improved quality and decreased costs. Mr. Ritchie gave an example of a company that reduced manufacturing lead time from 75 to 4 days, lowering the cost per part by 30 percent. In another example using QRM, lead times were lowered from 47 hours to 1 hour by reducing premanufacturing (i.e., administrative) lead time. Mr. Ritchie provided additional examples of total lead time reduction that lowered cost and increased profit.

Mr. Ritchie broke down QRM into four core concepts and described each one in more detail:

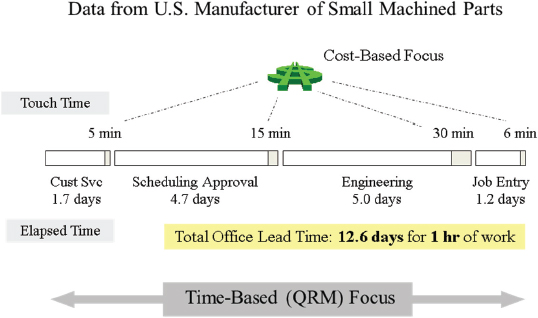

- Understand the power of time. Mr. Ritchie said that lead time drives everything: business understanding, decisions, and metrics. He pointed out that in most cases the touch time (the amount of time actually working with the product) is but a fraction of the overall elapsed time in a manufacturing project. This provides the opportunity to dramatically reduce the overall elapsed time by reducing the lead times at each step of the project. Figure 2 shows the time breakdown for an example manufacturing process. In the example, the office lead time was 12.6 days, during which only 1 hour of work was performed. Mr. Ritchie pointed out that most companies focus on improving touch-time efficiency—the direct labor portion—whereas the real saving to be gained is in total lead time. Overhead is usually 50 percent of total costs, while direct labor costs are 5 to 10 percent. He showed results from John Deere, looking at the relationships between total lead time reduction and cost for 12 of their projects. In the study, lead time decreased by anywhere from 36 to 94 percent, and overall cost decreased by an average of 25 percent.

- Organize the enterprise for responsiveness. The main idea behind QRM is to develop multifunctional cells that are organized around task completion instead of the traditional stovepipes, where each function is separate from the others.

- Understand and exploit system dynamics. Mr. Ritchie explained that long lead times tend to result in even longer lead times elsewhere in the project. Variation grows exponentially as project time increases.

- Develop a strategy for the entire enterprise. Mr. Ritchie emphasized the need to introduce specific techniques to reduce total lead time and not to focus on merely reducing costs.

Mr. Ritchie noted that companies traditionally focus on the touch time, because that is when the actual work is being conducted. However, the additional

FIGURE 2 Comparison of a cost-based focus and a time-based focus for a sample manufacturing process. SOURCE: Presentation by Bill Ritchie, Tempus Institute. Slide no. 13 (from Suri, 2010).

time spent managing projects is more expensive than the touch time, because the lead time dwarfs the touch time. He pointed out that the use of multifunctional cells reduces total time, but the touch time increases to enable this. Mr. Ritchie explained that the traditional practice is to multitask, to work on another project while waiting for a response from another team member. In a multifunctional cell, team members work on a project until the task is complete. In his example of a “basic” order, the use of multifunctional cells reduces total order time by 75 percent, but touch time increases by 15 percent. In his example of a “complex” order, total order times decrease by about 75 percent again, but touch time increases by 25 percent.
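The arithmetic behind these figures can be worked through in a short Python sketch. The 8-hour working day used to convert days to hours, and the starting values of 100 hours of total order time and 10 hours of touch time, are assumptions introduced only for illustration; the 12.6 days, 1 hour, 75 percent, and 15 percent figures are the ones reported in the talk.

```python
def touch_time_share(touch_hours, lead_time_days, hours_per_day=8.0):
    """Fraction of elapsed lead time that is actual touch time.

    hours_per_day is an assumed working-day length, not a figure from the talk.
    """
    return touch_hours / (lead_time_days * hours_per_day)

def cell_tradeoff(total_time, touch_time, total_reduction, touch_increase):
    """Return (new_total_time, new_touch_time) after moving to a cell.

    total_reduction and touch_increase are fractions, e.g. 0.75 and 0.15.
    """
    return total_time * (1 - total_reduction), touch_time * (1 + touch_increase)

# Office example from the talk: 1 hour of work within 12.6 days of lead time.
share = touch_time_share(1.0, 12.6)
print(f"touch time is about {share:.1%} of office lead time")

# "Basic" order: total time down 75 percent, touch time up 15 percent,
# applied to hypothetical starting values of 100 and 10 hours.
new_total, new_touch = cell_tradeoff(100.0, 10.0, 0.75, 0.15)
print(f"total: 100.0 h -> {new_total:.1f} h, touch: 10.0 h -> {new_touch:.1f} h")
```

Under these assumptions, touch time is roughly 1 percent of the office lead time, which is why a modest increase in touch time can be a small price for a large cut in total time.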

To summarize, Mr. Ritchie said again that QRM is a strategy that introduces specific techniques to reduce total lead time, not just focusing on direct cost. He said that quick response manufacturing concepts apply throughout the enterprise, with most of the improvement in time savings in up-front office applications. To actually reduce cost and meet schedule requirements, Mr. Ritchie said that one should focus on reducing total lead time. Finally, he pointed out that everybody wins under this strategy: End users and suppliers benefit from improved deliveries and total cost reductions.

Mr. Ritchie then addressed the issue of how to apply this to the DOD. He pointed out that affordability is a main concern for DOD, and lead time reduction could make certain programs more affordable. He pointed to the High Velocity Maintenance project, which investigated the total lead time for the repair of a C-130 and made adjustments to improve efficiency. Mr. Ritchie also said that total lead time could be the primary performance metric for all DOD manufacturing efforts.

In the question-and-answer period, Dr. Latiff commented that this talk seemed to focus on efficient manufacturing, not variable-rate or low-volume manufacturing. He asked how this fit into the framework of low-volume manufacturing. Mr. Ritchie replied that one-off manufacturing needs to be efficient, in terms of controlling total time.

Another participant noted that part of the cost premium in low-volume manufacturing is risk: part of the cost is moving up the learning cycle, and part of the cost is uniqueness (the fact that you cannot get the product anywhere else). The participant asked how the cost savings under QRM would translate to DOD and how the costs associated with risk, the learning curve, and uniqueness would be affected. Mr. Ritchie responded that the cost might benefit the company and not DOD, at least not directly. However, there are other benefits to QRM that would apply, such as overall decreased time and the ability to delay the manufacturing start.

Dr. Schafrik pointed out that using multifunctional cells tends to increase quality and decrease the number of redesigns. Problems can be identified quickly and can be fixed right when they occur. Collaborating in cells means fewer hand-offs. In short, everything improves when lead time is reduced. Other participants asked if the cells need to be colocated. Mr. Ritchie said that was critical. Another participant asked what has the biggest impact on decreasing the total time, and Mr. Ritchie responded that the single biggest issue is changing the mind-set of the employees, primarily the leaders.

Dr. Schafrik opened the discussion period by briefly summarizing each of the four talks from the workshop’s first day. He then pointed out that although the participants discussed how to define low volume, they did not come up with a good answer. He also noted that another driver in this area is part qualification, including the requirements needed for qualification. He pointed out that the workshop discussion had been more focused on responding to the needs of variable demand than on the needs of low volume. One participant noted that design, qualification, and acceptance testing become much more critical as you move to smaller lot sizes. Another participant said that bargaining power is important as well; a small customer among many other larger ones will not get much attention, but a single buyer will get more attention, even if the lot size is small.

A participant brought up the issue of COTS and pointed out that the military is dependent upon COTS supplier specs, which can change arbitrarily. Although Mr. Johnson had said, when asked, that he did not think military acquisition policy should change, some participants thought that this was in fact what his talk was recommending: that the military should provide the specs.

Another participant noted that a common point of discussion was the combination of low volume and high mix; the associated variety and lack of repetition are the big drivers. Mix and repetitiveness are actually broader concerns and impact all manufacturing, not just low-volume manufacturing. A participant noted the need for competencies and for a multiskilled workforce that could handle variety as it arises. The multiskilled workforce is as important as the equipment needed to address high mix in a cost-effective, viable manner.

A participant noted that additive manufacturing (AM) has often been equated with low-volume manufacturing, but that is not necessarily true. AM is a tool with a niche in certain applications, but it is not the solution to low-volume issues. Another participant gave the example of hearing aids: The single largest commercial application of AM, both in volume and in value, is hearing aid bodies. In this application, each practitioner supplies a laser profile file for each individual ear. Hearing-aid AM has not led to localized or diffuse production, however. Instead, large factories with many AM machines produce the parts.

Dr. Schafrik noted that the control systems for production are another key factor in low-volume, high-mix production.

As an example, a participant noted that there are companies producing tens of thousands of individualized parts per day via additive manufacturing.3 This is certainly not low-volume AM, though it is high-mix AM.

EXPLOITING ADVANCED COMPUTATIONAL RESOURCES FOR COMPETITIVE LOW-VOLUME MANUFACTURING

Nayanee Gupta, Research Staff, Science and Technology Policy Institute

Dr. Gupta began her presentation by noting that most manufacturing jobs today are high-tech jobs. For a company to remain competitive in this type of environment, it must introduce new design and production processes that leverage computational resources. Modeling and simulation tools have shown a high rate of return on investment for products that require complex engineering and for industries that have lengthy design and product development cycle times. Dr. Gupta noted that the Council on Competitiveness asserts that the United States is lagging behind other countries in the development of public-private partnerships to access high-performance computing as a shared resource. The European Union has developed a partnership (Partnership for Advanced Computing in Europe, or PRACE4) for sharing high-performance computing resources among small and medium-sized European manufacturers. Germany’s Automotive Simulation Center also has a collaboration program, SimTech,5 in automotive supply chain manufacturing. Dr. Schafrik pointed out that GE and many other aerospace companies have their own supercomputing facilities and do not depend on a national facility. He asked if the Council on Competitiveness study focused on smaller manufacturers. Dr. Gupta clarified that she was speaking about smaller manufacturers; the larger companies tend to have their own in-house resources to address modeling and simulation. She was characterizing low-volume manufacturers, typically small or mid-size companies.

___________

3Invisalign reportedly manufactures 50,000 custom, clear aligners for teeth per day using additive manufacturing processes.

Dr. Gupta then defined and described low-volume manufacturing. Low-volume manufacturing (LVM) is used for prototyping, for complex or customized products, and for high-mix, low-volume production. LVM typically needs short product development and prototyping times, and the incorporation of modeling and simulation could lower up-front costs. LVM must be flexible, so that multiple product designs can use the same tooling and equipment, and agile, so that process flow can switch among different product designs. Dr. Gupta then described how LVM can benefit from modeling and simulation: Science-based predictive simulation replaces costly trial-and-error experimentation in the design and development of complex or custom products. Dr. Gupta said that modeling and simulation can potentially benefit LVM through very large cost reductions, shorter design cycle times, improved efficiency, and enhanced performance. She cited two studies that quantified return on investment, one in terms of reduced cost (Weatherington, 2011), and one in terms of reduced development time (Littlefield, 2007).

Dr. Gupta explained that three elements are necessary for the adoption of modeling and simulation:

- Awareness of and access to simulation models, including technical knowledge for using the models. The application software must be at the right level of specialization. This is currently a problem in manufacturing. Although software packages exist for many classes of problems, they do not meet the specialized or tailored needs of complex manufacturing.

- Computing infrastructure and training. Currently, there is a lack of sufficient talent in these areas. Only the largest companies can assemble teams of experts with specialized knowledge of scientific and technical programming. High-performance computers and programming skills are needed.

- Cost and expertise, including infrastructure, license, and trained personnel. Businesses would like to exploit the problem-solving power of high-performance computing, but they find it too expensive. The cost of software licenses can also be prohibitive for a smaller manufacturer.

___________

4http://www.prace-ri.eu/. Accessed on September 30, 2013.

5http://www.simtech.uni-stuttgart.de/. Accessed on September 30, 2013.

Dr. Gupta then discussed the importance of simulation models in manufacturing. She explained three types of simulation models:

- Physics-based research and development models. These models are broadly applicable across several industries and product types (flow models, material property models, thermal models, and so forth).

- Product and process models. These models use CAD and computer-aided engineering (CAE). They include integrated design and process models; tighter integration between design and process reduces the technology development cycle time.

- Full-scale manufacturing models (cost and process models).

Dr. Latiff asked about the model assumptions, such as macrostructure and material properties. Dr. Gupta responded that there is a succession of multiscale models. Material property models feed into device models, which feed into circuit models, and so forth.

Dr. Gupta pointed out that a wide array of simulation models exist at universities and national laboratories. However, these models do not transition well to the private sector at the level of the small to mid-size manufacturer. The software requires an application software “wrapper” to solve a specific engineering problem. An example of this is finite-element analysis in collision analysis. There are several layers between the underlying finite-element model and the specific engineering problem. Fewer than half of manufacturers have the resources to access or use this software. Dr. Gupta then proposed open-source model development to facilitate access to a broader population of manufacturers. If this is done from the beginning of the model development, the user and developer community potentially could expand. A successful example to follow would be the National Weather Service model for hurricanes, WAVEWATCH III.6 This model is open source and actively enhanced by the research community.

Dr. Gupta then presented results from two different surveys showing that computing infrastructure is desired by the community. The National Center for Manufacturing Sciences reported that 75 percent of respondents believe there is a competitive value in advanced computational models but less than 50 percent said they had the expertise needed to use them.7 Similarly, a Council on Competitiveness survey of 77 companies using computers for prototyping and large-scale data modeling found that around 50 percent of the respondents were limited by computing capability.8

___________

6http://polar.ncep.noaa.gov/waves/index2.shtml. Accessed on September 30, 2013.

Dr. Gupta then cited four different cost impediments to accessing high-performance computing:

- Hardware costs, though Dr. Gupta acknowledged these costs were continually decreasing.

- Maintenance costs, particularly the staff costs associated with having dedicated, trained personnel.

- Software costs, which are more significant for smaller manufacturers.

- Technical costs such as porting across systems, scaling up to large-scale modeling on parallel systems, and integrating complementary software tools.

Dr. Gupta then outlined potential usage models to facilitate access to computing resources. She suggested developing a shared resource that would bundle hardware, software packages, and technical talent. She singled out the Ohio Supercomputing Center9 as an example of this usage model. She suggested that sharing resources in this manner would also be useful to aerospace and automotive manufacturers. Dr. Latiff asked how actively and how well these types of centers were currently being used. Dr. Gupta responded that, anecdotally, heavy machinery and tooling companies have provided extremely positive feedback, and they value being able to offload the responsibilities associated with maintaining their own computing facilities. The dedicated staff would be a burden to these companies. She said she could provide other examples if they were wanted.

To summarize her talk, Dr. Gupta provided three technology-related suggestions to enhance access to high-performance computing:

- Better usage models for shared access to high-performance computing;

- Customized simulation tools for specific applications in different engineering communities; and

- Open-source, integrated, dynamic models. Federal agencies should take the lead on both developing and disseminating these models.

___________

7National Center for Manufacturing Sciences, 2010, Modeling and Digital Simulation among U.S. Manufacturers: The Case for Digital Manufacturing, Intersect360 Research.

8Reflect: Council on Competitiveness and USC-ISI In-Depth Study of Technical Computing End Users and HPC is available at http://www.compete.org. Accessed on September 30, 2013.

9https://www.osc.edu/. Accessed on September 30, 2013.

She ended her talk with four policy-related suggestions to facilitate access to high-performance computing:

- Institutes such as the National Network of Manufacturing Institutes,10 established to provide computation resources to small and mid-size manufacturers,

- More shared facilities on the model of the Ohio Supercomputing Center,

- Workforce training, and

- More analysis to determine the best way to introduce high-performance computing into the workflow.

Dr. Latiff opened the question-and-answer period by noting that other states fund regional centers like the one Dr. Gupta described in Ohio. He asked if the federal government supported the regional centers, or if federal agencies have created their own. Dr. Gupta replied that the national laboratories have shared resource facilities, particularly in the Department of Energy. There is a problem with awareness and with connecting the right manufacturers with the right laboratory capabilities. Dr. Latiff pointed out that the manufacturers would need help and expertise, not just facilities.

A participant asked if there was enough expertise and talent being developed through the U.S. university system to meet the demand for high-performance computing. Dr. Gupta said that was a difficult question to answer. There is some trained expertise, but probably not enough, as the needs are substantial.

A participant noted that high-performance computing and simulation were not sufficient; manufacturers need equipment to ultimately test models. That requires infrastructure, something that the U.S. government tends not to have. The participant noted that Norway has a good facility for metallurgy. Another participant noted that Canada has an outstanding computing facility for wood materials; the wood suppliers in Canada fostered its development.

A workshop participant brought up the idea of validation and verification (V&V). He pointed out that a program exists in the DOD to replace very expensive shock trials with modeling and simulation. The first program to use the models would have to conduct the expensive testing anyway, to validate the models, and no program wants to bear this cost. Also, when a company brings in a new missile, DOD requires new V&V to ensure existing models apply to the new missile, so the modeling is not useful. How can this problem be overcome? Dr. Gupta responded that it is necessary to leverage similar work, looking across disciplines and collecting and analyzing as much data as possible. The solution should be found iteratively, rather than perfecting the answer for each specific application. The participant responded that, in industry best practices, each program is assigned an additional objective, which is to do something for the first time. DOD, however, does not have this culture.

___________

10http://manufacturing.gov/nnmi.html. Accessed on September 30, 2013.

Dr. Schafrik noted that the real gap is in software, not in access to high-performance computing. Small companies may not have access to supercomputers, but their software was not written with parallel processors in mind anyway, so lack of access is not the hindrance. His company, GE Aviation, works with small suppliers to advise them which software to use and how best to employ it.

Another participant noted that there are other shared access models worth examining. For instance, the Manufacturing Extension Partnership11 is a successful program in the manufacturing environment and has a presence in all 50 states.

Someone asked about the state of the art in commercially available software. Dr. Gupta said that the answer depends upon the product or sector. There is no one software application that covers modeling from microstructure to the finished product. Most software in manufacturing is by necessity very specialized. No standardization has been performed in software and protocols.

The final question asked how the United States compares with the more successful European nations. Dr. Gupta said that Germany has clusters in place, and the European Union has policy and planning programs to help with access to high-performance computing.

Michael McGrath, Vice President for Systems and Operations Analysis, Analytic Services, Inc. (ANSER)

Dr. McGrath focused his talk on the recommendations of the NRC study Equipping Tomorrow’s Military Force: Integration of Commercial and Military Manufacturing in 2010 and Beyond (NRC, 2002), which was sponsored by the Joint Defense Manufacturing Technology Panel. He pointed out that before the study began, the main concern in civil–military manufacturing integration was saving money. The 2001 terrorist attacks occurred as this study was being conducted, and the main concerns in civil–military integrated manufacturing shifted to rapid response and technology superiority. In 2013, however, the focus of manufacturing integration in the United States has returned to saving money.

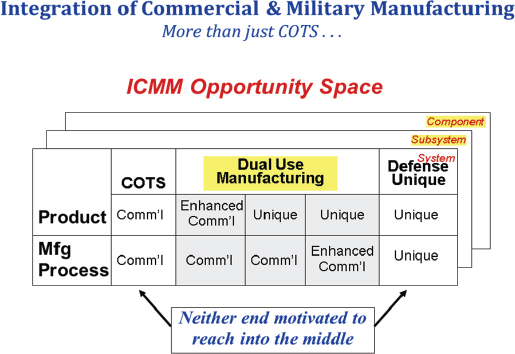

The report pointed out that there are opportunities at the system, subsystem, and component levels for using commercial technology in military systems. Opportunities increase as one moves down to the component level. It is rare that a

___________

11http://www.nist.gov/mep./ Accessed on September 30, 2013.

FIGURE 3 Opportunity space for integrated commercial and military manufacturing. SOURCE: Presentation by Michael McGrath, ANSER. Slide no. 4. (from NRC. 2002. Equipping Tomorrow’s Military Force: Integration of Commercial and Military Manufacturing in 2010 and Beyond. Washington, D.C.: The National Academies Press, p. 24).

commercial product could replace a military system. Dr. McGrath explained that the military acquisition spectrum tends to work at the two ends of the spectrum: purely military manufacturing or purely COTS. Figure 3 shows the opportunity space of integrating commercial and military manufacturing. The report focused on the middle portion of the figure, where dual-use manufacturing is utilized. Dr. McGrath provided four examples of such dual use:

- Example 1. The development of military products from commercial lines. This project was a joint Air Force ManTech/TRW project. This was an acquisition experiment in addition to being a technical experiment. The project involved parts for the F-22 and the RAH-66 (Comanche). It demonstrated military module manufacture on a high-quality commercial line; the use of commercial plastic parts and supplier systems to reduce cost; new contracting practices to access commercial suppliers; flexible computer-integrated manufacturing; and the use of integrated product and process development (IPPD) management and integrated product teams (IPTs). Dr. McGrath indicated that the project worked well, and a 30-50 percent cost saving for electronics modules was expected. However, the F-22 went into substantial redesign and the RAH-66 was cancelled, so the parts never went into production. Nevertheless, the project demonstrated that military parts could be made in a commercial facility.

- Example 2. Integration of commercial and military manufacturing at Rockwell Collins. Rockwell Collins’s mixed military and commercial contract manufacturing within the same plant resulted in large savings. (Most contractors use separate plants for producing military and commercial parts.)

- Example 3. Northrop Grumman ALQ-135. A commercially produced electronic warfare subsystem was created, resulting in a 52 percent reduction in costs.

- Example 4. DARPA miniature air-launched decoy. The goal of this project was to see how far the use of commercial components could be taken. The flyaway cost was $30,000, and such unconventional items as soda vending machine parts, surfboard fabrication techniques, and cell phone components were used in the manufacture. The decoy did not have the range and endurance required for Air Force use, however. Northrop Grumman redesigned the craft, after which the program was cancelled and then reconstituted. Raytheon developed a new decoy with a price more than quadruple the original Defense Advanced Research Projects Agency (DARPA) target. Raytheon is now outsourcing the electronics to commercial entities.

Dr. McGrath stated that the 2002 NRC report Equipping Tomorrow’s Military Force contained five primary findings:

- Defense system integrators have a pivotal role in integrated commercial and military manufacturing. They decide where to source the components, so their role is important.

- Demonstrations have shown that integration can be conducted successfully at the subsystem level.

- Commercial trends make integration more attractive. For instance, electronics and other systems are improving; commercial processes are becoming digital and more flexible; and contract electronics manufacturing was a $70 billion per year industry at the time of this report (now, it is probably closer to $200 billion per year).

- Long-standing barriers to integration exist. Onerous acquisition rules are not acceptable to commercial suppliers. Commercial item procurement is not applicable to research and development. Intellectual property rules are unsettling and inconsistent to commercial suppliers. Acquisition cycle times are too slow in the military; by the time government is ready to purchase a commercial part, the part often has been changed or is no longer available. Profit policies do not fit the commercial model and discourage outsourcing. DOD is not willing to share savings with industry.

- DOD is not currently equipped to understand the commercial marketplace. Individual program managers cannot pioneer acquisition reform alone. Their training does not include anything related to the commercial market.

To address these findings, Dr. McGrath referred to six primary recommendations of the 2002 NRC report:

- Implement policies, incentives, and guidelines for integrating commercial and military manufacturing.

- Contract for life-support and technology refreshment.

- Establish a commercial acquisition academy at the Defense Acquisition University to augment training and education.

- Fund and execute rapid-response demonstration programs to build a broad experience base in integrated commercial and military manufacturing (ICMM).

- Create mechanisms to increase awareness of future commercial technology and capabilities.

- Invest in research and development to increase the mutual compatibility of military operating environments and commercially produced components, to mitigate technical barriers.

Dr. McGrath then explained why he believes this report is still unfinished business. He noted that the recommendations were issued a decade ago, but the defense acquisition culture has not changed. He gave the example of an improvised explosive device (IED) jammer from 2005. This project was given high priority by then Defense Secretary Donald Rumsfeld, who asked for 10,000 units in 60 days. To enable this, the government held a 30-day competition. A commercial supplier, M/A COM Technology Solutions, built 8,000 units in 60 days and viewed the project as “patriotic production.” There were no cost savings, but the response time was dramatically shorter than usual (traditional companies were quoting 12 months or 8 months at best, whereas M/A COM was able to complete it in 60 days). Related follow-on work went back to normal government procurement practices and was awarded to a traditional defense supplier. In another program after this, DOD was building a multifunctional radio frequency system, and it invited a dozen electronics manufacturing services (EMS) companies to brief it on the project. Those companies explained that they could build a large fraction (say, 90 percent) of the components, but the system would need to be designed so as to allocate the commercial fraction on a separate board from the remaining 10 percent. If everything was integrated at the subsystem level, the EMS companies could not be involved because their separately produced components could not be incorporated. A traditional defense supplier was selected as the systems integrator, however, and no EMS companies were part of the program. The lesson learned was that use of commercial suppliers needs to be considered by the defense prime during systems engineering.

Dr. McGrath summarized by saying there may be technical issues, such as the need for trusted components, but in general technical issues are not the main problem. The main problem has to do with the acquisition process. He suggested this initiative could be tied to DOD’s Better Buying Power 2.0 program. He concluded by asking the group for ways to make constructive progress on ICMM in this era, since we cannot afford to continue to do what we are doing now.

Dr. Latiff began the question-and-answer period by suggesting that the 2002 NRC study should be revisited in depth to learn what has worked and what the impediments are. Another participant pointed out that contract manufacturing has changed and is already changing again. The relative competencies and quality of companies in countries around the world have shifted dramatically in the last 10 years.

Another participant was taken with the concept of the F-16 decoy assembled from commercial parts. He wondered what else in our inventory could be made in such a way. This could be a strategic issue, whereby cost would be a significant advantage. Another participant suggested that this may be another example of the United States “over-engineering” problems. He said this could be exemplified by comparing the very expensive technology for human spaceflight produced by the United States to the much less expensive, “thrown together” model used by the U.S.S.R., yet both systems flew successfully. Another participant described a study on innovation in the military field. In this study, Marines needed to create a water system and did so by taking commercial parts and putting them together in novel ways to solve the problem. Integration happens in the field to solve critical problems; however, this does not cycle back to any formal design.

A participant gave two examples of current DOD projects that include COTS. The XM29 Punisher system is a COTS turnkey solution. Also, the Navy radar program for DDG-1000 is a COTS-based solution. These programs show that there is some desire to save money in DOD by moving to the commercial sector. The network integration evaluation (NIE) receives user input on early prototypes and is including COTS in its prototypes.

TURN DOWN THE VOLUME: DESIGN FOR LOW-VOLUME MANUFACTURING

Eric Schneider, Mechanical Engineer, Key Tech

Mr. Schneider began his presentation by describing his organization. Key Tech12 is a product development firm based in Baltimore with three main markets: medical (its primary market), industrial (such as electromechanical devices and R&D instruments), and consumer devices (such as hand tools). He showed a typical product timeline, from concept to prototype to handing over for manufacture. He noted that Key Tech works on prototypes but is not a manufacturing firm per se. He explained that the duration of the entire process varies from project to project. For example, a blood meter took about 2 years from concept to completion, including testing.

Mr. Schneider provided a case study example: a neural drug device delivery system that provides a precise injection dose during a neurosurgery procedure. This device was the case study in an article he authored in 2010 (Schneider, 2010). Key Tech was responsible for the development process from R&D through Food and Drug Administration (FDA) approval. Key Tech then teamed with a contract manufacturer for the preliminary builds and for production of the final product. The preliminary build lot size was the 10-20 units needed for clinical trial verification. The lot size in final production would be fewer than 100 units per year. Mr. Schneider then described some of the challenges associated with the development of this system:

- Display and interface. Key Tech could not justify an expensive off-the-shelf iPad-like display and instead developed a monochrome liquid crystal display.

- Protective window and enclosure fabrication. The product needs a protective window. It would normally be manufactured via injection molding in a large-scale production facility, but injection molding would be cost prohibitive in low volumes. Key Tech then moved to a variation of thermoform molding as the alternative mode of manufacture. This was also true for the enclosure fabrication. Dr. Latiff asked about using additive manufacturing to develop the injection molding tooling, but Mr. Schneider responded that Key Tech has been unable to find a company willing to do this on a commercial scale. Another participant noted that the Army’s Edgewood Chemical Biological Center is using metal laser sintering technology to create mold inserts for injection-molded parts.

- Software/firmware. All code must comply with the IEC 62304 international standard for medical device software. Mr. Schneider noted that software development is inherently manpower-intensive. To reduce software costs, the development time must be reduced. Mr. Schneider noted that Key Tech could use an off-the-shelf operating system, but then the operating system must be validated in its entirety.

___________

12http://www.keytechinc.com/. Accessed on September 30, 2013.

- Managing customer expectations. Customers now expect an iPad-like interface, and they had to be convinced of the utility of a simpler, less expensive interface.

- Contractors. It is difficult to find contract manufacturers to build only 10-20 units for the preliminary build.

- Syringe pump (a.k.a. syringe driver) sourcing. It can be difficult to find COTS parts that will work for the life cycle of the product; if the COTS company changes its component design, it can be difficult to find legacy parts.
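The tooling trade-off noted in the protective window item above (injection molding being cost prohibitive at low volumes, with thermoforming chosen instead) can be illustrated with a simple break-even calculation. The following is a minimal sketch only: the cost model (a one-time tooling cost plus a constant per-unit cost) and every dollar figure are hypothetical assumptions chosen for illustration, not numbers presented at the workshop.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Process:
    """A process modeled as one-time tooling cost plus constant per-unit cost."""
    name: str
    tooling_cost: float  # one-time cost in dollars (hypothetical figure)
    unit_cost: float     # recurring cost per part in dollars (hypothetical figure)

    def total_cost(self, units: int) -> float:
        """Total cost of producing `units` parts with this process."""
        return self.tooling_cost + self.unit_cost * units


def break_even_units(high_tooling: Process, low_tooling: Process) -> int:
    """Smallest lot size at which the high-tooling process costs no more in total."""
    saving_per_unit = low_tooling.unit_cost - high_tooling.unit_cost
    if saving_per_unit <= 0:
        raise ValueError("the high-tooling process never catches up")
    extra_tooling = high_tooling.tooling_cost - low_tooling.tooling_cost
    return max(0, math.ceil(extra_tooling / saving_per_unit))


# Hypothetical figures, for illustration only.
INJECTION = Process("injection molding", tooling_cost=40_000.0, unit_cost=5.0)
THERMOFORM = Process("thermoforming", tooling_cost=4_000.0, unit_cost=25.0)

if __name__ == "__main__":
    lot = 100  # roughly the annual volume mentioned for final production
    for process in (INJECTION, THERMOFORM):
        print(f"{process.name}: ${process.total_cost(lot):,.0f} for {lot} units")
    print("break-even lot size:", break_even_units(INJECTION, THERMOFORM))
```

Under these assumed figures the high-tooling process only pays off at lot sizes far above 100 units per year, which matches the qualitative point made in the presentation. Real quotes would shift the break-even point considerably.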

A participant pointed out that the risk and durability requirements for a medical device are very sensitive and asked how the required robustness could be built in when designing for low-volume production. Mr. Schneider responded that robustness is addressed via compliance with all standards, both hardware and software. Key Tech was responsible for developing protocols to meet the required standards. The FDA review has a risk analysis component in place.

Another participant asked Mr. Schneider to explain the medical device in the case study in more detail. Mr. Schneider explained that the enclosure holds a syringe driver that controls the movement of the plunger of a precision syringe. The plunger is moved to deliver precise amounts of a drug on demand during a neurosurgical procedure. The plunger fits into a holder that is driven by an off-the-shelf syringe driver. The syringe driver has a high-precision lead screw to move the plunger back and forth. Accuracy is so critical in this application that a second, redundant system was built in to shut down in the event that the measurements show the syringe pump is inaccurate. The syringe pump itself is fairly large, taking up much of the size of the unit. It is possible to make that pump smaller if it is custom designed, but it is not as cost effective in low volumes to custom-design a pump.

A participant asked if the operating system must be validated each time software is developed or modified, and pointed out that this seems redundant. Mr. Schneider said that from his understanding, yes—every time a change is made to the software, revalidation is necessary.

Another participant asked what Key Tech gives to the manufacturer for production, whether it is an entire digital data set or something else, and how easy it is for the manufacturer to move forward with that information package. Mr. Schneider responded that there is a lot of work associated with programming the microcontrollers. Key Tech does provide digital drawings, including 3D models and 2D drawings. Key Tech also provides design descriptions per FDA requirements that list the design basis and justification.